10. Execution threads

10.1. The Thread class

When an application is launched, it runs in an execution flow called a thread. The .NET class that models a thread is System.Threading.Thread, which has the following definition:

Constructors

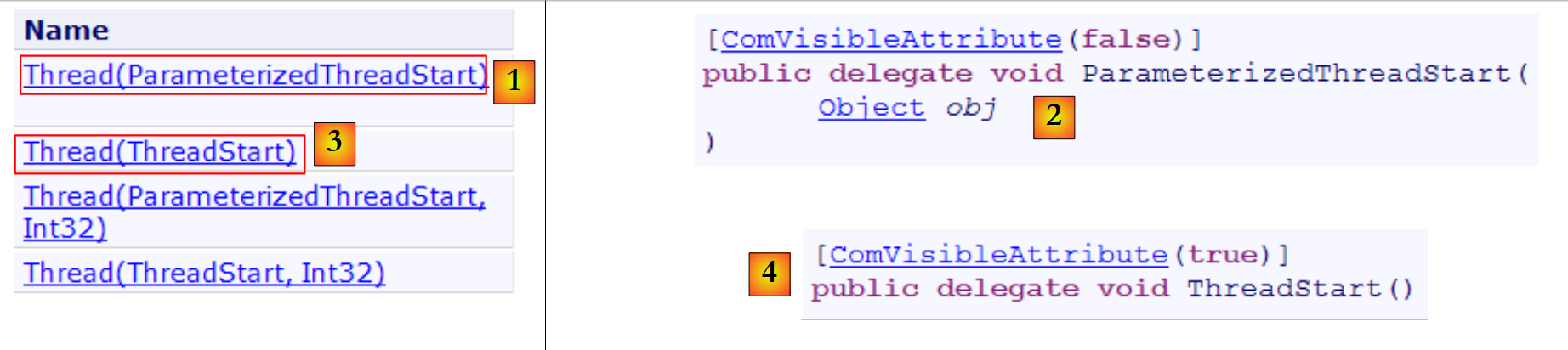

In the following examples, we will only use constructors [1,3]. Constructor [1] takes as its parameter a method with signature [2], i.e. a method with one parameter of type object that returns no result. Constructor [3] takes as its parameter a method with signature [4], i.e. a method with no parameter that returns no result.

Properties

Some useful properties:

- Thread CurrentThread : static property giving a reference to the thread in which the code requesting this property is located

- string Name : thread name

- bool IsAlive : indicates whether the thread is running or not.

Methods

The most commonly used methods are :

- Start(), Start(object obj) : starts the asynchronous execution of the thread, optionally passing it information in an object.

- Abort(), Abort(object obj) : to forcibly terminate a thread

- Join() : the thread T1 that executes T2.Join() is blocked until thread T2 has terminated. There are variants that end the wait after a set time.

- Sleep(int n) : static method - the thread executing the method is suspended for n milliseconds. It then loses the processor, which is given to another thread.
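As a minimal sketch of the timed variant of Join mentioned in the list above: Join(int) takes a maximum wait in milliseconds and returns true if the thread ended within that time, false otherwise. The class JoinTimeoutDemo, the helper WaitBriefly and the durations are assumptions chosen for the illustration.

```csharp
using System;
using System.Threading;

namespace Chap8 {
  static class JoinTimeoutDemo {
    // hypothetical helper: starts a thread that sleeps for workMs milliseconds,
    // then waits at most waitMs milliseconds for it to end
    public static bool WaitBriefly(int workMs, int waitMs) {
      Thread t = new Thread(() => Thread.Sleep(workMs));
      t.Name = "worker";
      t.Start();
      // Join(int) returns true if the thread ended before the timeout
      bool finished = t.Join(waitMs);
      Console.WriteLine("{0} finished in time: {1}, IsAlive: {2}", t.Name, finished, t.IsAlive);
      // unlimited wait so that no thread is left running
      t.Join();
      return finished;
    }

    static void Main() {
      WaitBriefly(50, 2000);  // short task, long wait
      WaitBriefly(1000, 50);  // long task, short wait
    }
  }
}
```

The Boolean returned by Join(int) lets the waiting thread decide what to do when the wait expires, instead of remaining blocked indefinitely.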

Let's take a look at a first application demonstrating the existence of a main thread of execution, the one in which the Main function of a class runs:

using System;

using System.Threading;

namespace Chap8 {

class Program {

static void Main(string[] args) {

// init current thread

Thread main = Thread.CurrentThread;

// display

Console.WriteLine("Thread courant : {0}", main.Name);

// we change the name

main.Name = "main";

// check

Console.WriteLine("Thread courant : {0}", main.Name);

// infinite loop

while (true) {

// display

Console.WriteLine("{0} : {1:hh:mm:ss}", main.Name, DateTime.Now);

// temporary shutdown

Thread.Sleep(1000);

}//while

}

}

}

- line 8: retrieve a reference to the thread in which the [Main] method is running

- lines 10-14: display and modify its name

- lines 17-22: a loop that displays a message every second

- line 21: the thread in which the [Main] method is running is suspended for 1 second

The screen results are as follows:

- line 1: the current thread had no name

- line 2: it now has one

- lines 3-7: display every second

- line 8: the program is interrupted by Ctrl-C.

10.2. Creation of execution threads

It is possible to have applications where pieces of code run "simultaneously" in different execution threads. Saying that threads run simultaneously is often a misnomer. If the machine has only one processor, as is still often the case, the threads share this processor: each has access to it in turn for a short time (a few milliseconds). This gives the illusion of parallel execution. The portion of time allocated to a thread depends on various factors, including its priority, which has a default value but can also be set programmatically. When a thread has the processor, it normally uses it for the full time allotted. However, it can release it early:

- by waiting for an event (Wait, Join)

- by putting itself to sleep for a set period of time (Sleep)

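- A thread T is first created by one of the constructors presented above, for example Thread T = new Thread(Start), where Start is a method with one of the two signatures seen earlier: either no parameter, or a single parameter of type object, and no result in both cases. Creating a thread does not start it.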

- Thread T is started by T.Start() : the method Start passed to T's constructor will then be executed by thread T. The program that executes the T.Start() does not wait for task T to finish: it immediately moves on to the next instruction. This means that two tasks are running in parallel. In many cases, they need to be able to communicate with each other to keep track of the progress of their joint work. This is the problem of thread synchronization.

- Once launched, thread T runs autonomously. It stops when the Start method it executes has finished its work.

- The T thread can be forced to terminate:

- T.Abort() requests the T thread to terminate.

- You can also wait for the end of its execution by T.Join(). This is a blocking instruction: the program executing it is blocked until task T has completed its work. This is a means of synchronization.
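The life cycle described above (creation, Start, Join) can be sketched as follows for both method signatures. The names StartDemo, SetFirst, SetSecond and Run are hypothetical and serve only the illustration; each thread writes to its own field, so no synchronization other than Join is needed.

```csharp
using System;
using System.Threading;

namespace Chap8 {
  static class StartDemo {
    // each thread writes to its own field (hypothetical fields)
    static int first = 0;
    static int second = 0;

    // signature without parameter
    static void SetFirst() {
      first = 1;
    }

    // signature with one parameter of type object
    static void SetSecond(object obj) {
      second = (int)obj;
    }

    public static int Run() {
      Thread t1 = new Thread(SetFirst);   // created, not yet started
      Thread t2 = new Thread(SetSecond);
      t1.Start();                         // non-blocking
      t2.Start(10);                       // 10 is passed to SetSecond
      t1.Join();                          // blocks until t1 has finished
      t2.Join();                          // blocks until t2 has finished
      return first + second;
    }

    static void Main() {
      Console.WriteLine("total = {0}", Run()); // prints "total = 11"
    }
  }
}
```

Reading the two fields only after both Join calls guarantees that the threads have finished writing them.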

Let's take a look at the following program:

using System;

using System.Threading;

namespace Chap8 {

class Program {

public static void Main() {

// init Current thread

Thread main = Thread.CurrentThread;

// name the Thread

main.Name = "Main";

// creation of execution threads

Thread[] tâches = new Thread[5];

for (int i = 0; i < tâches.Length; i++) {

// create thread i

tâches[i] = new Thread(Affiche);

// set the thread name

tâches[i].Name = i.ToString();

// start execution of thread i

tâches[i].Start();

}

// end of Main

Console.WriteLine("Fin du thread {0} à {1:hh:mm:ss}",main.Name,DateTime.Now);

}

public static void Affiche() {

// display start of execution

Console.WriteLine("Début d'exécution de la méthode Affiche dans le Thread {0} : {1:hh:mm:ss}",Thread.CurrentThread.Name,DateTime.Now);

// sleep for 1 s

Thread.Sleep(1000);

// display end of run

Console.WriteLine("Fin d'exécution de la méthode Affiche dans le Thread {0} : {1:hh:mm:ss}", Thread.CurrentThread.Name, DateTime.Now);

}

}

}

- lines 8-10: give a name to the thread executing the [Main] method

- lines 13-21: 5 threads are created and started. The thread references are stored in an array for later retrieval. Each thread executes the Affiche method of lines 27-35.

- line 20: thread no. i is started. This operation is non-blocking. Thread n° i will run in parallel with the [Main] method thread that launched it.

- line 24: the thread executing the [Main] method terminates.

- lines 27-35: the [Affiche] method displays the name of the thread executing it, as well as the start and end times of its execution.

- line 31: any thread executing the [Affiche] method stops for 1 second. The processor is then given to another thread waiting for a processor. At the end of this second, the stopped thread becomes a candidate for the processor again. It will get it when its turn comes. This depends on various factors, including the priority of the other threads waiting for the processor.

The results are as follows:

These results are highly instructive:

- first of all, we can see that starting a thread is not a blocking operation. The Main method started 5 threads in parallel and completed its own execution before them. The operation

// start execution of thread i

tâches[i].Start();

starts the execution of thread tâches[i], but once this has been done, execution continues immediately with the next instruction, without waiting for the thread to finish.

- all the threads created execute the Affiche method. The execution order is unpredictable. Although in the example the order of execution seems to follow the order of the start requests, no general conclusion can be drawn from this. The operating system here has 6 threads and one processor. It distributes the processor among these 6 threads according to its own rules.

- the results show the effect of the Sleep method. In the example, thread 0 is the first to execute the Affiche method. The start-of-execution message is displayed, and then the thread executes Sleep, which suspends it for 1 second. It then loses the processor, which becomes available for another thread. The example shows that thread 1 gets it. Thread 1 follows the same path, as do the other threads. When thread 0's second of sleep is over, its execution can resume. The system gives it the processor and it can finish executing the Affiche method.

Let's modify our program so that the Main method ends with the following instructions:

// end of Main

Console.WriteLine("Fin du thread " + main.Name);

// stop all threads

Environment.Exit(0);

Running the new program gives the following results:

- lines 1-5: the threads created by the Main method begin execution and are suspended for 1 second

- line 6: the [Main] thread recovers the processor and executes the instruction Environment.Exit(0). This instruction stops all threads, not just the Main thread.

If the Main method wants to wait for the threads it has created to finish executing, it can use the Join method of the Thread class:

public static void Main() {

...

// we wait for all threads

for (int i = 0; i < tâches.Length; i++) {

// wait for thread i to finish execution

tâches[i].Join();

}

// end of Main

Console.WriteLine("Fin du thread {0} à {1:hh:mm:ss}", main.Name, DateTime.Now);

}

- line 6: the [Main] thread waits for each of the threads. It is first blocked waiting for thread n° 0, then for thread n° 1, and so on. When it exits the loop of lines 2-5, all 5 threads it started have finished.

The results are as follows:

- line 11: the [Main] thread terminated after the threads it had started.

10.3. The benefits of threads

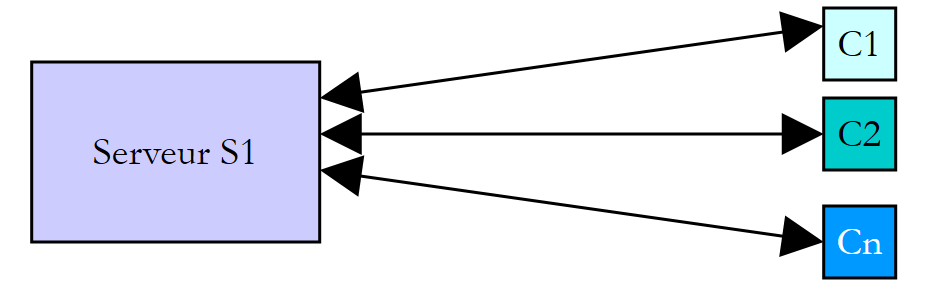

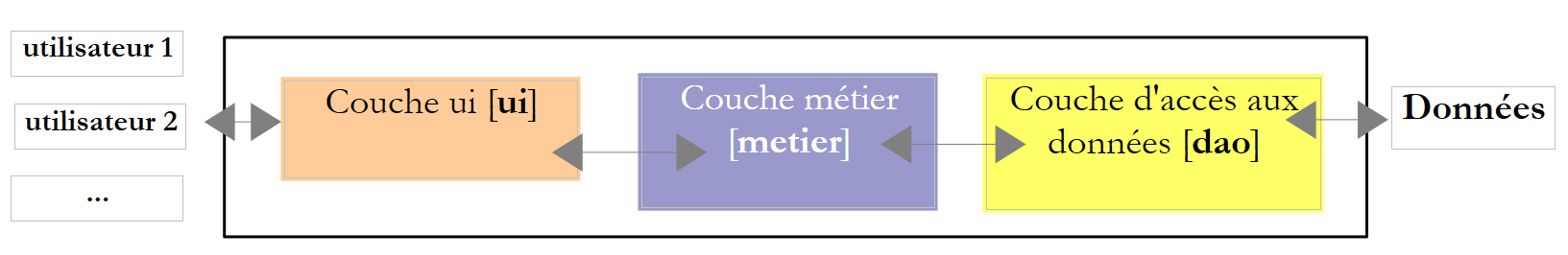

Now that we've highlighted the existence of a default thread, the one that executes the Main method, and we know how to create new ones, let's look at what threads offer us and why we're introducing them here. One type of application lends itself well to the use of threads: Internet client-server applications. We'll introduce them in the following chapter. In an Internet client-server application, a server on machine S1 responds to requests from clients on remote machines C1, C2, ..., Cn.


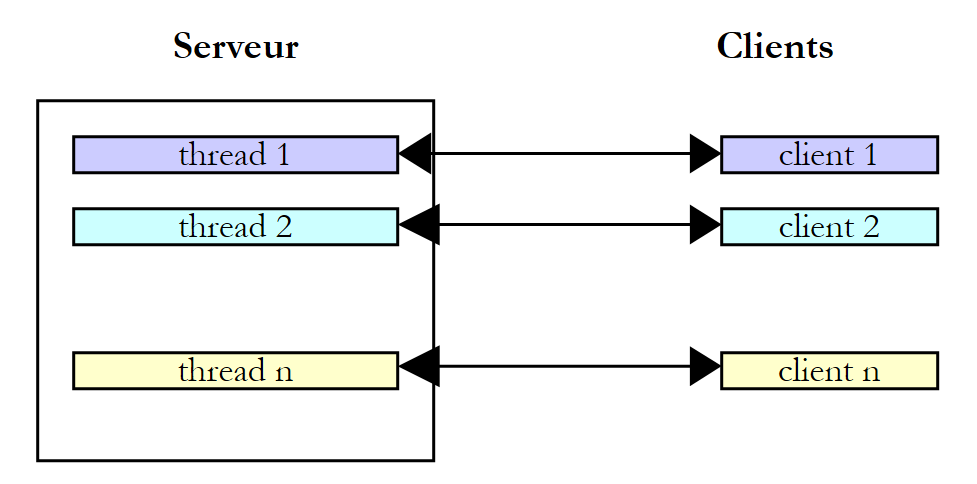

Every day, we use Internet applications corresponding to this diagram: Web services, e-mail, forum consultation, file transfer... In the above diagram, the S1 server must serve the Ci clients simultaneously. If we take the example of an FTP (File Transfer Protocol) server delivering files to its clients, we know that a file transfer can sometimes take several minutes. Of course, it's out of the question for one client to monopolize the server for this length of time. What is usually done is for the server to create as many execution threads as there are clients. Each thread is then responsible for dealing with a particular client. As the processor is shared cyclically between all the machine's active threads, the server spends a little time with each client, ensuring simultaneous service.


In practice, the server uses a thread pool with a limited number of threads, 50 for example. The 51st client is then asked to wait.
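As a rough sketch of the thread pool idea, the following code hands the service of several simulated clients to the .NET ThreadPool rather than creating one thread per client. It is an illustration under assumptions, not a real server: the names PoolDemo, Serve and ServeAll are hypothetical, the "service" is a simple Sleep, and CountdownEvent (used to wait for all work items) requires .NET 4 or later.

```csharp
using System;
using System.Threading;

namespace Chap8 {
  static class PoolDemo {
    // hypothetical handler: stands for the service of one client
    static void Serve(object state) {
      int client = (int)state;
      Thread.Sleep(100); // simulates the work done for the client
      Console.WriteLine("client {0} served by a pool thread", client);
    }

    // serves nbClients simulated clients and returns the number actually served
    public static int ServeAll(int nbClients) {
      bool[] served = new bool[nbClients]; // one slot per client, written by one work item only
      using (CountdownEvent done = new CountdownEvent(nbClients)) {
        for (int i = 0; i < nbClients; i++) {
          // the pool chooses which of its threads runs the work item
          ThreadPool.QueueUserWorkItem(state => {
            Serve(state);
            served[(int)state] = true;
            done.Signal();
          }, i);
        }
        // blocks until every work item has signalled
        done.Wait();
      }
      int count = 0;
      foreach (bool b in served) {
        if (b) count++;
      }
      return count;
    }

    static void Main() {
      Console.WriteLine("{0} clients served", ServeAll(5));
    }
  }
}
```

When all pool threads are busy, additional work items simply wait in the pool's queue, which corresponds to the 51st client being asked to wait.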

10.4. Information exchange between threads

In the previous examples, a thread was initialized with new Thread(Run), where Run was a method with no parameter and no result (signature [4] of section 10.1).

It is also possible to use a method that takes a single parameter of type object and returns no result (signature [2]).

This allows information to be transmitted to the launched thread. For example, t.Start(obj1) launches thread t, which then executes the Run method associated with it at construction, passing it the actual parameter obj1. Here is an example:

using System;

using System.Threading;

namespace Chap8 {

class Program4 {

public static void Main() {

// init Current thread

Thread main = Thread.CurrentThread;

// name the Thread

main.Name = "Main";

// creation of execution threads

Thread[] tâches = new Thread[5];

Data[] data = new Data[5];

for (int i = 0; i < tâches.Length; i++) {

// create thread i

tâches[i] = new Thread(Sleep);

// set the thread name

tâches[i].Name = i.ToString();

// start execution of thread i

tâches[i].Start(data[i] = new Data { Début = DateTime.Now, Durée = i+1 });

}

// we wait for all threads

for (int i = 0; i < tâches.Length; i++) {

// wait for thread i to finish execution

tâches[i].Join();

// result display

Console.WriteLine("Thread {0} terminé : début {1:hh:mm:ss}, durée programmée {2} s, fin {3:hh:mm:ss}, durée effective {4}",

tâches[i].Name,data[i].Début,data[i].Durée,data[i].Fin,(data[i].Fin-data[i].Début));

}

// end of Main

Console.WriteLine("Fin du thread {0} à {1:hh:mm:ss}", main.Name, DateTime.Now);

}

public static void Sleep(object infos) {

// parameter is retrieved

Data data = (Data)infos;

// sleep mode for Duration

Thread.Sleep(data.Durée*1000);

// end of execution

data.Fin = DateTime.Now;

}

}

internal class Data {

// miscellaneous information

public DateTime Début { get; set; }

public int Durée { get; set; }

public DateTime Fin { get; set; }

}

}

- lines 45-50: information of type [Data] passed to threads :

- Début : thread execution start time - set by the launcher thread

- Durée : duration in seconds of the Sleep executed by the launched thread - set by the launcher thread

- Fin : thread execution end time - set by the launched thread

- lines 35-43: the method Sleep executed by threads has the signature void Sleep(object obj). The effective parameter obj will be of type [Data] defined on line 45.

- lines 15-22: creation of 5 threads

- line 17: each thread is associated with the Sleep method on line 35

- line 21: an object of type [Data] is passed to the Start method which launches the thread. This object records the start time of the thread's execution and the duration in seconds for which it must sleep. It is stored in the array of line 14.

- lines 24-30: the [Main] thread waits for all threads it has started to finish.

- lines 28-29 : the [Main] thread retrieves the data[i] object from thread no. i and displays its contents.

- lines 35-42: the method Sleep executed by threads

- line 37: the [Data] type parameter is retrieved

- line 39: the Durée field of the parameter sets the duration of the Sleep

- line 41: the Fin field of the parameter is initialized

The results are as follows:

This example shows that two threads can exchange information:

- the launcher thread can control the execution of the launched thread by giving it information

- the launched thread can return results to the launching thread.

In order for the launching thread to know when the results it is waiting for are available, it must be notified when the launched thread has finished. Here, it waited for it to terminate using the Join method. There are other ways of doing the same thing. We'll look at them later.

10.5. Concurrent access to shared resources

10.5.1. Unsynchronized concurrent access

In the section on exchanging information between threads, the information was exchanged between only two threads and at very specific times. This was classic parameter passing. In other cases, information is shared by several threads, which may wish to read or update it at the same time. This raises the problem of information integrity. Suppose the shared information is a structure S with various information items I1, I2, ..., In.

- a T1 thread begins to update structure S: it modifies field I1 and is interrupted before completing the entire update of structure S

- a T2 thread which retrieves the processor then reads the S structure to make decisions. It reads a structure in an unstable state: some fields are up to date, others not.

This situation is called concurrent access to a shared resource, here the structure S, and it is often quite tricky to manage. Let's take the following example to illustrate the problems that can arise:

- an application will generate n threads, n being passed as a parameter

- the shared resource is a counter that must be incremented by each generated thread

- at the end of the application, the counter value is displayed. We should therefore find n.

The program is as follows:

using System;

using System.Threading;

namespace Chap8 {

class Program {

// class variables

static int cptrThreads = 0; // thread counter

// Main

public static void Main(string[] args) {

// instructions for use

const string syntaxe = "pg nbThreads";

const int nbMaxThreads = 100;

// verification no. of arguments

if (args.Length != 1) {

// error

Console.WriteLine(syntaxe);

// stop

Environment.Exit(1);

}

// argument quality check

int nbThreads = 0;

bool erreur = false;

try {

nbThreads = int.Parse(args[0]);

if (nbThreads < 1 || nbThreads > nbMaxThreads)

erreur = true;

} catch {

// error

erreur = true;

}

// mistake?

if (erreur) {

// error

Console.Error.WriteLine("Nombre de threads incorrect (entre 1 et 100)");

// end

Environment.Exit(2);

}

// thread creation and generation

Thread[] threads = new Thread[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

threads[i] = new Thread(Incrémente);

// naming

threads[i].Name = "" + i;

// launch

threads[i].Start();

}//for

// waiting for threads to finish

for (int i = 0; i < nbThreads; i++) {

threads[i].Join();

}

// counter display

Console.WriteLine("Nombre de threads générés : " + cptrThreads);

}

public static void Incrémente() {

// increases thread counter

// read the counter

int valeur = cptrThreads;

// follow-up

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a lu la valeur du compteur : {2}", DateTime.Now, Thread.CurrentThread.Name, cptrThreads);

// waiting

Thread.Sleep(1000);

// counter incrementation

cptrThreads = valeur + 1;

// follow-up

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a écrit la valeur du compteur : {2}", DateTime.Now, Thread.CurrentThread.Name, cptrThreads);

}

}

}

We won't dwell on the thread generation part, which has already been covered. Instead, let's look at the Incrémente method of line 59, used by each thread to increment the static counter cptrThreads of line 8.

- line 62: the counter is read

- line 66: the thread stops for 1 second. It therefore loses the processor

- line 68: counter is incremented

The second step (the sleep) is only there to force the thread to lose the processor, which is then given to another thread. In practice, there's no guarantee that a thread won't be interrupted between the moment it reads the counter and the moment it increments it. Even if you write cptrThreads++, which gives the illusion of a single instruction, there is a risk of losing the processor between reading the counter value and writing that value incremented by 1. The high-level operation cptrThreads++ is in fact carried out as several elementary instructions at processor level. The one-second sleep is therefore only there to make this risk systematic.

The results obtained with 5 threads are as follows:

Reading these results, it's easy to see what's going on:

- line 1: a first thread reads the counter. It finds 0. It stops for 1 second and loses the processor

- line 2: a second thread takes over the processor and also reads the counter value. It is still at 0, as the previous thread has not yet incremented it. It too stops for 1 second and loses the processor.

- lines 1-5: in 1 s, all 5 threads have time to pass and read the value 0.

- lines 6-10: when they wake up one after the other, they will increment the value 0 they have read and write the value 1 to the counter, as confirmed by the main program (Main) on line 11.

What's the problem? The second thread read a stale value because the first had been interrupted before completing its update of the counter. This brings us to the notion of critical resources and critical sections of a program:

- a critical resource is a resource that can only be held by one thread at a time. Here the critical resource is the counter.

- a critical section of a program is a sequence of instructions in a thread's execution flow during which it accesses a critical resource. It must be ensured that during this critical section, it is the only thread to access the resource.

In our example, the critical section is the code between reading the counter and writing its new value:

// read the counter

int valeur = cptrThreads;

// wait

Thread.Sleep(1000);

// increment the counter

cptrThreads = valeur + 1;

To execute this code, a thread must be guaranteed to be alone. It can be interrupted, but during this interruption, no other thread must be able to execute the same code. The .NET platform offers various tools to ensure that only one thread at a time enters a critical section of code. Let's take a look at some of them.

10.5.2. The lock clause

The lock clause defines a critical section in the form lock (obj) { ... }, where the braces enclose the instructions of the critical section.

obj must be an object reference visible to all threads running the critical section. The lock clause ensures that only one thread at a time executes the critical section. The previous example is rewritten as follows:

using System;

using System.Threading;

namespace Chap8 {

class Program2 {

// class variables

static int cptrThreads = 0; // thread counter

static object synchro = new object(); // synchronization object

// Main

public static void Main(string[] args) {

...

// waiting for threads to finish

Thread.CurrentThread.Name = "Main";

for (int i = nbThreads - 1; i >= 0; i--) {

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} attend la fin du thread {2}", DateTime.Now, Thread.CurrentThread.Name, threads[i].Name);

threads[i].Join();

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a été prévenu de la fin du thread {2}", DateTime.Now, Thread.CurrentThread.Name, threads[i].Name);

}

// counter display

Console.WriteLine("Nombre de threads générés : " + cptrThreads);

}

public static void Incrémente() {

// increases thread counter

// exclusive access to the counter is required

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} attend l'autorisation d'entrer dans la section critique", DateTime.Now, Thread.CurrentThread.Name);

lock (synchro) {

// read the counter

int valeur = cptrThreads;

// follow-up

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a lu la valeur du compteur : {2}", DateTime.Now, Thread.CurrentThread.Name, cptrThreads);

// waiting

Thread.Sleep(1000);

// counter incrementation

cptrThreads = valeur + 1;

// follow-up

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a écrit la valeur du compteur : {2}", DateTime.Now, Thread.CurrentThread.Name, cptrThreads);

}

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} a quitté la section critique", DateTime.Now, Thread.CurrentThread.Name);

}

}

}

- line 9: synchro is the object that synchronizes all threads.

- lines 16-23: the [Main] method waits for threads in reverse order of creation.

- lines 29-40: the critical section of the Incrémente method is enclosed in the lock clause.

The results obtained with 3 threads are as follows:

- thread 0 is the 1st to enter the critical section: lines 1, 2, 6, 8

- the other two threads will be blocked until thread 0 exits the critical section: lines 3 and 4

- thread 1 goes next: lines 7, 9, 10

- thread 2 goes next: lines 11, 12, 13

- line 14: the Main thread waiting for thread 2 to finish is warned

- line 15: thread Main is now waiting for thread 1 to finish. This thread has already finished. The Main thread is notified immediately, line 16.

- lines 17-18: the same process takes place with thread 0

- line 19: number of threads is correct

10.5.3. The Mutex class

The System.Threading.Mutex class can also be used to delimit critical sections. It differs from the lock clause in terms of scope:

- the lock clause synchronizes threads of the same application

- the Mutex class can synchronize threads from different applications.

We will use the following constructor and methods:

- Mutex() : creates a Mutex M

- bool WaitOne() : the thread T1 executing M.WaitOne() requests ownership of the synchronization object M. If the Mutex M is not held by any thread (as is the case at the start), it is "given" to the thread T1 that requested it. If, a little later, a thread T2 performs the same operation, it is blocked, because a Mutex can belong to only one thread. T2 is unblocked when thread T1 releases the Mutex M it holds. Several threads can thus be blocked waiting for the Mutex M.

- void ReleaseMutex() : the thread T1 executing M.ReleaseMutex() relinquishes ownership of the Mutex M. When thread T1 loses the processor, the system can give it to one of the threads waiting for the Mutex M. Only one of them obtains it; the others waiting for M remain blocked.

A Mutex M manages access to a shared resource R. A thread requests resource R with M.WaitOne() and gives it back with M.ReleaseMutex(). A critical section of code that must be executed by only one thread at a time is a shared resource. Execution of the critical section can be synchronized by calling M.WaitOne() just before the critical section and M.ReleaseMutex() just after it,

where M is a Mutex object. Don't forget to release a Mutex that is no longer needed so that another thread can enter the critical section; otherwise, the threads waiting for the never-released Mutex will remain blocked forever.

If we apply what we've just seen to the previous example, our application becomes the following:

using System;

using System.Threading;

namespace Chap8 {

class Program3 {

// class variables

static int cptrThreads = 0; // thread counter

static Mutex synchro = new Mutex(); // synchronization object

// Main

public static void Main(string[] args) {

...

}

public static void Incrémente() {

....

synchro.WaitOne();

try {

...

} finally {

...

synchro.ReleaseMutex();

}

}

}

}

- line 9: the thread synchronization object is now a Mutex.

- line 18: start of the critical section - only one thread may enter. The thread is blocked until the Mutex synchro is free.

- line 33: because a Mutex must always be released, exception or not, we manage the critical section with a try / finally in order to release the Mutex in the finally clause.

- line 23: the Mutex is released once the critical section has been passed.

The results are the same as before.

10.5.4. The AutoResetEvent class

An AutoResetEvent object is a barrier that lets only one thread through at a time, like the two previous tools lock and Mutex. An AutoResetEvent is built with the constructor AutoResetEvent(bool initialState).

The Boolean initialState indicates whether the barrier is initially closed (false) or open (true). A thread wishing to pass the barrier indicates it by calling the barrier's WaitOne() method:

- if the barrier is open, the thread passes through and the barrier closes behind it. If several threads were waiting, we can be sure that only one will pass.

- if the barrier is closed, the thread is blocked. Another thread will open it when the time is right. This time depends entirely on the problem being addressed. The barrier is opened by its Set() method.

It may happen that a thread wants to close a barrier. It can do so with the barrier's Reset() method.

If, in the previous example, we replace the Mutex object by an object of type AutoResetEvent, the code becomes:

using System;

using System.Threading;

namespace Chap8 {

class Program4 {

// class variables

static int cptrThreads = 0; // thread counter

static EventWaitHandle synchro = new AutoResetEvent(false); // synchronization object

// Main

public static void Main(string[] args) {

....

// we open the critical section barrier

Console.WriteLine("A {0:hh:mm:ss}, le thread {1} ouvre la barrière de la section critique", DateTime.Now, Thread.CurrentThread.Name);

synchro.Set();

// waiting for threads to finish

...

// counter display

Console.WriteLine("Nombre de threads générés : " + cptrThreads);

}

public static void Incrémente() {

// increases thread counter

// exclusive access to the counter is required

...

synchro.WaitOne();

try {

...

} finally {

// release the resource

...

synchro.Set();

}

}

}

}

- line 9: the barrier is created closed. It will be opened by the Main method on line 16.

- line 27: the thread responsible for incrementing the thread counter requests authorization to enter the critical section. The various threads accumulate in front of the closed barrier. When the Main method opens it, one of the waiting threads passes.

- line 33: when the thread has finished its work, it reopens the barrier, allowing another thread to enter.

The results are similar to the previous ones.

10.5.5. The Interlocked class

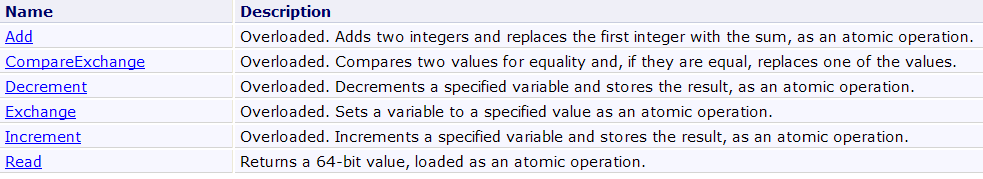

The Interlocked class makes it possible to make an operation atomic. Within an atomic group of operations, either all operations are executed by the thread running the group, or none at all: the group is never left in a state where some have been executed and others not. The synchronization tools lock, Mutex and AutoResetEvent are all designed to make a group of operations atomic. They achieve this by blocking threads. The Interlocked class avoids blocking threads for simple but frequent operations. It offers the following static methods:

The Increment method has the following signature: public static int Increment(ref int location).

It increments the value stored at location. The operation is guaranteed to be atomic.

Our thread counting program can then be as follows:

using System;

using System.Threading;

namespace Chap8 {

class Program5 {

// class variables

static int cptrThreads = 0; // thread counter

// Main

public static void Main(string[] args) {

...

}

public static void Incrémente() {

// increments the thread counter

Interlocked.Increment(ref cptrThreads);

}

}

}

- line 17: the thread counter is incremented atomically.
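To make the benefit more visible, here is a minimal sketch in which several threads each increment a shared counter many times with Interlocked.Increment. The names InterlockedDemo, Work and Count, as well as the numbers of threads and increments, are assumptions chosen for the illustration.

```csharp
using System;
using System.Threading;

namespace Chap8 {
  static class InterlockedDemo {
    // counter shared by all threads
    static int counter = 0;

    // executed by each thread: increments the counter n times
    static void Work(object obj) {
      int n = (int)obj;
      for (int i = 0; i < n; i++) {
        // atomic read-modify-write: no increment is lost
        Interlocked.Increment(ref counter);
      }
    }

    // hypothetical helper: runs nbThreads threads and returns the final counter value
    public static int Count(int nbThreads, int perThread) {
      counter = 0;
      Thread[] threads = new Thread[nbThreads];
      for (int i = 0; i < nbThreads; i++) {
        threads[i] = new Thread(Work);
        threads[i].Start(perThread);
      }
      for (int i = 0; i < nbThreads; i++) {
        threads[i].Join();
      }
      return counter;
    }

    static void Main() {
      Console.WriteLine("counter = {0}", Count(8, 10000)); // prints "counter = 80000"
    }
  }
}
```

If Interlocked.Increment(ref counter) were replaced by counter++, some increments could be lost and the final value could be lower than expected, for the reasons given in section 10.5.1.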

10.6. Concurrent access to multiple shared resources

10.6.1. An example

In our previous examples, a single resource was shared by the different threads. The situation can become more complicated if there are several resources and they depend on each other. This can lead to a deadlock, a situation in which two threads wait for each other. Consider the following actions, which follow each other in time:

- a thread T1 obtains ownership of a Mutex M1 to access a shared resource R1

- a thread T2 obtains ownership of a Mutex M2 to access a shared resource R2

- thread T1 requests Mutex M2. It is blocked.

- thread T2 requests Mutex M1. It is blocked.

Here, threads T1 and T2 wait for each other. This case arises when threads need two shared resources, resource R1 controlled by Mutex M1 and resource R2 controlled by Mutex M2. One possible solution is to request both resources at the same time, using a single Mutex M. But this isn't always possible, for example if it would tie up an expensive resource for a long time. Another solution is for a thread that holds M1 and cannot obtain M2 to release M1 in order to avoid the deadlock.
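The second solution can be sketched with the timed variant WaitOne(int) of the Mutex class, which returns false if the Mutex was not obtained within the given number of milliseconds. The names TwoMutexDemo and TryAcquireBoth and the 100 ms timeout are assumptions made for the illustration; the retry policy after a failure is left to the caller.

```csharp
using System;
using System.Threading;

namespace Chap8 {
  static class TwoMutexDemo {
    // hypothetical helper: requests m1 then m2;
    // returns true if the calling thread now owns both, false if it owns neither
    public static bool TryAcquireBoth(Mutex m1, Mutex m2, int timeoutMs) {
      m1.WaitOne();
      // WaitOne(int) returns false if m2 was not obtained in time
      if (m2.WaitOne(timeoutMs)) {
        return true;
      }
      // m2 is held by another thread: release m1 rather than wait forever
      m1.ReleaseMutex();
      return false;
    }

    static void Main() {
      Mutex m1 = new Mutex();
      Mutex m2 = new Mutex();
      if (TryAcquireBoth(m1, m2, 100)) {
        try {
          Console.WriteLine("both resources obtained");
        } finally {
          // release in the reverse order of acquisition
          m2.ReleaseMutex();
          m1.ReleaseMutex();
        }
      } else {
        Console.WriteLine("M2 busy: M1 released, retry later");
      }
    }
  }
}
```

A thread that receives false holds neither Mutex, so the thread that owns M2 can obtain M1 and make progress.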

- We have an array in which some threads deposit data (writers) and others read it (readers).

- Writers are equal but exclusive: only one writer at a time can deposit data in the array.

- Readers are equal but exclusive: only one reader at a time can read the data deposited in the array.

- A reader can only read the data in the array once a writer has deposited data in it, and a writer can only deposit new data in the array once the data in it has been read by a reader.

Two shared resources can be distinguished:

- the array for writing: only one writer at a time can have access to it.

- the array for reading: only one reader at a time can have access to it.

and an order of use for these resources:

- a reader must always come after a writer.

- a writer must always come after a reader, except the 1st time.

Access to these two resources can be controlled with two barriers of type AutoResetEvent :

- the barrier peutEcrire controls writers' access to the array.

- the barrier peutLire controls readers' access to the array.

- the barrier peutEcrire is created initially open, allowing a first writer to pass through and blocking all others.

- the barrier peutLire is created initially closed, blocking all readers.

- when a writer has finished its work, it opens the barrier peutLire to let a reader in.

- when a reader has finished its work, it opens the barrier peutEcrire to let a writer in.

The program illustrating this event-driven synchronization is as follows:

using System;

using System.Threading;

namespace Chap8 {

class Program {

// use of reader and writer threads

// illustrates the use of synchronization events

// class variables

static int[] data = new int[3]; // resource shared between reader and writer threads

static Random objRandom = new Random(DateTime.Now.Second); // a random number generator

static AutoResetEvent peutLire; // indicates that the contents of data can be read

static AutoResetEvent peutEcrire; // indicates that the contents of data can be written

//hand

public static void Main(string[] args) {

// number of threads to generate

const int nbThreads = 2;

// flag initialization

peutLire = new AutoResetEvent(false); // cannot be read yet

peutEcrire = new AutoResetEvent(true); // we can already write

// creation of reader threads

Thread[] lecteurs = new Thread[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

lecteurs[i] = new Thread(Lire);

lecteurs[i].Name = "L" + i.ToString();

// launch

lecteurs[i].Start();

}

// creating writer threads

Thread[] écrivains = new Thread[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

écrivains[i] = new Thread(Ecrire);

écrivains[i].Name = "E" + i.ToString();

// launch

écrivains[i].Start();

}

// end of Main

Console.WriteLine("Fin de Main...");

}

// read the contents of the table

public static void Lire() {

...

}

// write in the table

public static void Ecrire() {

....

}

}

}

- line 11: the array data is the resource shared between reader and writer threads. It is shared for reading by reader threads and for writing by writer threads.

- line 13: the object peutLire is used to tell reader threads that they can read the array data. It is set to true by the writer thread that has filled the array data. It is initialized to false on line 23: a writer thread must first fill the array before setting the event peutLire to true.

- line 14: the object peutEcrire is used to tell writer threads that they can write to the array data. It is set to true by the reader thread that has read the whole array data. It is initialized to true on line 24: the array data is free for writing.

- lines 27-34: create and launch the reader threads

- lines 37-44: create and launch the writer threads

The method Lire executed by reader threads is as follows:

public static void Lire() {

// follow-up

Console.WriteLine("Méthode [Lire] démarrée par le thread n° {0}", Thread.CurrentThread.Name);

// we have to wait for reading authorization

peutLire.WaitOne();

// table reading

for (int i = 0; i < data.Length; i++) {

//wait 1 s

Thread.Sleep(1000);

// display

Console.WriteLine("{0:hh:mm:ss} : Le lecteur {1} a lu le nombre {2}", DateTime.Now, Thread.CurrentThread.Name, data[i]);

}

// we can write

peutEcrire.Set();

// follow-up

Console.WriteLine("Méthode [Lire] terminée par le thread n° {0}", Thread.CurrentThread.Name);

}

- line 5: we wait for a writer thread to signal that the array has been filled. When this signal is received, only one of the reader threads waiting for it can pass.

- lines 7-12: the array data is read, with a Sleep in the middle to force the thread to give up the processor.

- line 14: tells writer threads that the array has been read and can be refilled.

The method Ecrire executed by the writer threads is as follows:

public static void Ecrire() {

// follow-up

Console.WriteLine("Méthode [Ecrire] démarrée par le thread n° {0}", Thread.CurrentThread.Name);

// we have to wait for write authorization

peutEcrire.WaitOne();

// writing table

for (int i = 0; i < data.Length; i++) {

//wait 1 s

Thread.Sleep(1000);

// write a random number, then display it

data[i] = objRandom.Next(0, 1000);

Console.WriteLine("{0:hh:mm:ss} : L'écrivain {1} a écrit le nombre {2}", DateTime.Now, Thread.CurrentThread.Name, data[i]);

}

// reading is now allowed

peutLire.Set();

// follow-up

Console.WriteLine("Méthode [Ecrire] terminée par le thread n° {0}", Thread.CurrentThread.Name);

}

- line 5: we wait for a reader thread to signal that the array has been read. When this signal is received, only one of the writer threads waiting for it can pass.

- lines 7-13: the array data is filled, with a Sleep in the middle to force the thread to give up the processor.

- line 15: tells reader threads that the array has been filled and can be read again.

Execution gives the following results:

The following points are worth noting:

- there is only one reader at a time, even though it gives up the processor inside the critical section of Lire

- there is only one writer at a time, even though it gives up the processor inside the critical section of Ecrire

- a reader only reads when there is something to read in the array

- a writer does not write until the array has been fully read

10.6.2. The Monitor class

In the previous example :

- there are two shared resources to manage

- for a given resource, threads are equal.

When writer threads are blocked on peutEcrire.WaitOne, the operation peutEcrire.Set releases one of them, no matter which. If instead the barrier must be opened for one particular writer, things get more complicated.

The analogy is with an establishment serving the public at counters, where each counter is specialized. When customers arrive, they take a ticket for counter X from the ticket dispenser and then take a seat. Each ticket is numbered, and customers are called by number over a loudspeaker. While waiting, customers can do as they please: read, or doze off. Each time the loudspeaker announces that number Y is called to counter X, every customer is woken up. The customer whose number it is gets up and goes to counter X; the others go back to what they were doing.

We can proceed in a similar way here. Take the writers, for example:

- the writer threads are blocked.

- the thread that was reading the array tells the writers that the array is available. It, or another thread, designates the writer thread that is allowed to pass the barrier.

- each thread checks whether it is the chosen one. If so, it passes the barrier. If not, it goes back to waiting.

The class Monitor is used to implement this scenario.

We now describe a standard construction (pattern), proposed in the chapter Threading of the book C# 3.0 referred to in the introduction to this document, capable of solving barrier problems with entry conditions.

- First of all, threads that share a resource (the counter, etc.) access it via an object that we'll call a token. To open the gate leading to the counter, you need to have the token to open it, and there is only one token. Threads must therefore pass the token between themselves.

- To get to the counter, threads first request the token:

If the token is free, it is given to the thread that executed the previous operation; otherwise the thread is put on hold until the token becomes available.

- If access to the counter is unordered, i.e. if it does not matter who enters, the previous operation is sufficient: the thread with the token goes to the counter. If access is ordered, the thread with the token checks that it meets the condition for going to the counter:

If the thread is not the one expected at the counter, it gives up its turn by returning the token and enters a blocked state. It will be woken up when another thread signals, with Monitor.Pulse or Monitor.PulseAll, that something has changed. Once it has the token again, it will check again whether it meets the condition for going to the counter. The operation Monitor.Wait(token), which releases the token, can only be performed by a thread that owns the token. Otherwise an exception is thrown.

- The thread that satisfies the condition goes to the counter:

// counter work

....

Before leaving the counter, the thread must return its token, otherwise threads blocked waiting for it will remain blocked indefinitely. There are two different situations:

- the first situation is where the thread holding the token is also the one to signal to threads waiting for the token that it is free. It will do this as follows:

On line 6, it wakes up the threads waiting for the token. This means they become eligible to receive the token; it does not mean they receive it immediately. On line 8, the token is released. All eligible threads will receive the token in turn, in an undetermined order. This gives them the opportunity to check again whether they meet the access condition. The thread that released the token modified this condition on line 4 to allow a new thread to enter. The first thread that satisfies this condition keeps the token and goes to the counter in turn.

- the second situation is where the thread holding the token is not the one to signal to threads waiting for the token that it is free. It must, however, release it, because the thread responsible for sending this signal must be the token holder. It will do so using the operation :

The token is now available, but the threads waiting for it (they have performed a Wait(token) operation) are not notified. This task is entrusted to another thread, which at some point will execute code similar to the following:

In the end, the standard construction proposed in the chapter Threading of the book C# 3.0 is as follows:

- define counter access token :

- request access to the counter :

lock(jeton){

while (jeNeSuisPasCeluiQuiEstAttendu)

Monitor.Wait(jeton);

}

// go to the counter

...

is equivalent to

Note that in this scheme the token is released immediately, as soon as the barrier is passed. Another thread can then test the access condition. The previous construction therefore lets in all threads that satisfy the access condition. If this is not what you want, you can write:

lock(jeton){

while (jeNeSuisPasCeluiQuiEstAttendu)

Monitor.Wait(jeton);

// go to the counter

...

}

where the token is released only after passing through the counter.

- modify counter access conditions and notify other threads

lock(jeton){

// change the condition of access to the counter

...

// warn the threads waiting for the token

Monitor.PulseAll(jeton);

}

Above, the access condition can only be modified by the thread holding the token. You can also write :

// change the condition of access to the counter

...

// warn the threads waiting for the token

Monitor.PulseAll(jeton);

// release the token

Monitor.Exit(jeton);

if the thread already has the token.
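The whole construction can be tried on a small scale before applying it to readers and writers. The sketch below is only an illustration under stated assumptions: the class OrderedCounter, the ticket numbers and the method names are hypothetical and do not come from the book or from the application that follows. Customers are started in reverse ticket order, yet they are served in ticket order.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

static class OrderedCounter {
    static readonly object token = new object();          // the single token
    static int nextTicket = 0;                             // access condition: whose turn it is
    static readonly List<int> served = new List<int>();   // order of passage at the counter

    // A customer holding ticket number 'state' goes to the counter when its turn comes.
    static void Customer(object state) {
        int ticket = (int)state;
        lock (token) {
            // not my turn: give the token back and wait to be woken up
            while (ticket != nextTicket) {
                Monitor.Wait(token);
            }
            // counter work, done while still holding the token
            served.Add(ticket);
            // change the access condition and wake up all waiting threads
            nextTicket++;
            Monitor.PulseAll(token);
        }
    }

    // Starts 'count' customers in reverse ticket order and returns the order in which they were served.
    public static List<int> Run(int count) {
        lock (token) { nextTicket = 0; served.Clear(); }
        Thread[] threads = new Thread[count];
        for (int i = 0; i < count; i++) {
            threads[i] = new Thread(Customer);
            threads[i].Start(count - 1 - i);
        }
        foreach (Thread t in threads) t.Join();
        return new List<int>(served);
    }

    static void Main() {
        Console.WriteLine(string.Join(" ", Run(5))); // 0 1 2 3 4
    }
}
```

The condition is tested in a while loop rather than an if, because a woken thread has no guarantee that it is the one expected at the counter.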

Armed with this information, we can rewrite the readers/writers application, setting an order for readers and writers to access their respective counters. The code is as follows:

using System;

using System.Threading;

namespace Chap8 {

class Program2 {

// use of reader and writer threads

// illustrates the use of synchronization events

// class variables

static int[] data = new int[3]; // resource shared between reader and writer threads

static Random objRandom = new Random(DateTime.Now.Second); // a random number generator

static object peutLire = new object(); // token controlling read access to data

static object peutEcrire = new object(); // token controlling write access to data

static bool lectureAutorisée = false; // to authorize the reading of the array

static bool écritureAutorisée = false; // to authorize writing to the array

static string[] ordreLecture; // sets the order of readers

static string[] ordreEcriture; // sets the order of writers

static int lecteurSuivant = 0; // index of the next reader

static int écrivainSuivant = 0; // index of the next writer

// Main

public static void Main(string[] args) {

// number of threads to generate

const int nbThreads = 5;

// creation of reader threads

Thread[] lecteurs = new Thread[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

lecteurs[i] = new Thread(Lire);

lecteurs[i].Name = "L" + i.ToString();

// launch

lecteurs[i].Start();

}

// create reading order

ordreLecture = new string[nbThreads];

for (int i = 0; i < nbThreads; i++) {

ordreLecture[i] = lecteurs[nbThreads - i - 1].Name;

Console.WriteLine("Le lecteur {0} est en position {1}", ordreLecture[i], i);

}

// creating writer threads

Thread[] écrivains = new Thread[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

écrivains[i] = new Thread(Ecrire);

écrivains[i].Name = "E" + i.ToString();

// launch

écrivains[i].Start();

}

// creation of writing order

ordreEcriture = new string[nbThreads];

for (int i = 0; i < nbThreads; i++) {

ordreEcriture[i] = écrivains[i].Name;

Console.WriteLine("L'écrivain {0} est en position {1}", ordreEcriture[i], i);

}

// write authorization

lock (peutEcrire) {

écritureAutorisée = true;

Monitor.PulseAll(peutEcrire);

}

// end of Main

Console.WriteLine("Fin de Main...");

}

// read the contents of the table

public static void Lire() {

...

}

// write in the table

public static void Ecrire() {

...

}

}

}

Access to the reading counter is governed by the following elements:

- line 13: the token peutLire

- line 15: the Boolean lectureAutorisée

- line 17: the ordered array of readers. Readers go to the reading counter in the order of this array, which contains their names.

- line 19: lecteurSuivant is the index of the next reader authorized to go to the counter.

Access to the writing counter is governed by the following elements:

- line 14: the token peutEcrire

- line 16: the Boolean écritureAutorisée

- line 18: the ordered array of writers. Writers go to the writing counter in the order of this array, which contains their names.

- line 20: écrivainSuivant is the index of the next writer authorized to go to the counter.

The other elements of the code are as follows:

- lines 29-36: create and launch the reader threads. They will all be blocked because reading is not authorized (line 15).

- lines 39-43: their order of passage at the counter will be the reverse of their order of creation.

- lines 46-53: create and launch the writer threads. They will all be blocked because writing is not authorized (line 16).

- lines 56-60: their order of passage at the counter will be their order of creation.

- line 64: writing is authorized

- line 65: the writers are warned that something has changed.

The method Lire is as follows:

public static void Lire() {

// follow-up

Console.WriteLine("Méthode [Lire] démarrée par le thread n° {0}", Thread.CurrentThread.Name);

// we have to wait for reading authorization

lock (peutLire) {

while (!lectureAutorisée || ordreLecture[lecteurSuivant] != Thread.CurrentThread.Name) {

Monitor.Wait(peutLire);

}

// table reading

for (int i = 0; i < data.Length; i++) {

//wait 1 s

Thread.Sleep(1000);

// display

Console.WriteLine("{0:hh:mm:ss} : Le lecteur {1} a lu le nombre {2}", DateTime.Now, Thread.CurrentThread.Name, data[i]);

}

// next reader

lectureAutorisée = false;

lecteurSuivant++;

// writers are warned that they can write

lock (peutEcrire) {

écritureAutorisée = true;

Monitor.PulseAll(peutEcrire);

}

// follow-up

Console.WriteLine("Méthode [Lire] terminée par le thread n° {0}", Thread.CurrentThread.Name);

}

}

- all access to the counter is controlled by the lock of lines 5-27. The reader that obtains the token keeps it throughout its time at the counter

- lines 6-8: a reader that has acquired the token on line 5 releases it if reading is not authorized or if it is not its turn.

- lines 10-15: time at the counter (reading the array)

- lines 17-18: the thread changes the conditions of access to the reading counter. Note that it still holds the read token and that these changes cannot yet let another reader through.

- lines 20-23: the thread changes the conditions of access to the writing counter and warns all waiting writers that something has changed.

- line 27: the lock ends and the token peutLire is released. A reader thread could then acquire it on line 5, but it would not pass the access condition, since the Boolean lectureAutorisée is false. In addition, all threads waiting on the token peutLire keep waiting, since PulseAll(peutLire) has not yet taken place.

The method Ecrire is as follows:

public static void Ecrire() {

// follow-up

Console.WriteLine("Méthode [Ecrire] démarrée par le thread n° {0}", Thread.CurrentThread.Name);

// we have to wait for write authorization

lock (peutEcrire) {

while (!écritureAutorisée || ordreEcriture[écrivainSuivant] != Thread.CurrentThread.Name) {

Monitor.Wait(peutEcrire);

}

// writing table

for (int i = 0; i < data.Length; i++) {

//wait 1 s

Thread.Sleep(1000);

// write a random number, then display it

data[i] = objRandom.Next(0, 1000);

Console.WriteLine("{0:hh:mm:ss} : L'écrivain {1} a écrit le nombre {2}", DateTime.Now, Thread.CurrentThread.Name, data[i]);

}

// next writer

écritureAutorisée = false;

écrivainSuivant++;

// readers waiting for the peutLire token are woken up

lock (peutLire) {

lectureAutorisée = true;

Monitor.PulseAll(peutLire);

}

// follow-up

Console.WriteLine("Méthode [Ecrire] terminée par le thread n° {0}", Thread.CurrentThread.Name);

}

}

- all access to the writing counter is controlled by the lock of lines 5-27. The writer that obtains the token keeps it throughout its time at the counter

- lines 6-8: a writer that has acquired the token on line 5 releases it if writing is not authorized or if it is not its turn.

- lines 10-16: time at the counter (filling the array)

- lines 18-19: the thread changes the conditions of access to the writing counter. Note that it still holds the write token and that these changes cannot yet let another writer through.

- lines 21-24: the thread changes the conditions of access to the reading counter and warns all waiting readers that something has changed.

- line 27: the lock ends and the token peutEcrire is released. A writer thread could then acquire it on line 5, but it would not pass the access condition, since the Boolean écritureAutorisée is false. In addition, all threads waiting on the token peutEcrire keep waiting until a new PulseAll(peutEcrire) takes place.

An example of execution is as follows:

10.7. Thread pools

Until now, to manage threads:

- we created them with Thread T=new Thread(...)

- then started them with T.Start()

We saw in the "Databases" chapter that with some DBMSs it was possible to have pools of open connections:

- n connections are opened at pool startup

- when a thread requests a connection, it is given one of the open connections in the pool

- when the thread closes the connection, it is not closed but returned to the pool

The use of a connection pool is transparent to the code. The advantage lies in improved performance: opening a connection is expensive. Here, 10 open connections can serve hundreds of requests.

A similar system exists for threads:

- min threads are created at pool startup. The value of min is set with ThreadPool.SetMinThreads(min1,min2). A thread pool can execute two kinds of tasks: blocking tasks and asynchronous I/O tasks. The first parameter min1 sets the number of worker threads, which run the blocking tasks; the second, min2, sets the number of threads for asynchronous I/O. The current values can be obtained with ThreadPool.GetMinThreads(out min1,out min2).

- if this number is not sufficient, the pool creates other threads to respond to requests, up to a limit of max threads. The value of max is set with ThreadPool.SetMaxThreads(max1,max2). Both parameters have the same meaning as in SetMinThreads. The current values can be obtained with ThreadPool.GetMaxThreads(out max1,out max2). When max1 threads are in use, further blocking tasks are queued until a thread in the pool becomes free.
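Both SetMinThreads and SetMaxThreads return a Boolean telling whether the pool accepted the new values. The .NET documentation states, for example, that a maximum smaller than the number of processors is refused. The following sketch shows one way to check this; the class PoolLimits and the method TrySetMinWorkers are hypothetical names, and the values 3 and 5 are simply those used in this section.

```csharp
using System;
using System.Threading;

static class PoolLimits {
    // Tries to set the minimum number of worker threads and reports what the pool actually uses.
    public static bool TrySetMinWorkers(int wanted, out int actual) {
        int workers, io;
        ThreadPool.GetMinThreads(out workers, out io);
        // keep the I/O value unchanged, change only the worker threads
        bool accepted = ThreadPool.SetMinThreads(wanted, io);
        ThreadPool.GetMinThreads(out actual, out io);
        return accepted;
    }

    static void Main() {
        int actual;
        bool accepted = TrySetMinWorkers(3, out actual);
        Console.WriteLine("SetMinThreads(3, ...) accepted: {0}, minimum is now {1}", accepted, actual);
        int maxWorkers, maxIo;
        ThreadPool.GetMaxThreads(out maxWorkers, out maxIo);
        // may be refused, e.g. when 5 is smaller than the number of processors
        bool maxAccepted = ThreadPool.SetMaxThreads(5, maxIo);
        Console.WriteLine("SetMaxThreads(5, ...) accepted: {0}", maxAccepted);
    }
}
```

Testing the return value avoids assuming that the pool really has the size requested.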

A thread pool offers a number of advantages:

- as with the connection pool, we save on thread creation time: 10 threads can serve hundreds of requests.

- we secure the application: by setting a maximum number of threads, we avoid suffocating the application with too many requests. Excess requests are placed in a queue.

To assign a task to a thread in the pool, use one of two methods:

- ThreadPool.QueueUserWorkItem(WaitCallback)

- ThreadPool.QueueUserWorkItem(WaitCallback,object)

where WaitCallback is any method with the signature void WaitCallback(object). Method 1 asks a thread of the pool to execute the method WaitCallback without passing it a parameter. Method 2 does the same thing, but passes a parameter of type object to the method WaitCallback.
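The difference between the two forms can be seen in a few lines. This is a minimal sketch, assuming .NET 4 or later for the class CountdownEvent, which is used here only to wait for both tasks; the class QueueDemo and the string "task 2" are hypothetical.

```csharp
using System;
using System.Threading;

static class QueueDemo {
    // Queues two work items and returns what each callback received as its parameter.
    public static string[] Run() {
        string[] received = new string[2];
        CountdownEvent done = new CountdownEvent(2);
        // form 1: no state object, the callback receives null
        ThreadPool.QueueUserWorkItem(state => {
            received[0] = state == null ? "null" : state.ToString();
            done.Signal();
        });
        // form 2: the second argument is handed to the callback
        ThreadPool.QueueUserWorkItem(state => {
            received[1] = state == null ? "null" : state.ToString();
            done.Signal();
        }, "task 2");
        // block until both callbacks have run
        done.Wait();
        return received;
    }

    static void Main() {
        string[] r = Run();
        Console.WriteLine("{0} / {1}", r[0], r[1]); // null / task 2
    }
}
```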

The following program illustrates these concepts:

using System;

using System.Threading;

namespace Chap8 {

class Program {

public static void Main() {

// init Current thread

Thread main = Thread.CurrentThread;

// name the Thread

main.Name = "Main";

// we use a thread pool

int min1, min2;

// set the minimum number of blocking threads

ThreadPool.GetMinThreads(out min1, out min2);

Console.WriteLine("Nombre minimum de tâches bloquantes dans le pool : {0}", min1);

Console.WriteLine("Nombre minimum de tâches asynchrones dans le pool : {0}", min2);

ThreadPool.SetMinThreads(3, min2);

ThreadPool.GetMinThreads(out min1, out min2);

Console.WriteLine("Nombre minimum de tâches bloquantes dans le pool après changement : {0}", min1);

// set the maximum number of blocking threads

int max1, max2;

ThreadPool.GetMaxThreads(out max1, out max2);

Console.WriteLine("Nombre maximum de tâches bloquantes dans le pool : {0}", max1);

Console.WriteLine("Nombre maximum de tâches asynchrones dans le pool : {0}", max2);

ThreadPool.SetMaxThreads(5, max2);

ThreadPool.GetMaxThreads(out max1, out max2);

Console.WriteLine("Nombre maximum de tâches bloquantes dans le pool après changement : {0}", max1);

// 7 threads are executed

for (int i = 0; i < 7; i++) {

// start execution of thread i in a pool

ThreadPool.QueueUserWorkItem(Sleep, new Data2 { Numéro = i.ToString(), Début = DateTime.Now, Durée = i + 10 });

}

// end of Main

Console.Write("Tapez [entrée] pour terminer le thread {0} à {1:hh:mm:ss:FF}", main.Name, DateTime.Now);

// waiting

Console.ReadLine();

}

public static void Sleep(object infos) {

// parameter is retrieved

Data2 data = infos as Data2;

Console.WriteLine("A {2:hh:mm:ss:FF}, le thread n° {0} va dormir pendant {1} seconde(s)", data.Numéro, data.Durée,DateTime.Now);

// pool status

int cpt1, cpt2;

ThreadPool.GetAvailableThreads(out cpt1, out cpt2);

Console.WriteLine("Nombre de threads pour tâches bloquantes disponibles dans le pool : {0}", cpt1);

// sleep mode for Duration

Thread.Sleep(data.Durée * 1000);

// end of execution

data.Fin = DateTime.Now;

Console.WriteLine("A {3:hh:mm:ss:FF}, le thread n° {0} se termine. Il était programmé pour durer {1} seconde(s). Il a duré {2} seconde(s)", data.Numéro, data.Durée, data.Fin - data.Début,DateTime.Now);

}

}

internal class Data2 {

// miscellaneous information

public string Numéro { get; set; }

public DateTime Début { get; set; }

public int Durée { get; set; }

public DateTime Fin { get; set; }

}

}

- lines 15-17: the current minimum numbers of threads in the pool are requested and displayed

- line 18: the minimum number of threads for blocking tasks is changed to 3

- lines 19-21: the new minimum is displayed

- lines 22-28: the same is done to set the maximum number of threads for blocking tasks to 5

- lines 30-33: 7 tasks are executed in a pool of 5 threads. 5 tasks should get a thread: the first 3 quickly, since 3 threads are always present, the other 2 after a wait of about 0.5 seconds. 2 tasks should wait for a thread to become available.

- line 32: the tasks execute the method Sleep of lines 40-54, passing it a parameter of type Data2, defined on lines 56-62.

- line 40: the method Sleep executed by the tasks

- line 42: retrieves the parameter passed to the method Sleep.

- line 43: the task identifies itself on the console

- lines 45-47: display the number of threads currently available. We want to see how it evolves.

- line 49: the task sleeps for a few seconds (blocking task).

- line 52: when it wakes up, the task displays some information about itself.

The results are as follows.

For the min and max numbers of threads in the pool:

For the execution of the 7 tasks:

- lines 1-6: the first 3 tasks start one after the other. Each immediately finds an available thread (MinThreads=3) and then goes to sleep.

- lines 7-9: for tasks 3 and 4, it takes a little longer. For each of them there was no free thread and one had to be created. This mechanism is possible up to 5 threads (MaxThreads=5).

- line 10: no more threads are available: tasks 5 and 6 will have to wait.

- lines 11-12: task 0 ends. Task 5 takes its thread.

- lines 13-14: task 1 ends. Task 6 takes its thread.

- lines 17-21: the tasks end one after the other.

10.8. The BackgroundWorker class

10.8.1. Example 1

The class BackgroundWorker belongs to the [System.ComponentModel] namespace. It is used in much the same way as a thread, but has some special features that can make it more attractive than the [Thread] class in certain cases:

- it emits the following events:

- DoWork : a thread has requested the execution of the BackgroundWorker

- ProgressChanged : the BackgroundWorker object has called its method ReportProgress, which is used to report a percentage of completion.

- RunWorkerCompleted : the BackgroundWorker object has finished its work. It may have finished normally, or through a cancellation or an exception.

These events make the BackgroundWorker useful in graphical interfaces: a time-consuming task is entrusted to a BackgroundWorker, which can report its progress with the event ProgressChanged and its end with the event RunWorkerCompleted. The work to be done by the BackgroundWorker is performed by a method associated with the event DoWork.

- it is possible to request its cancellation. In a graphical interface, a long task can thus be cancelled by the user.

- the threads used by BackgroundWorker objects belong to a pool and are recycled as needed. An application that needs a BackgroundWorker gets from the pool an existing but unused thread. Recycling threads in this way, rather than creating a new thread each time, improves performance.
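The three events can be seen working together in a small console sketch. It is only an illustration: the class ProgressDemo is hypothetical, CountdownEvent assumes .NET 4 or later, and it is assumed that, in a console application without a graphical interface, the handlers run on pool threads in no guaranteed order, which is why the sum is updated with Interlocked.

```csharp
using System;
using System.ComponentModel;
using System.Threading;

static class ProgressDemo {
    // Runs a worker that reports progress 'steps' times and returns the sum of the percentages received.
    public static int Run(int steps) {
        int sum = 0;
        // one signal per progress report, plus one for the end of the work
        CountdownEvent done = new CountdownEvent(steps + 1);
        BackgroundWorker worker = new BackgroundWorker();
        worker.WorkerReportsProgress = true; // required before calling ReportProgress
        worker.DoWork += (sender, e) => {
            for (int i = 1; i <= steps; i++) {
                Thread.Sleep(10); // simulated work
                ((BackgroundWorker)sender).ReportProgress(i * 100 / steps);
            }
        };
        worker.ProgressChanged += (sender, e) => {
            Interlocked.Add(ref sum, e.ProgressPercentage);
            done.Signal();
        };
        worker.RunWorkerCompleted += (sender, e) => done.Signal();
        worker.RunWorkerAsync();
        // wait until every handler has run
        done.Wait();
        return sum;
    }

    static void Main() {
        Console.WriteLine(Run(4)); // 25 + 50 + 75 + 100 = 250
    }
}
```

In a graphical application, the same handlers would typically update a progress bar instead of a counter.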

We apply this tool to the earlier readers/writers application, in the version where access to the counter is unordered:

using System;

using System.Threading;

using System.ComponentModel;

namespace Chap8 {

class Program2 {

// use of reader and writer threads

// illustrates the simultaneous use of shared resources and synchronization

// class variables

const int nbThreads = 2; // total number of threads

static int nbLecteursTerminés = 0; // number of terminated threads

static int[] data = new int[5]; // shared array between reader and writer threads

static object appli; // synchronizes access to number of completed threads

static Random objRandom = new Random(DateTime.Now.Second); // a random number generator

static AutoResetEvent peutLire; // indicates that the contents of the table can be read

static AutoResetEvent peutEcrire; // points out that we can write in the table

static AutoResetEvent finLecteurs; // signals the end of readers

// Main

public static void Main(string[] args) {

// give the thread a name

Thread.CurrentThread.Name = "Main";

// flag initialization

peutLire = new AutoResetEvent(false); // cannot be read yet

peutEcrire = new AutoResetEvent(true); // we can already write

finLecteurs = new AutoResetEvent(false); // application not completed

// synchronizes access to terminated thread counter

appli = new object();

// creation of reader threads

MyBackgroundWorker[] lecteurs = new MyBackgroundWorker[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

lecteurs[i] = new MyBackgroundWorker();

lecteurs[i].Numéro = "L" + i;

lecteurs[i].DoWork += Lire;

lecteurs[i].RunWorkerCompleted += EndLecteur;

// launch

lecteurs[i].RunWorkerAsync();

}

// creating writer threads

MyBackgroundWorker[] écrivains = new MyBackgroundWorker[nbThreads];

for (int i = 0; i < nbThreads; i++) {

// creation

écrivains[i] = new MyBackgroundWorker();

écrivains[i].Numéro = "E" + i;

écrivains[i].DoWork += Ecrire;

// launch

écrivains[i].RunWorkerAsync();

}

// wait for all threads to finish

finLecteurs.WaitOne();

// end of Main

Console.WriteLine("Fin de Main...");

}

public static void EndLecteur(object sender, RunWorkerCompletedEventArgs infos) {

...

}

// read the contents of the table

public static void Lire(object sender, DoWorkEventArgs infos) {

...

}

// write in the table

public static void Ecrire(object sender, DoWorkEventArgs infos) {

...

}

}

// thread

internal class MyBackgroundWorker : BackgroundWorker {

// miscellaneous information

public string Numéro { get; set; }

}

}

We detail only the changes:

- the class Thread is replaced by the class MyBackgroundWorker of lines 79-82. The class BackgroundWorker has been derived in order to give the thread a number. We could have done things differently by passing to the method RunWorkerAsync, on lines 43 and 54, an object containing the thread number.

- line 58: the method Main ends after all reader threads have done their job. To achieve this, the counter nbLecteursTerminés of line 12 counts the number of reader threads that have finished their work. This counter is incremented by the method EndLecteur of lines 63-65, which is executed each time a reader thread ends. It is this method that sets the AutoResetEvent finLecteurs of line 18, on which the method Main waits on line 59.

- line 16: because several reader threads may want to increment the counter nbLecteursTerminés at the same time, exclusive access to it is ensured by the synchronization object appli. This case is unlikely, but theoretically possible.

- lines 35-44: creation of the reader threads

- line 38: creation of a thread of type MyBackgroundWorker

- line 39: it is given a number

- line 40: it is assigned the method Lire to execute

- line 41: the method EndLecteur will be executed when the thread ends

- line 43: the thread is launched

- lines 47-55: creation of the writer threads

- line 50: creation of a thread of type MyBackgroundWorker

- line 51: it is given a number

- line 52: it is assigned the method Ecrire to execute

- line 54: the thread is launched

The methods Lire and Ecrire remain unchanged. The method EndLecteur is executed at the end of each reader thread. Its code is as follows:

public static void EndLecteur(object sender, RunWorkerCompletedEventArgs infos) {

// increment the number of finished readers

lock (appli) {

nbLecteursTerminés++;

if (nbLecteursTerminés == nbThreads)

finLecteurs.Set();

}

}

The role of the method EndLecteur is to notify the method Main that all the readers have done their job.

- line 4: the counter nbLecteursTerminés is incremented.

- lines 5-6: if all the readers have done their job, the event finLecteurs is set to true to notify the method Main, which is waiting for this event.

- because the method EndLecteur is executed by several threads, the preceding critical section is protected by the lock of line 3.

Execution gives results similar to those of the threaded version.

10.8.2. Example 2

The following code illustrates other points of the class BackgroundWorker :

- the ability to cancel the task

- an exception thrown in the task is reported

- passing an input/output parameter to the task

using System;

using System.Threading;

using System.ComponentModel;

namespace Chap8 {

class Program3 {

// threads

static BackgroundWorker[] tâches = new BackgroundWorker[5];

public static void Main() {

// init Current thread

Thread main = Thread.CurrentThread;

// name the Thread

main.Name = "Main";

// thread creation

for (int i = 0; i < tâches.Length; i++) {

// create thread n° i

tâches[i] = new BackgroundWorker();

// initialize it

tâches[i].DoWork += Sleep;

tâches[i].RunWorkerCompleted += End;

tâches[i].WorkerSupportsCancellation = true;

// launch it

tâches[i].RunWorkerAsync(new Data { Numéro = i, Début = DateTime.Now, Durée = i + 1 });

}

// cancel the last thread

tâches[4].CancelAsync();

// end of Main

Console.WriteLine("Fin du thread {0}, tapez [entrée] pour terminer...", main.Name);

Console.ReadLine();

return;

}

public static void Sleep(object sender, DoWorkEventArgs infos) {

...

}

public static void End(object sender, RunWorkerCompletedEventArgs infos) {

...

}

internal class Data {

// miscellaneous information

public int Numéro { get; set; }

public DateTime Début { get; set; }

public int Durée { get; set; }

public DateTime Fin { get; set; }

}

}

}

- line 9: the array of BackgroundWorker tasks

- lines 18-27: creation of the threads

- line 20: creation of thread no. i

- line 22: the thread will execute the method Sleep of lines 39-41

- line 23: the method End of lines 43-45 will be executed when the thread ends

- line 24: the thread can be cancelled

- line 26: the thread is started with a parameter of type [Data], defined on lines 49-52. This object has the following fields:

- Numéro (input): thread number

- Début (input): thread start time

- Durée (input): duration in seconds of the Sleep method

- Fin (output): time at which the thread's execution ended

- line 29: cancellation of thread no. 4 is requested

All the threads execute the following Sleep method:

public static void Sleep(object sender, DoWorkEventArgs infos) {

// we use the infos parameter

Data data = (Data)infos.Argument;

// exception for task no. 3

if (data.Numéro == 3) {

throw new Exception("test....");

}

// sleep for Durée seconds, waking up every second to check for cancellation

for (int i = 1; i <= data.Durée && !tâches[data.Numéro].CancellationPending; i++) {

// wait 1 second

Thread.Sleep(1000);

}

// end of execution

data.Fin = DateTime.Now;

// initialize the result

infos.Result = data;

infos.Cancel = tâches[data.Numéro].CancellationPending;

}

- line 1: the method Sleep has the standard event handler signature. It receives two parameters:

- sender: the event sender, here the BackgroundWorker that executes the method

- infos: of type DoWorkEventArgs, which provides information on the event DoWork. This parameter is used both to pass information to the thread and to retrieve its results.

- line 3: the parameter passed to the method RunWorkerAsync of the task is found in infos.Argument.

- lines 5-7: an exception is thrown for task no. 3

- lines 9-12: the thread "sleeps" for Durée seconds in one-second increments, so that the cancellation test on line 9 is run regularly. This simulates a long-running job during which the thread would regularly check for a cancellation request. To indicate that it has been cancelled, the thread must set the property infos.Cancel to true (line 17).

- line 16: the thread can return a result to the thread that launched it. It places this result in infos.Result.

Once completed, the threads execute the following End method:

public static void End(object sender, RunWorkerCompletedEventArgs infos) {

// the infos parameter is used to display the result of execution

// exception?

if (infos.Error != null) {

Console.WriteLine("Le thread {1} a rencontré l'erreur suivante : {0}", infos.Error.Message, sender);

} else

if (!infos.Cancelled) {

Data data = (Data)infos.Result;

Console.WriteLine("Thread {0} terminé : début {1:hh:mm:ss}, durée programmée {2} s, fin {3:hh:mm:ss}, durée effective {4}",

data.Numéro, data.Début, data.Durée, data.Fin, (data.Fin - data.Début));

} else {

Console.WriteLine("Thread {0} annulé", sender);

}

}

- line 1: the method End has the standard event handler signature. It receives two parameters:

- sender: the event sender, here the BackgroundWorker that executed the task

- infos: of type RunWorkerCompletedEventArgs, which provides information on the event RunWorkerCompleted.

- line 4: the property infos.Error, of type Exception, is set only if an exception has occurred.

- line 7: the Boolean property infos.Cancelled has the value true if the thread has been cancelled.

- line 8: if there has been no exception or cancellation, then infos.Result is the result of the executed thread. Reading this result when the thread has been cancelled or has thrown an exception itself raises an exception. Thus, on lines 5 and 13, we are unable to display the number of the thread that has been cancelled or has thrown an exception, as this number is in infos.Result. This problem can be circumvented by deriving the class BackgroundWorker to store the information to be exchanged between the calling thread and the called thread, as in the previous example. We then use the argument sender, which represents the BackgroundWorker, instead of the argument infos.
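The workaround of deriving BackgroundWorker can be sketched as follows. This is only an illustration under stated assumptions: the class NumberedWorker and the helper RunFailingWorker are hypothetical names, not part of the example above. The completion handler reads the task number from sender, so it never needs infos.Result when the task has failed.

```csharp
using System;
using System.ComponentModel;
using System.Threading;

// Hypothetical subclass: the worker itself carries the task number
class NumberedWorker : BackgroundWorker {
    public int Numéro { get; set; }
}

static class NumberedWorkerDemo {
    // Runs one worker whose task throws, and returns the message
    // built by the completion handler
    public static string RunFailingWorker(int numéro) {
        string message = null;
        using (var done = new ManualResetEventSlim(false)) {
            var worker = new NumberedWorker { Numéro = numéro };
            worker.DoWork += (sender, infos) => {
                throw new Exception("test....");
            };
            worker.RunWorkerCompleted += (sender, infos) => {
                // infos.Result must not be read here: the task failed.
                // The number comes from the worker instead.
                var w = (NumberedWorker)sender;
                message = string.Format("Thread {0}: {1}", w.Numéro, infos.Error.Message);
                done.Set();
            };
            worker.RunWorkerAsync();
            // wait until the completion handler has run
            done.Wait();
        }
        return message;
    }

    static void Main() {
        Console.WriteLine(RunFailingWorker(3));
    }
}
```

The same idea applies to the cancelled case: the handler can test infos.Cancelled and still display the Numéro property of the worker passed in sender.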

The results are as follows:

10.9. Thread-local data

10.9.1. The principle

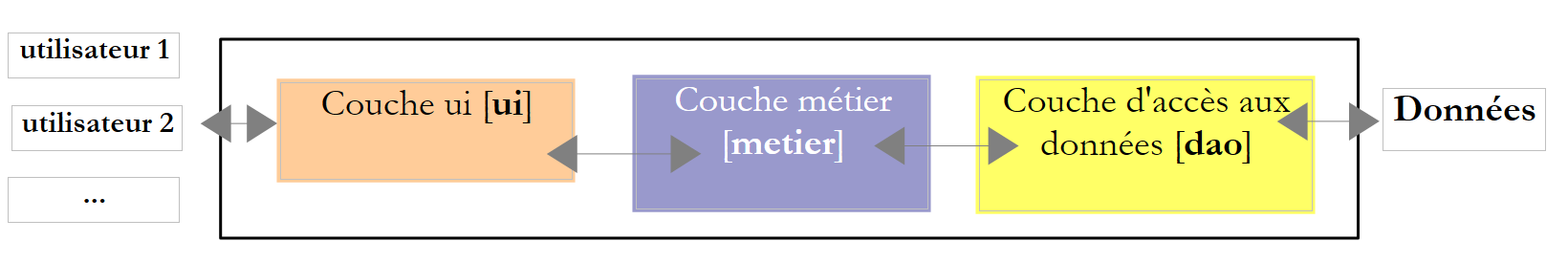

Consider a three-layer application:


Let's assume the application is multi-user, a web application for example. Each user is served by a dedicated thread. The life of the thread is as follows:

- the thread is created or requested from a thread pool to satisfy a user request

- if this request requires data, the thread will execute a method from the [ui] layer, which will call a method from the [metier] layer, which in turn will call a method from the [dao] layer.

- the thread returns the response to the user. It then disappears or is recycled into a thread pool.

In step 2, it may be useful for the thread to have its own data, i.e. data not shared with other threads. This data could, for example, belong to the particular user the thread is serving. It could then be used in the various layers [ui, metier, dao].

The class Thread allows this scenario thanks to a kind of private dictionary whose keys are of type LocalDataStoreSlot. It provides static methods to:

- create an entry in the thread's private dictionary for the key name

- associate the value data with the key name in the thread's private dictionary

- retrieve the value associated with the key name from the thread's private dictionary

A usage model could be as follows:

- to create a (key, value) pair associated with the current thread:

- to retrieve the value associated with the key:
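A minimal sketch of this usage model, assuming the standard static methods Thread.GetNamedDataSlot, Thread.SetData and Thread.GetData of the .NET class Thread. The key "user" and the helper names Store, Load and LoadOnNewThread are hypothetical and chosen only for the illustration.

```csharp
using System;
using System.Threading;

static class SlotDemo {
    // Stores a value under the key "user" in the calling thread's private data
    public static void Store(string value) {
        // GetNamedDataSlot returns the slot for this key, allocating it if needed
        LocalDataStoreSlot slot = Thread.GetNamedDataSlot("user");
        Thread.SetData(slot, value);
    }

    // Reads the value stored under the key "user" by the calling thread
    public static string Load() {
        LocalDataStoreSlot slot = Thread.GetNamedDataSlot("user");
        return (string)Thread.GetData(slot);
    }

    // Reads the same key from a freshly created thread
    public static string LoadOnNewThread() {
        string seen = null;
        var t = new Thread(() => { seen = Load(); });
        t.Start();
        t.Join();
        return seen;
    }

    static void Main() {
        Store("alice");
        Console.WriteLine(Load());                             // alice
        Console.WriteLine(LoadOnNewThread() ?? "(nothing)");   // (nothing)
    }
}
```

The second line of output shows that the value is private to the thread that stored it: another thread using the same key finds an empty slot.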

10.9.2. Application of the principle

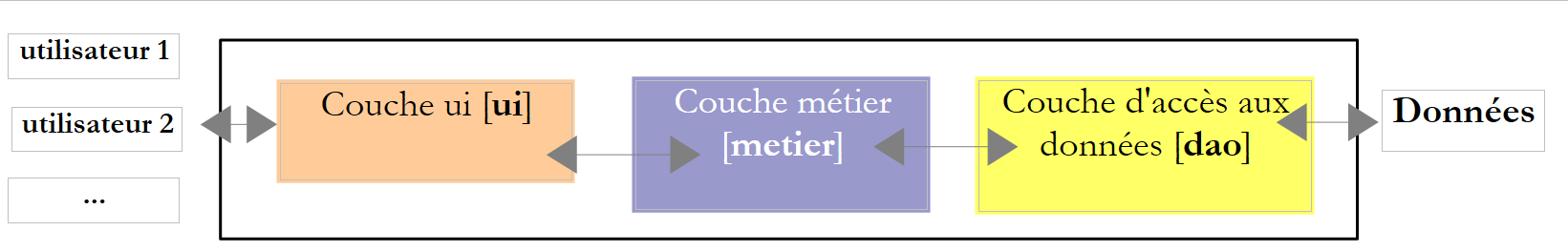

Consider the following three-layer application:


Let's assume that the [dao] layer manages an articles database and that its interface is initially as follows:

using System.Collections.Generic;

namespace Chap8 {

public interface IDao {

int InsertArticle(Article article);

List<Article> GetAllArticles();

void DeleteAllArticles();

}

}

- line 5: to insert an article in the database

- line 6: to retrieve all articles in the database

- line 7: to delete all articles from the database

Later, we need a method to insert an array of articles using a transaction, because we want to operate in an all-or-nothing mode: either all articles are inserted, or none at all. We can then modify the interface to integrate this new requirement:

using System.Collections.Generic;

namespace Chap8 {

public interface IDao {

int InsertArticle(Article article);

void InsertArticles(Article[] articles);

List<Article> GetAllArticles();

void DeleteAllArticles();

}

}

- line 6: to add an array of articles to the database

Later, for another application, the need arises to delete a set of articles stored in a list, again within a transaction. As we can see, the [dao] layer will keep growing to meet different business needs. We can take another route:

- put only elementary operations in the [dao] layer: InsertArticle, DeleteArticle, UpdateArticle, SelectArticle, SelectArticles

- move to the [metier] layer the simultaneous updating of several articles. These updates would use the elementary operations of the [dao] layer.

The advantage of this solution is that the same [dao] layer can be used without change with different [metier] layers. It does, however, introduce a difficulty in managing the transaction that groups together the updates to be made atomically:

- the transaction must be initiated by the [metier] layer before it calls the methods of the [dao] layer

- the methods of the [dao] layer must be aware of the existence of the transaction in order to take part in it if it exists

- the transaction must be terminated by the [metier] layer.

To ensure that the methods of the [dao] layer are aware of any current transaction, we could add the transaction as a parameter to each method of the [dao] layer. This parameter would then appear in the signatures of the interface's methods, tying the interface to a specific kind of data source: the database. Thread-local data provides a more elegant solution: the [metier] layer puts the transaction in the thread's local data, and the [dao] layer fetches it from there. The signatures of the [dao] layer's methods need not change.
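The exchange between the two layers can be sketched as follows. This is not the project's implementation, only an illustration under stated assumptions: FakeTransaction is a hypothetical stand-in for a real database transaction, articles are reduced to their names, the slot name "transaction" is arbitrary, and the classes DaoSketch and MetierSketch are invented for the example.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

// Hypothetical stand-in for a database transaction: it only buffers names
class FakeTransaction {
    public List<string> Pending = new List<string>();
}

// [dao] side: the method signature carries no transaction parameter
static class DaoSketch {
    public static List<string> Table = new List<string>();

    public static void InsertArticle(string nom) {
        // look for a transaction in the current thread's local data
        var tx = (FakeTransaction)Thread.GetData(Thread.GetNamedDataSlot("transaction"));
        if (tx != null) tx.Pending.Add(nom);   // take part in the transaction
        else Table.Add(nom);                   // no transaction: write at once
    }
}

// [metier] side: starts and ends the transaction
static class MetierSketch {
    public static void InsertArticlesInTransaction(string[] noms) {
        LocalDataStoreSlot slot = Thread.GetNamedDataSlot("transaction");
        var tx = new FakeTransaction();
        Thread.SetData(slot, tx);
        try {
            foreach (string nom in noms) {
                if (string.IsNullOrEmpty(nom)) throw new ArgumentException("invalid article");
                DaoSketch.InsertArticle(nom);
            }
            // "commit": all inserts succeeded
            DaoSketch.Table.AddRange(tx.Pending);
        } finally {
            // always clear the slot: a pooled thread may be reused later
            Thread.SetData(slot, null);
        }
    }
}

static class Program {
    static void Main() {
        MetierSketch.InsertArticlesInTransaction(new[] { "a", "b" });
        try {
            MetierSketch.InsertArticlesInTransaction(new[] { "c", "" });
        } catch (ArgumentException) {
            // the whole second batch was discarded, "c" included
        }
        Console.WriteLine(DaoSketch.Table.Count);  // 2
    }
}
```

Clearing the slot in the finally block matters when threads come from a pool: otherwise a later request served by the same thread could find a stale transaction.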

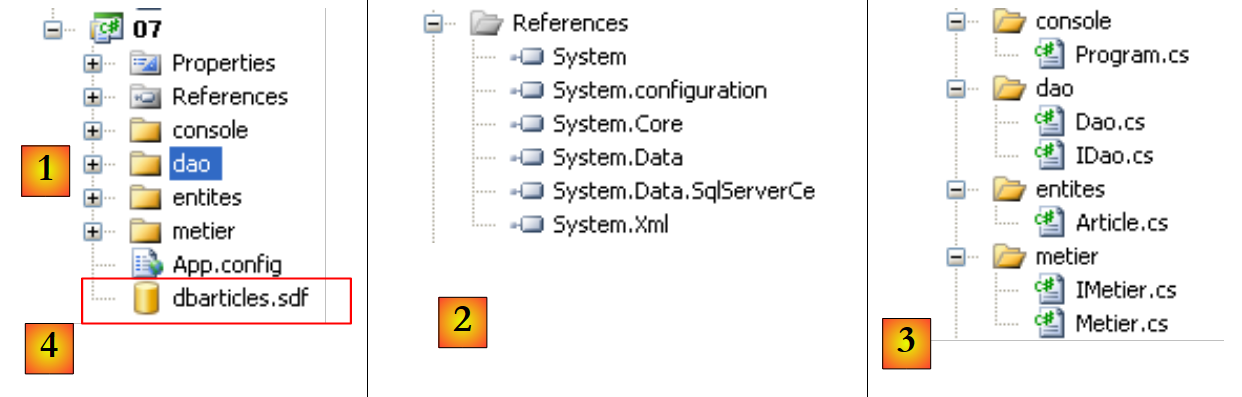

We implement this solution with the following Visual Studio project:


- in [1]: the solution as a whole

- in [2]: the references used. Because [4] is a SQL Server Compact database, the reference [System.Data.SqlServerCe] is required.

- in [3]: the different layers of the application.

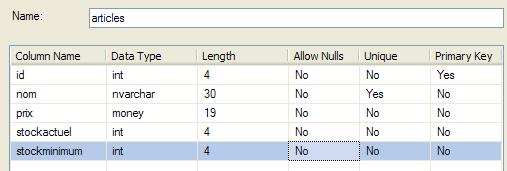

Database [4] is the SQL Server Compact database already used in the previous chapter, in particular in paragraph 9.3.1.


The Article class

A row from the previous table [articles] is encapsulated in an object of type Article :

namespace Chap8 {

public class Article {

// properties

public int Id { get; set; }

public string Nom { get; set; }

public decimal Prix { get; set; }

public int StockActuel { get; set; }

public int StockMinimum { get; set; }

// constructors

public Article() {

}

public Article(int id, string nom, decimal prix, int stockActuel, int stockMinimum) {

Id = id;

Nom = nom;

Prix = prix;

StockActuel = stockActuel;

StockMinimum = stockMinimum;

}

// identity

public override string ToString() {

return string.Format("[{0},{1},{2},{3},{4}]", Id, Nom, Prix, StockActuel, StockMinimum);

}

}

}

Interface of the [dao] layer

The interface IDao of the [dao] layer will be as follows:

using System.Collections.Generic;

namespace Chap8 {

public interface IDao {

int InsertArticle(Article article);

List<Article> GetAllArticles();

void DeleteAllArticles();

}

}

- line 5: to insert an article in table [articles]

- line 6: to put all the rows of table [articles] in a list of Article objects

- line 7: to delete all rows of table [articles]

Interface of the [metier] layer

The interface IMetier of the [metier] layer will be as follows:

using System.Collections.Generic;

namespace Chap8 {

interface IMetier {

void InsertArticlesInTransaction(Article[] articles);

void InsertArticlesOutOfTransaction(Article[] articles);

List<Article> GetAllArticles();

void DeleteAllArticles();

}

}

- line 5: to insert, within a transaction, a set of articles

- line 6: same but without transaction

- line 7: to obtain a list of all articles