2. The three problems studied and the results

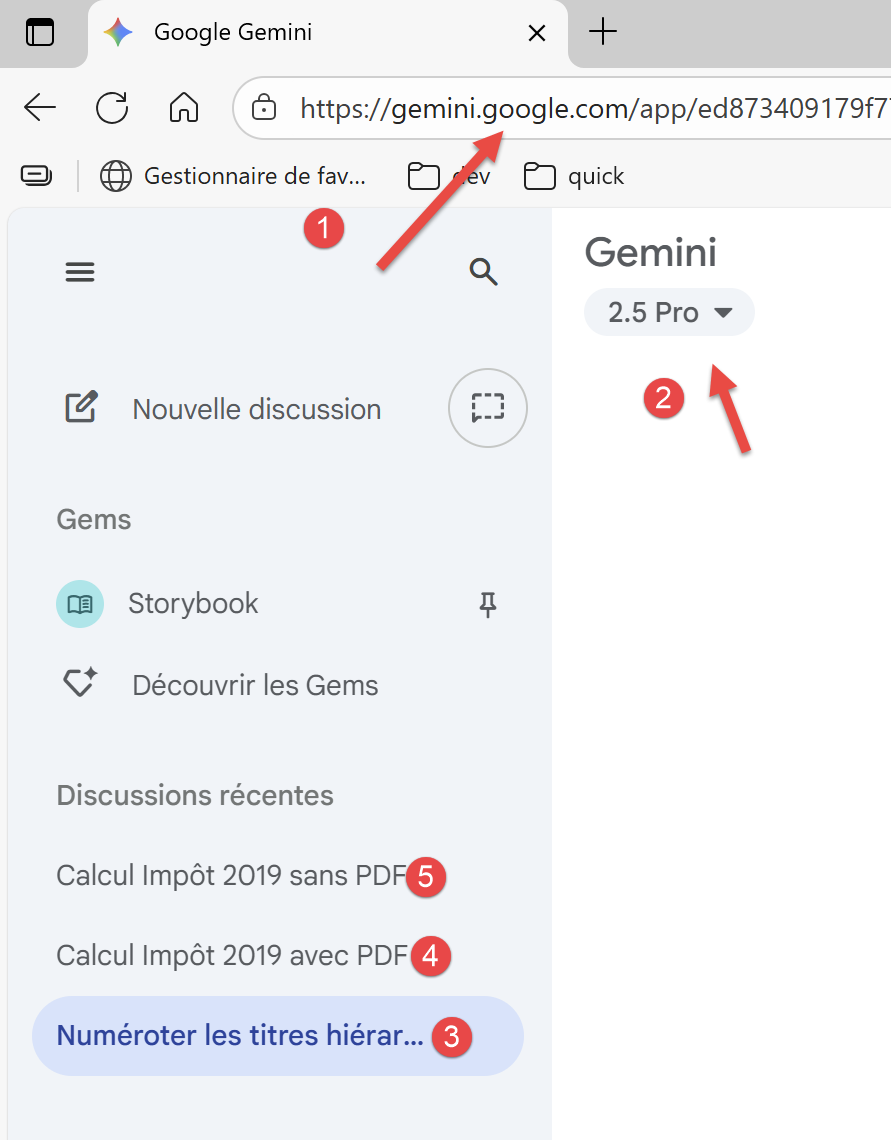

We ask the AIs to solve three problems, from the simplest to the most complex. Here is a screenshot from Google Gemini:

- In [1], the Gemini URL;

- In [2], the version of Gemini used;

- In [3-5], the three problems posed to Gemini.

2.1. Problem 1

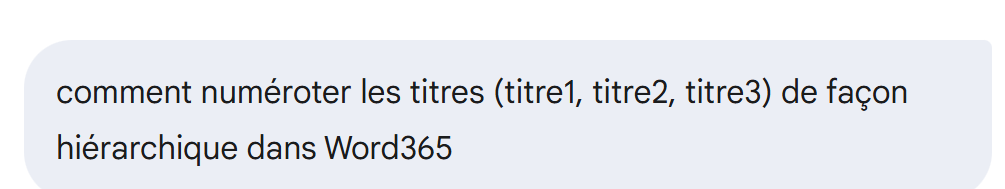

Problem 1 is a simple question:

All the AIs tested answer this question correctly.

2.2. Problem 2

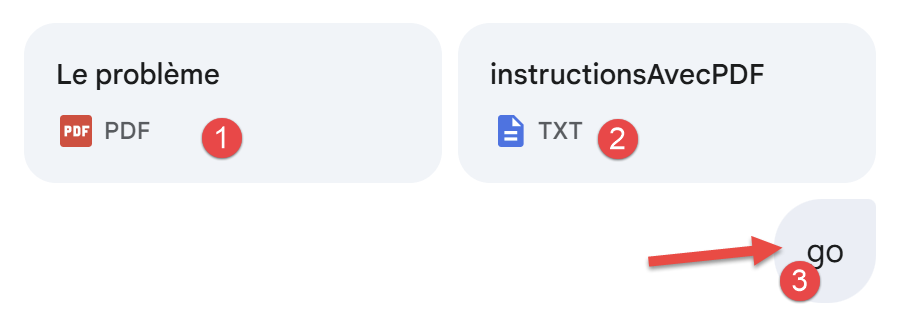

Problem 2 is as follows (screenshot from Gemini):

- In [1], a PDF explains how the 2019 tax on 2018 income is calculated. We'll come back to this;

- In [2], we give Gemini precise instructions on what we want: a clean Python script that solves the problem and validates the proposed solution with 11 unit tests;

- In [3], we run Gemini, which must then write the code.

This is exactly the same scenario as a university lab assignment.
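To make the shape of the requested deliverable concrete, here is a minimal sketch of "a script that solves the problem plus unit tests that validate it", using Python's standard `unittest` module. The function name `compute_tax` and the brackets below are hypothetical, chosen purely for illustration: they are NOT the 2019 calculation rules from the PDF, and the real exercise uses 11 tests rather than the four shown here.

```python
import unittest

# HYPOTHETICAL brackets for illustration only: (upper bound, marginal rate).
# These are NOT the real 2019 rules described in the PDF.
BRACKETS = [
    (10_000, 0.00),
    (30_000, 0.10),
    (float("inf"), 0.30),
]


def compute_tax(taxable_income: float) -> int:
    """Progressive tax: each slice of income is taxed at its own bracket's rate."""
    if taxable_income < 0:
        raise ValueError("taxable income must be non-negative")
    tax = 0.0
    lower = 0.0
    for upper, rate in BRACKETS:
        if taxable_income <= lower:
            break  # no income left in this or higher brackets
        taxed_slice = min(taxable_income, upper) - lower
        tax += taxed_slice * rate
        lower = upper
    return round(tax)


class TestComputeTax(unittest.TestCase):
    """Each test pins an expected result, as the lab-style instructions require."""

    def test_below_first_threshold(self):
        self.assertEqual(compute_tax(8_000), 0)

    def test_second_bracket(self):
        # 10,000 of income falls in the 10% bracket -> 1,000
        self.assertEqual(compute_tax(20_000), 1_000)

    def test_top_bracket(self):
        # 20,000 at 10% + 20,000 at 30% = 2,000 + 6,000
        self.assertEqual(compute_tax(50_000), 8_000)

    def test_negative_income_rejected(self):
        with self.assertRaises(ValueError):
            compute_tax(-1)
```

Saved as a module, the tests run with `python -m unittest <module_name>`. Keeping the calculation in a pure function, separate from the test class, is what lets each expected value be checked independently.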

All the AIs tested solve this problem except MistralAI and Perplexity.

2.3. Problem 3

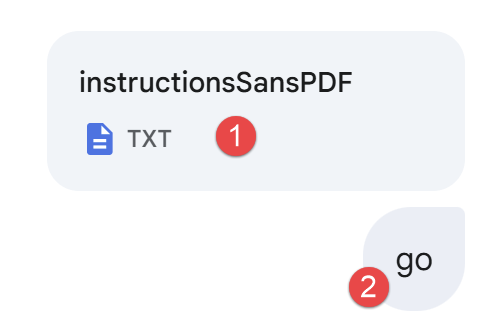

Still using a screenshot from Google Gemini, Problem 3 is as follows:

- In [1], we give the same instructions as before. However, since we do not provide the PDF containing the exact calculation rules, the AI has to search for these rules online;

- In [3], we launch the AI.

Only three AIs passed this test. In order of merit (strictly my personal opinion, of course):

- OpenAI’s ChatGPT;

- Grok by xAI;

- Google Gemini.

ClaudeAI and DeepSeek failed problem 3. MistralAI and Perplexity failed both problems 2 and 3.