6. Resolving the three issues with Grok

6.1. Introduction

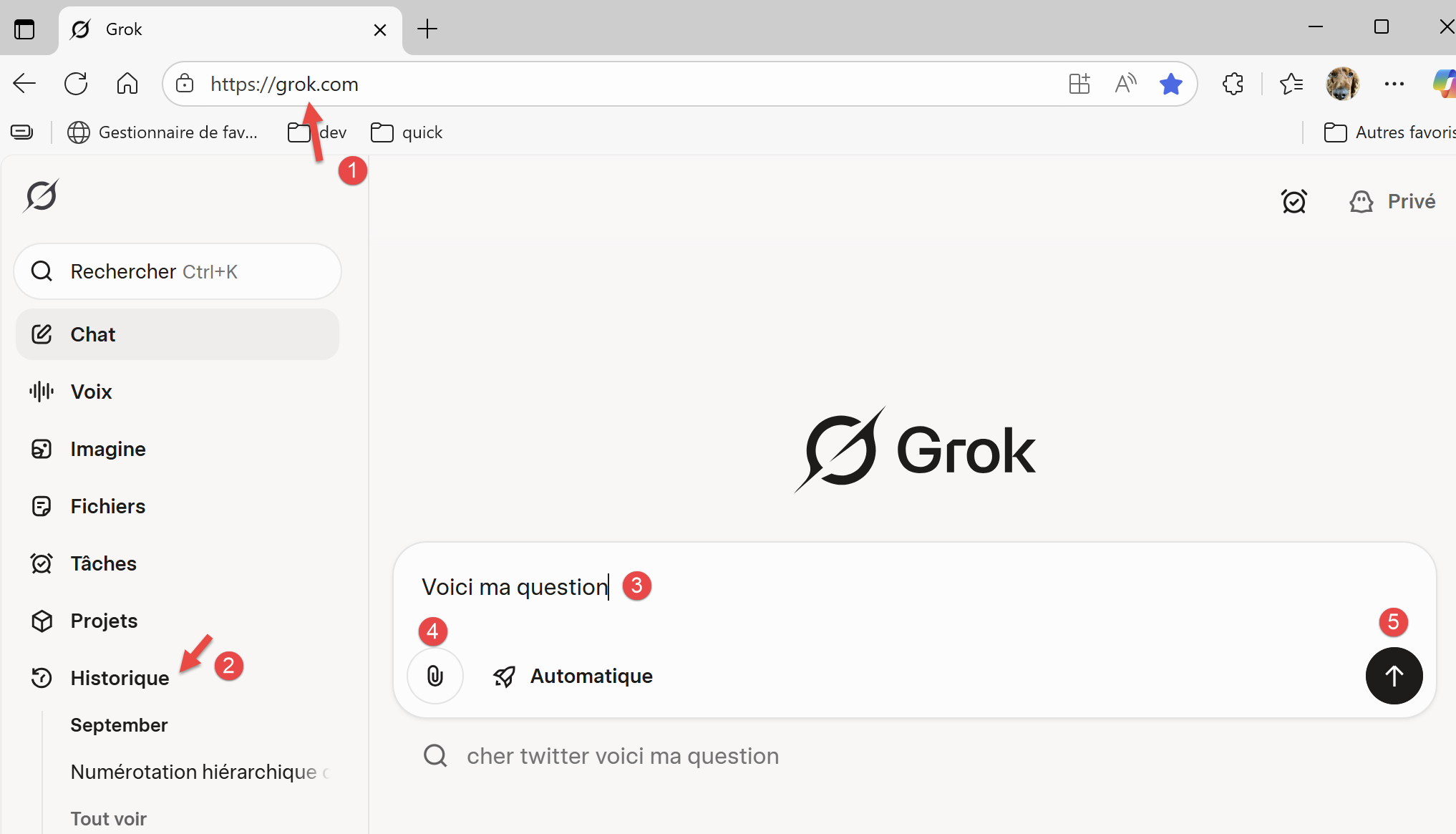

- In [1], the URL of Grok, the AI owned by xAI [https://x.ai/company];

- In [2], your conversation history. To access it, you need to create an account;

- In [3], you type your question;

- In [4], you can attach files;

- In [5], you submit the question to the AI.

Unlike with Gemini and ChatGPT, I haven’t encountered any limits on the number of questions, time, or number of attached files. That doesn’t mean such limits don’t exist.

6.2. Problem 1

Grok answers this question correctly.

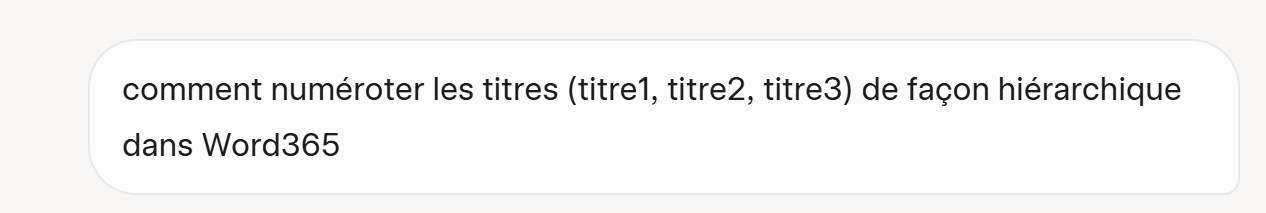

6.3. Problem 2

We ask Grok to calculate the tax using the PDF generated by ChatGPT and provide our instructions in a text file.

The text file is the same one used with the two previously tested AIs, but we’ve included the 25 tests validated by ChatGPT and Gemini. The PDF used is the one generated by ChatGPT:

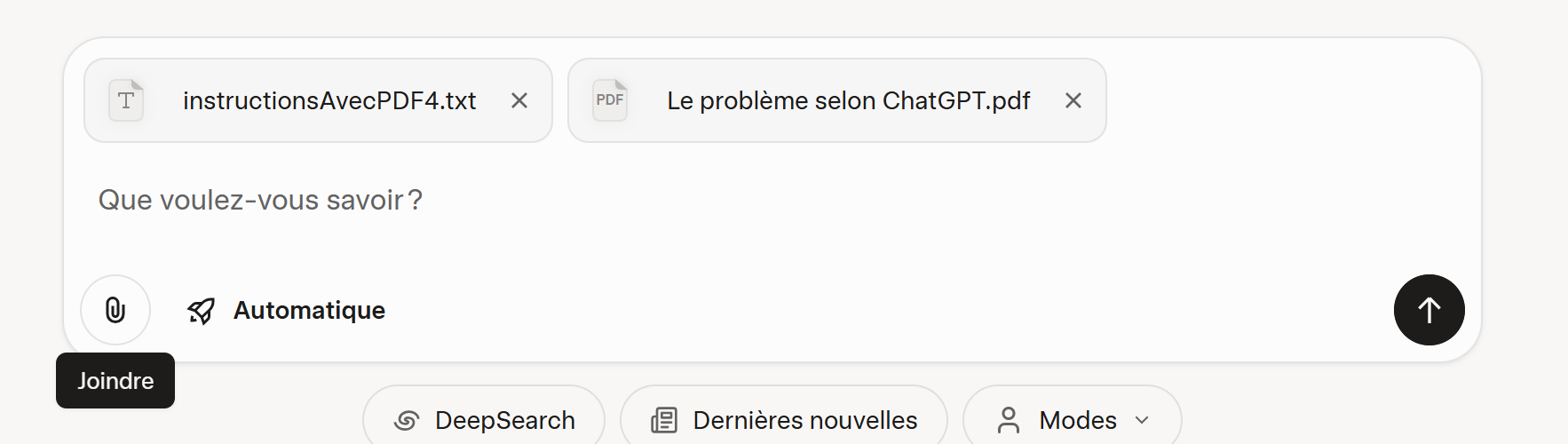

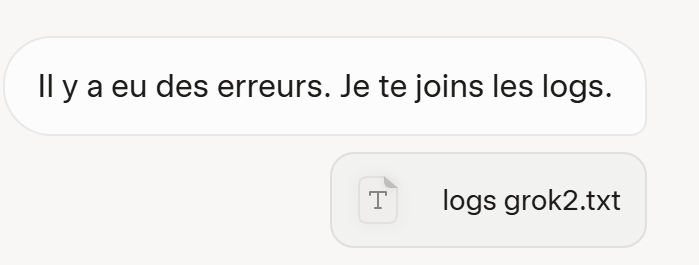

Grok then produces a very clean script, but when it is run in PyCharm, virtually none of the tests pass. I then give Grok the error logs:

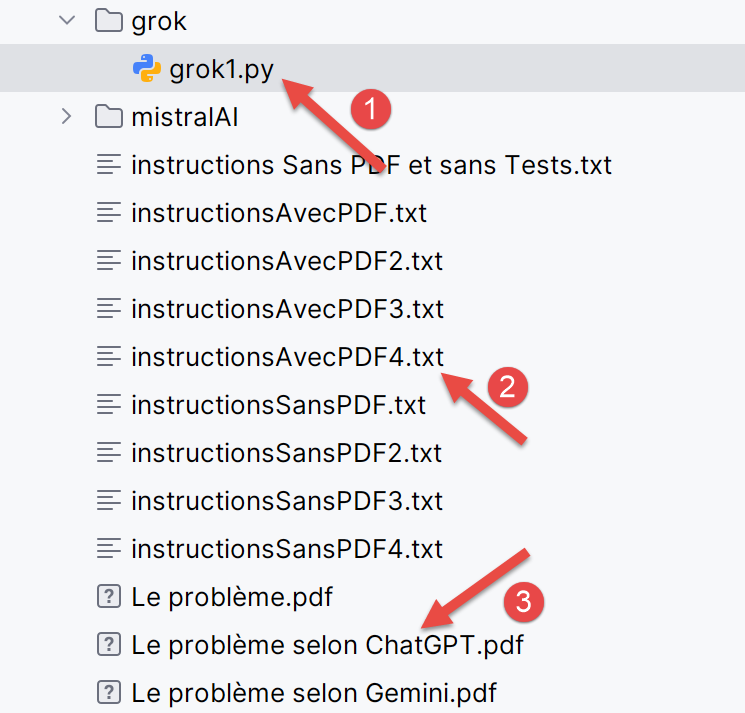

This time, Grok’s script passes all 25 tests. In [1-3], we show the generated [grok1] script along with the two files attached to the question.
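The feedback loop used here (run the tests, collect the failures, paste them back to the AI) can be sketched as follows. This is only a minimal illustration, not the script Grok generated: `run_cases`, `double`, and the sample cases are hypothetical placeholders, and the real tests would call the generated tax function with the 25 validated cases instead.

```python
from typing import Callable, Iterable


def run_cases(func: Callable[..., object],
              cases: Iterable[tuple[tuple, object]]) -> list[str]:
    """Run func on each (args, expected) pair; return one log line per failure."""
    failures = []
    for number, (args, expected) in enumerate(cases, start=1):
        try:
            actual = func(*args)
        except Exception as exc:
            # A crash is logged like any other failure so the AI can see it.
            failures.append(
                f"test {number}: args={args} raised {type(exc).__name__}: {exc}"
            )
            continue
        if actual != expected:
            failures.append(
                f"test {number}: args={args} expected={expected} got={actual}"
            )
    return failures


# Placeholder function under test (hypothetical, NOT the tax rules).
def double(x: int) -> int:
    return x * 2


# Placeholder cases; the third one is wrong on purpose to produce a log line.
cases = [((1,), 2), ((2,), 4), ((3,), 7)]

log = run_cases(double, cases)
print("\n".join(log) or "all tests passed")
```

The printed lines are the kind of text that can be copied back into the conversation. An empty list means every case passed.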

6.4. Problem 3

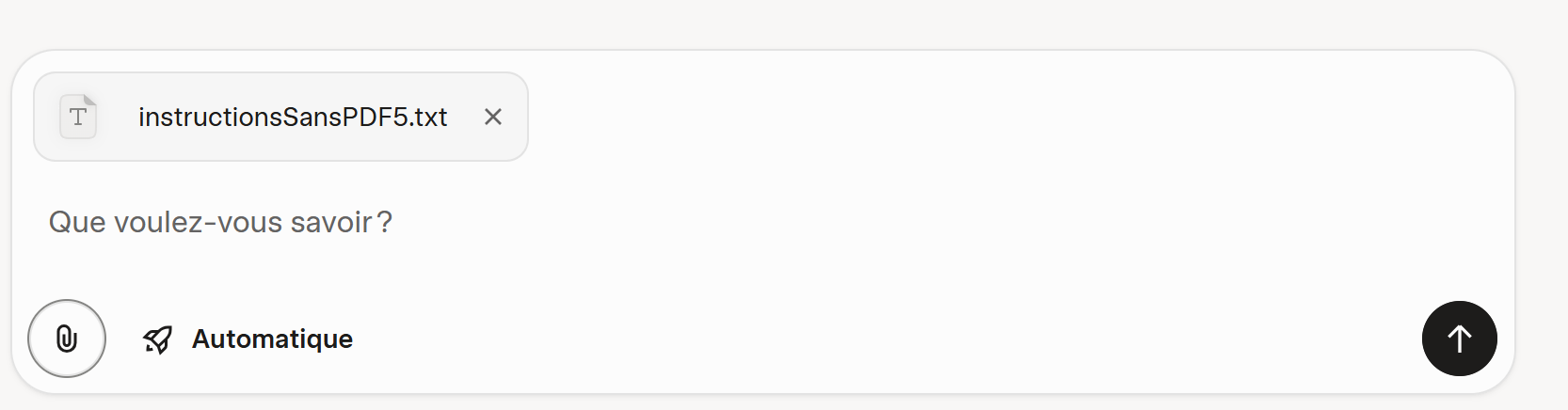

This time, no PDF is provided for the calculation rules. Grok will have to find them online. The text instructions [instructionsSansPDF5.txt] give it the same 25 tests to verify as before.

Grok almost succeeds on the first try: it generates a script that passes 24 of the 25 tests. We give it the resulting logs.

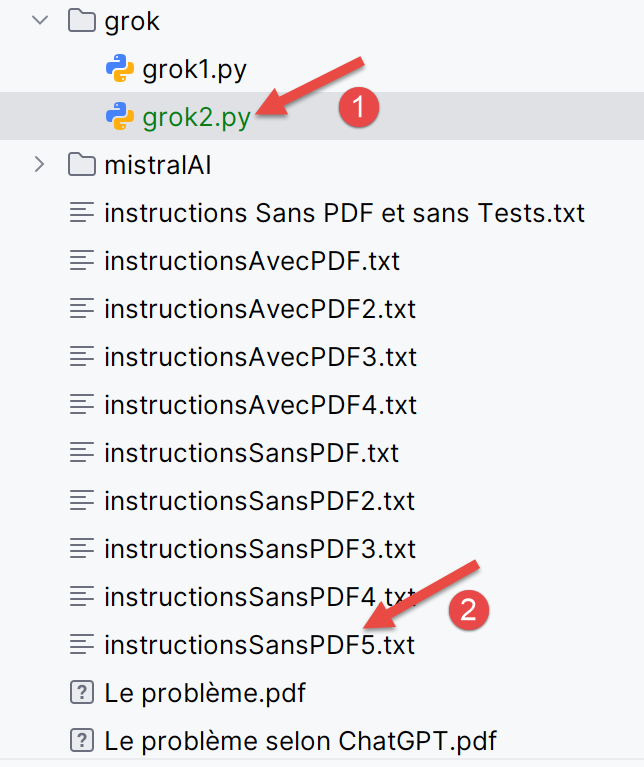

On the second try, the script passes all 25 tests. In [1], the script generated by Grok; in [2], the instructions to follow.

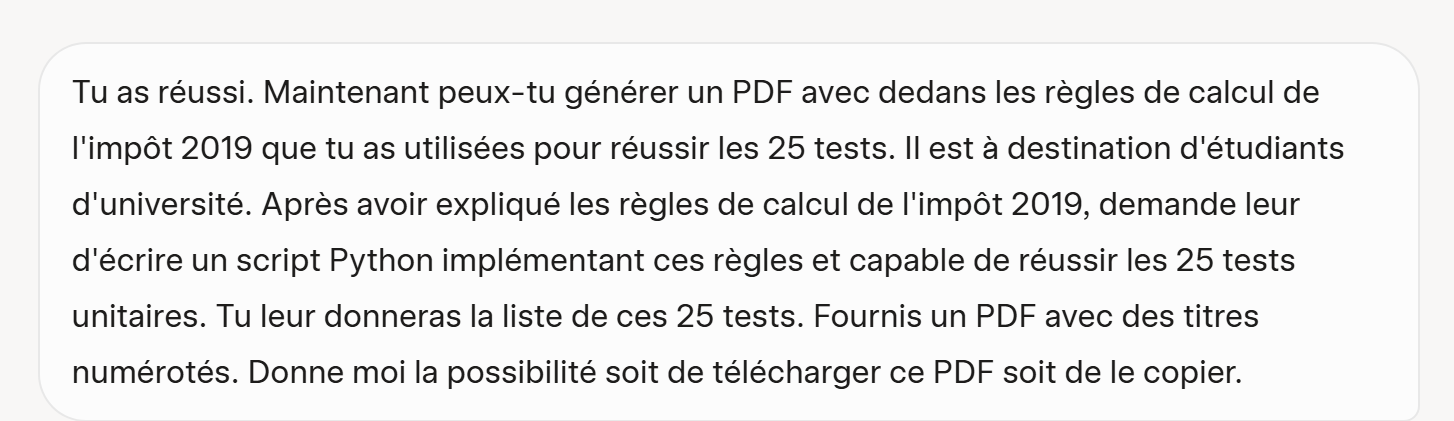

We now ask it to generate a PDF explaining the calculation rules it used to pass all 25 tests:

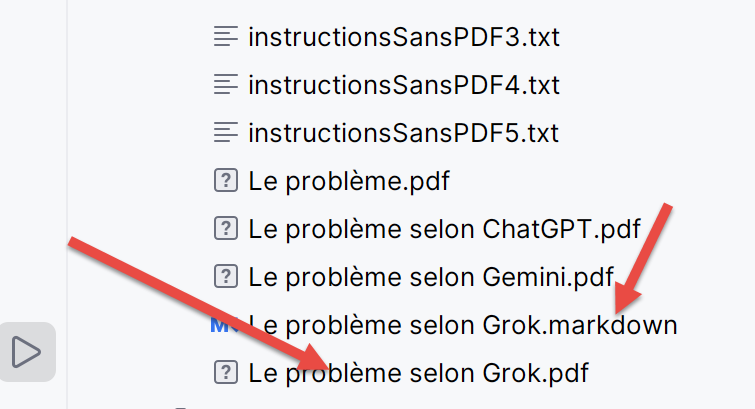

Grok generates a [Markdown] file rather than a PDF. I used a free tool to convert it to PDF. PyCharm can also read [Markdown] files directly:

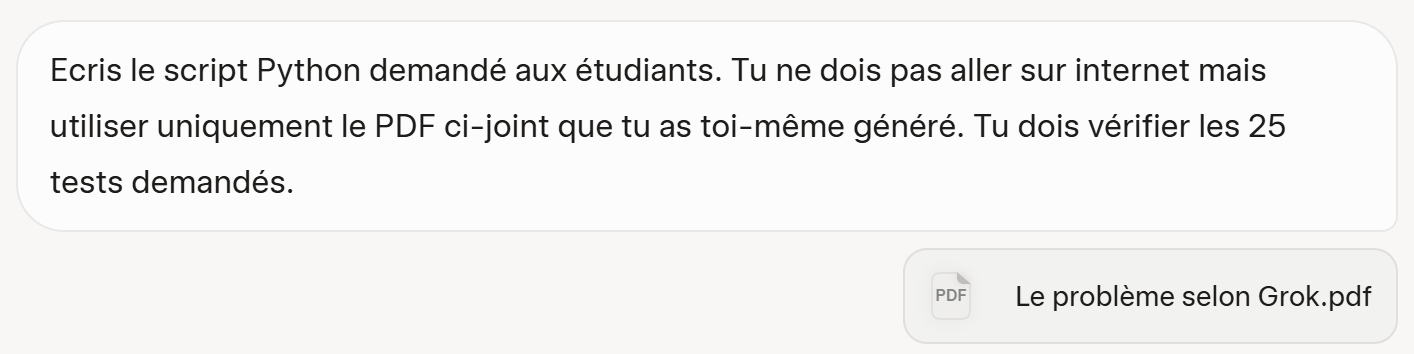

6.5. Problem 4

To validate the PDF generated earlier, we feed it to Grok.

The first version of its script is correct: it passes all 25 tests. That said, AIs don’t seem to be deterministic: ask the same question twice and the answers can diverge. That was the case here with Grok. On an earlier attempt, I had forgotten to tell it not to go online and to rely only on its PDF. It then produced an incorrect script. When I gave it the logs, I saw that it was going online to check things. In the question above, I explicitly asked it not to do that, and the script was correct on the first try. Overall, Grok performed well.