2. JPA entities

2.1. Example 1 - Object representation of a single table

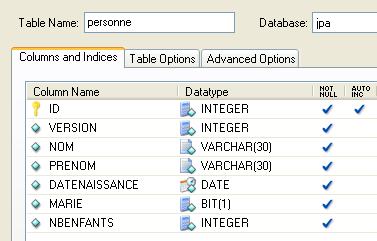

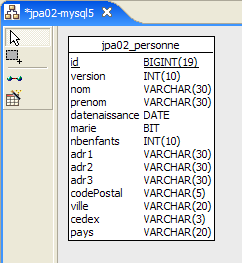

2.1.1. The [person] table

Consider a database with a single [person] table whose purpose is to store some information about individuals:

|

primary key of the table | |

version of the row in the table. Every time the person is modified, their version number is incremented. | |

person's last name | |

first name | |

her date of birth | |

integer 0 (unmarried) or 1 (married) | |

number of children |

2.1.2. The [Person] entity

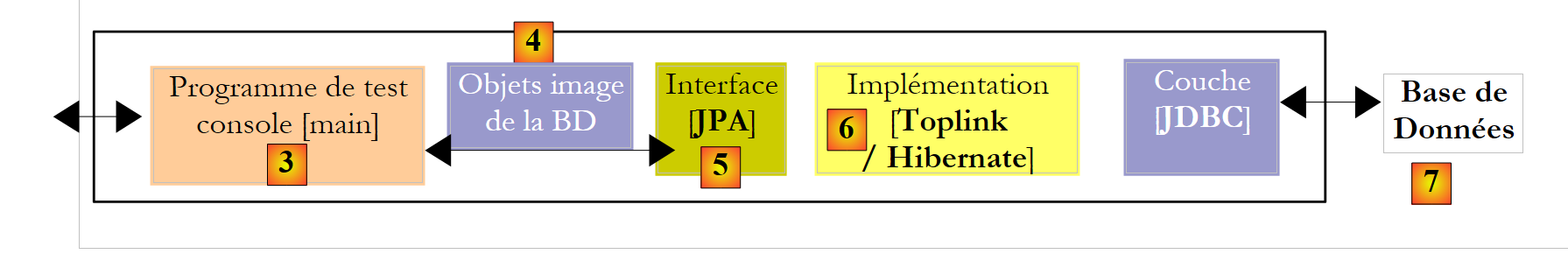

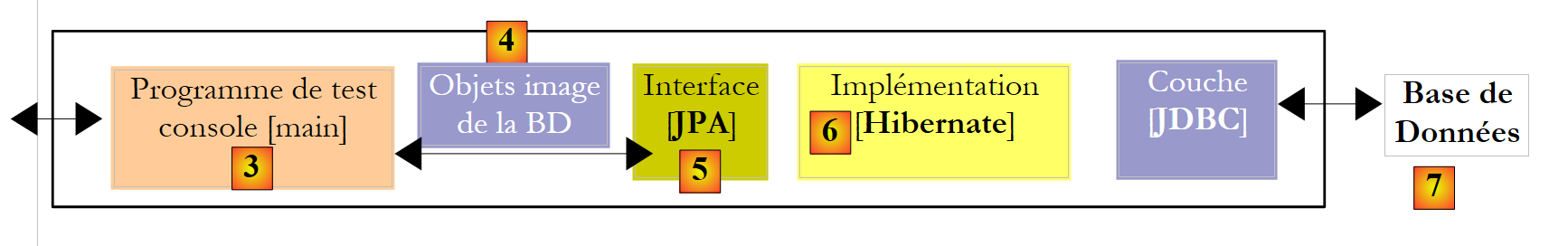

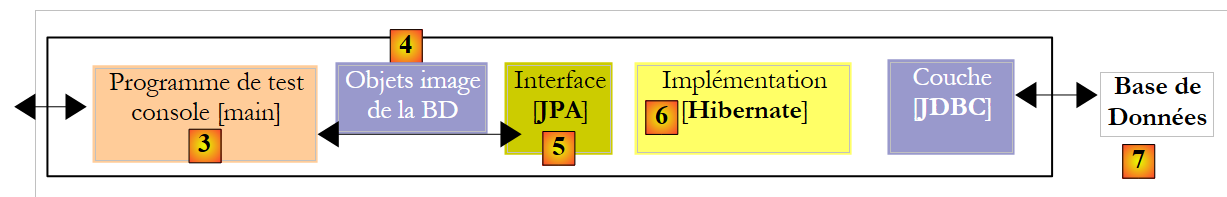

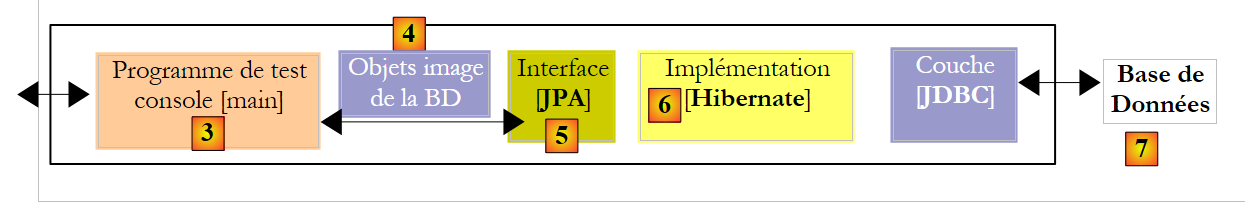

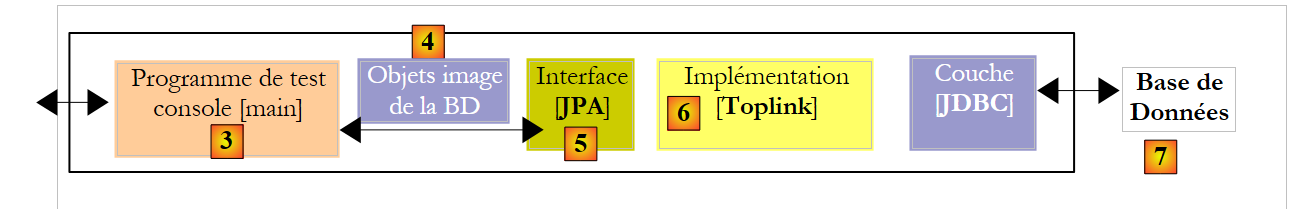

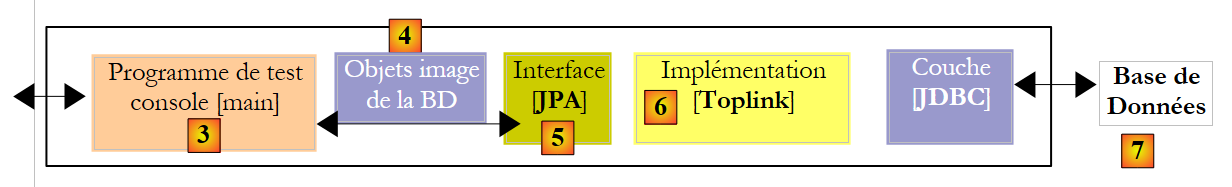

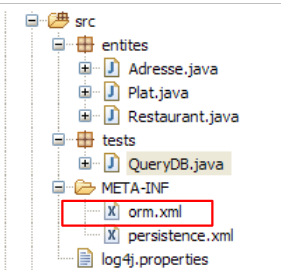

We are in the following runtime environment:

|

The JPA layer [5] must bridge the relational world of the database [7] and the object world [4] manipulated by Java programs [3]. This bridge is established through configuration, and there are two ways to do this:

- using XML files. This was virtually the only way to do it until the advent of JDK 1.5

- using Java annotations since JDK 1.5

In this document, we will use almost exclusively the second method.

The [Person] object representing the [person] table presented earlier could be as follows:

...

@SuppressWarnings("unused")

@Entity

@Table(name="Personne")

public class Personne implements Serializable{

@Id

@Column(name = "ID", nullable = false)

@GeneratedValue(strategy = GenerationType.AUTO)

private Integer id;

@Column(name = "VERSION", nullable = false)

@Version

private int version;

@Column(name = "NOM", length = 30, nullable = false, unique = true)

private String nom;

@Column(name = "PRENOM", length = 30, nullable = false)

private String prenom;

@Column(name = "DATENAISSANCE", nullable = false)

@Temporal(TemporalType.DATE)

private Date datenaissance;

@Column(name = "MARIE", nullable = false)

private boolean marie;

@Column(name = "NBENFANTS", nullable = false)

private int nbenfants;

// manufacturers

public Personne() {

}

public Personne(String nom, String prenom, Date datenaissance, boolean marie,

int nbenfants) {

setNom(nom);

setPrenom(prenom);

setDatenaissance(datenaissance);

setMarie(marie);

setNbenfants(nbenfants);

}

// toString

public String toString() {

...

}

// getters and setters

...

}

Configuration is performed using Java annotations (@Annotation). Java annotations are either processed by the compiler or by specialized tools at runtime. Apart from the annotation on line 3 intended for the compiler, all annotations here are intended for the JPA implementation being used, Hibernate or Toplink. They will therefore be processed at runtime. In the absence of tools capable of interpreting them, these annotations are ignored. Thus, the [Person] class above could be used in a non-JPA context.

There are two distinct cases for using JPA annotations in a class C associated with a table T:

- the table T already exists: the JPA annotations must then replicate the existing structure (column names and definitions, integrity constraints, foreign keys, primary keys, etc.)

- Table T does not exist and will be created based on the annotations found in class C.

Case 2 is the easiest to handle. Using JPA annotations, we specify the structure of the table T we want. Case 1 is often more complex. Table T may have been created a long time ago outside of any JPA context. Its structure may therefore be ill-suited to JPA’s relational-to-object bridge. To simplify matters, we’ll focus on Case 2, where the table T associated with class C will be created based on the JPA annotations in class C.

Let’s examine the JPA annotations of the [Person] class:

- line 4: the @Entity annotation is the first essential annotation. It is placed before the line declaring the class and indicates that the class in question must be managed by the JPA persistence layer. Without this annotation, all other JPA annotations would be ignored.

- line 5: the @Table annotation designates the database table that the class represents. Its main argument is name, which specifies the table’s name. Without this argument, the table will be named after the class, in this case [Person]. In our example, the @Table annotation is therefore unnecessary.

- Line 8: The @Id annotation is used to designate the field in the class that represents the table’s primary key. This annotation is mandatory. Here, it indicates that the id field on line 11 represents the table’s primary key.

- Line 9: The @Column annotation is used to link a field in the class to the table column that the field represents. The name attribute specifies the name of the column in the table. If this attribute is omitted, the column takes the same name as the field. In our example, the name argument was therefore not required. The nullable=false argument indicates that the column associated with the field cannot have the value NULL and that the field must therefore have a value.

- Line 10: The @GeneratedValue annotation specifies how the primary key is generated when it is automatically generated by the DBMS. This will be the case in all our examples. It is not mandatory. Thus, our Person could have a student ID that serves as the primary key and is not generated by the DBMS but set by the application. In this case, the @GeneratedValue annotation would be omitted. The strategy argument specifies how the primary key is generated when generated by the DBMS. Not all DBMSs use the same technique for generating primary key values. For example:

uses a value generator called before each insertion | |

the primary key field is defined as having the Identity type. The result is similar to Firebird’s value generator, except that the key value is not known until after the row is inserted. | |

uses an object called SEQUENCE, which again acts as a value generator |

The JPA layer must generate different SQL statements depending on the DBMS in order to create the value generator. We specify the type of DBMS it needs to handle through configuration. As a result, it can determine the standard strategy for generating primary key values for that DBMS. The argument strategy = GenerationType.*****AUTO* tells the JPA layer to use this standard strategy. This technique has worked in all the examples in this document for the seven DBMSs used.

- Line 14: The @Version annotation designates the field used to manage concurrent access to the same row in the table.

To understand this issue of concurrent access to the same row in the [person] table, let’s assume a web application allows a person’s information to be updated and consider the following scenario:

At time T1, user U1 begins editing a person P. At this moment, the number of children is 0. He changes this number to 1, but before he submits his changes, user U2 begins editing the same person P. Since U1 has not yet submitted his changes, U2 sees the number of children as 0 on his screen. U2 changes the name of person P to uppercase. Then U1 and U2 save their changes in that order. U2’s change will take precedence: in the database, the name will be in uppercase and the number of children will remain at zero, even though U1 believes they changed it to 1.

The concept of a person’s version helps us solve this problem. Let’s revisit the same use case:

At time T1, a user U1 begins editing a person P. At this point, the number of children is 0 and the version is V1. They change the number of children to 1, but before they commit their change, a user U2 begins editing the same person P. Since U1 has not yet committed their change, U2 sees the number of children as 0 and the version as V1. U2 changes the name of person P to uppercase. Then U1 and U2 commit their changes in that order. Before committing a change, we verify that the user modifying person P holds the same version as the currently saved version of person P. This will be the case for user U1. Their change is therefore accepted, and we then change the version of the modified person from V1 to V2 to indicate that the person has undergone a change. When validating U2’s modification, we will notice that U2 has version V1 of person P, whereas the current version is V2. We can then inform user U2 that someone else acted before them and that they must start with the new version of person P. They will do so, retrieve a version V2 of person P who now has a child, capitalize the name, and validate. Their modification will be accepted if the registered person P is still version V2. Ultimately, the modifications made by U1 and U2 will be taken into account, whereas in the use case without versions, one of the modifications would have been lost.

The [DAO] layer of the client application can manage the version of the [Person] class itself. Every time an object P is modified, the version of that object will be incremented by 1 in the table. The @Version annotation allows this management to be transferred to the JPA layer. The field in question does not need to be named version as in the example. It can have any name.

The fields corresponding to the @Id and @Version annotations are present for persistence purposes. They would not be needed if the [Person] class did not need to be persisted. We can see, therefore, that an object is represented differently depending on whether or not it needs to be persisted.

- Line 17: Once again, the @Column annotation provides information about the column in the [person] table associated with the name field of the Person class. Here we find two new arguments:

- unique=true indicates that a person’s name must be unique. This will result in the addition of a uniqueness constraint on the NAME column of the [person] table in the database.

- length=30 sets the number of characters in the NAME column to 30. This means that the type of this column will be VARCHAR(30).

- Line 24: The @Temporal annotation is used to specify the SQL type for a date/time column or field. The TemporalType.DATE type denotes a date without an associated time. The other possible types are TemporalType.TIME for encoding a time and TemporalType.TIMESTAMP for encoding a date and time.

Let’s now comment on the rest of the code in the [Person] class:

- Line 6: The class implements the Serializable interface. Serializing an object involves converting it into a sequence of bits. Deserialization is the reverse operation. Serialization/deserialization is particularly used in client/server applications where objects are exchanged over the network. Client or server applications are unaware of this operation, which is performed transparently by the JVMs. For this to be possible, however, the classes of the exchanged objects must be "tagged" with the Serializable keyword.

- Line 37: a constructor for the class. Note that the id and version fields are not included among the parameters. This is because these two fields are managed by the JPA layer and not by the application.

- Lines 51 and beyond: the get and set methods for each of the class’s fields. Note that JPA annotations can be placed on the fields’ get methods instead of on the fields themselves. The placement of the annotations indicates the mode JPA should use to access the fields:

- if the annotations are placed at the field level, JPA will access the fields directly to read or write them

- if the annotations are placed at the get level, JPA will access the fields via the get/set methods to read or write them

The position of the @Id annotation determines the placement of JPA annotations in a class. When placed at the field level, it indicates direct access to the fields; when placed at the get level, it indicates access to the fields via the get and set methods. The other annotations must then be placed in the same way as the @Id annotation.

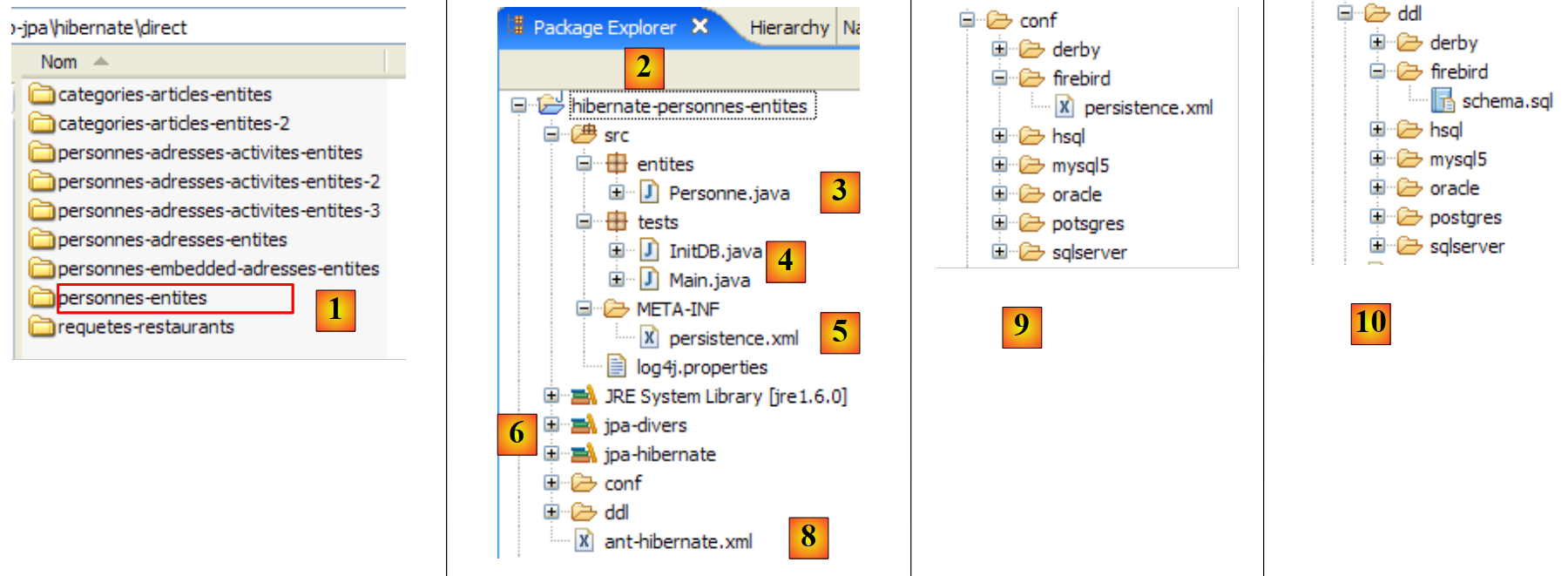

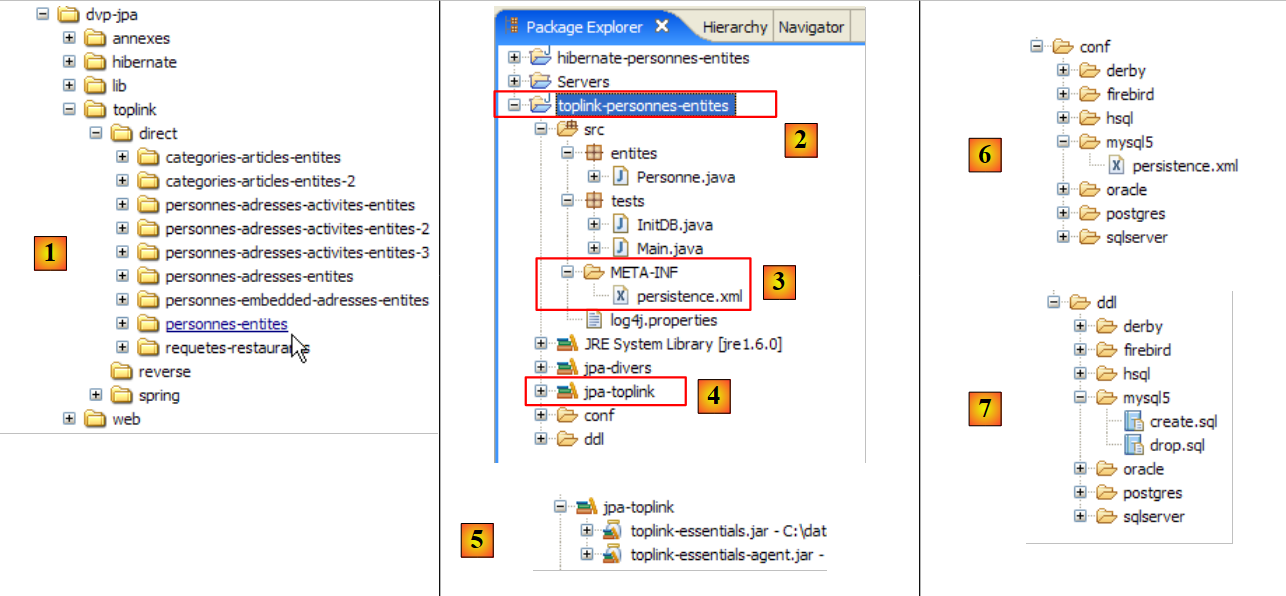

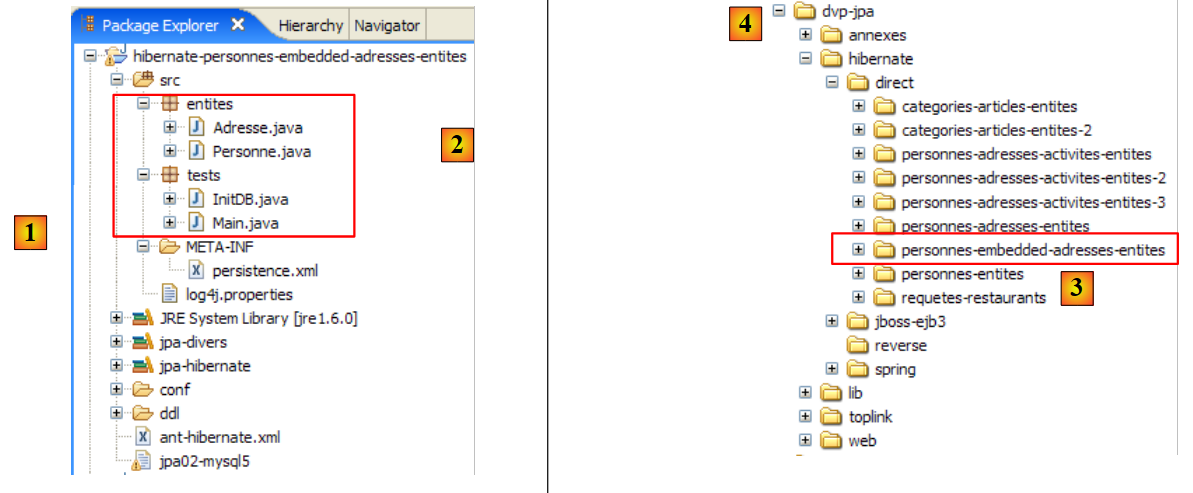

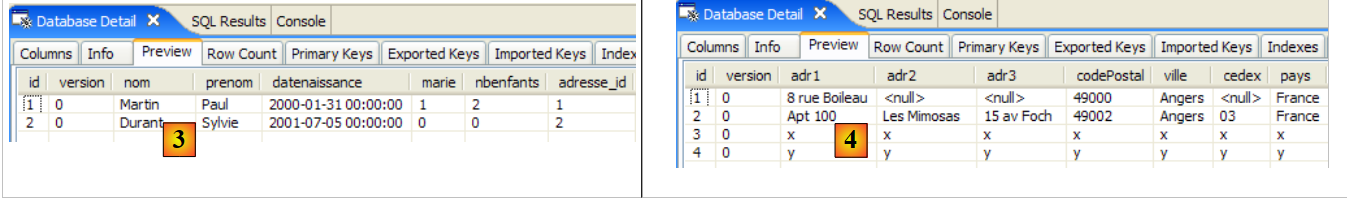

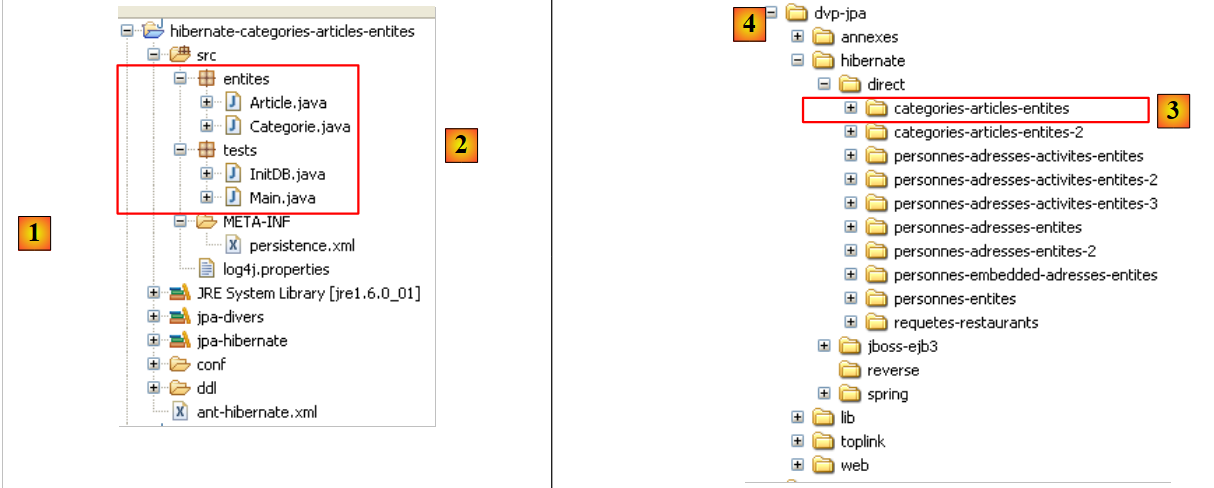

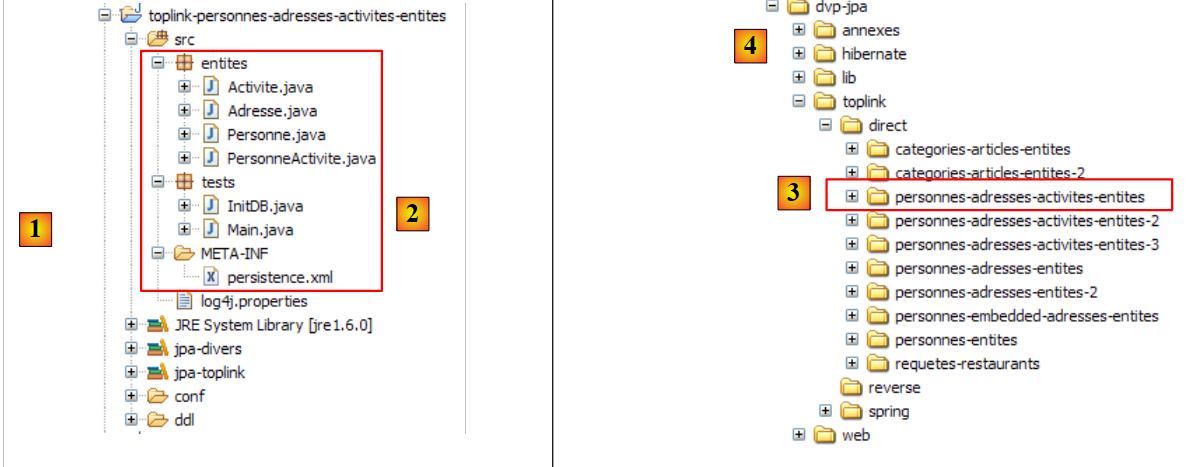

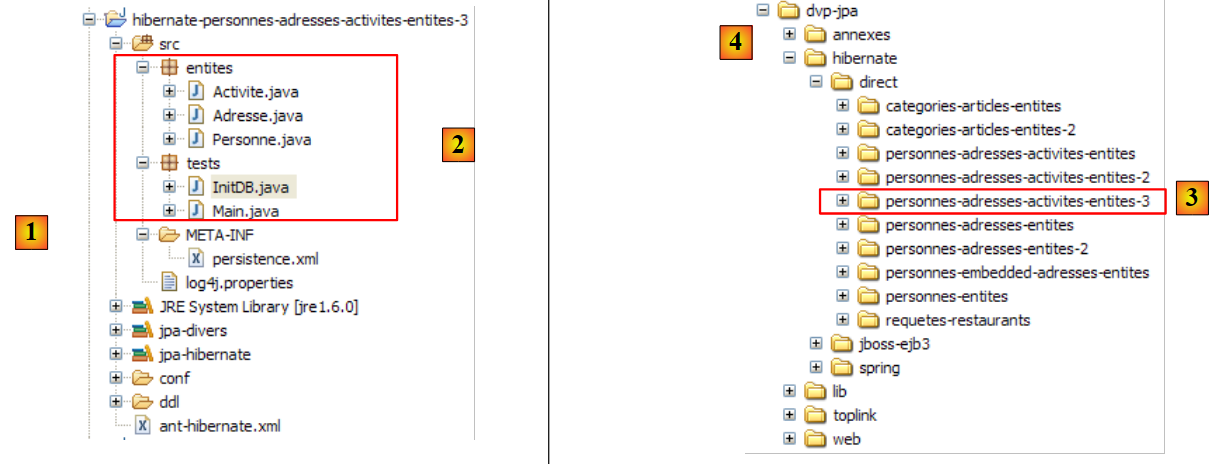

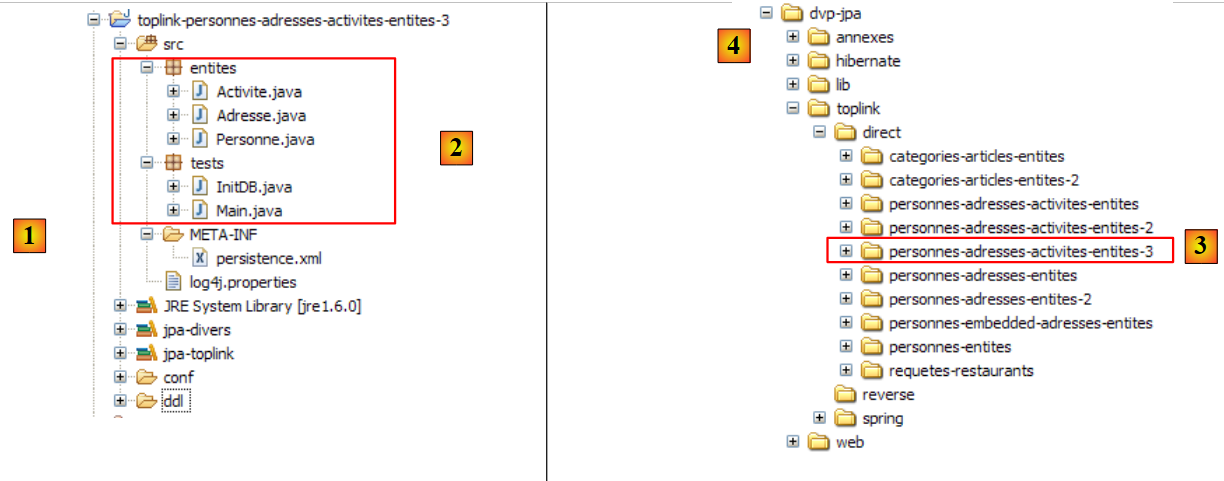

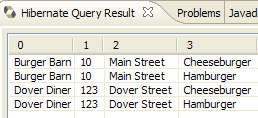

2.1.3. The Eclipse Test Project

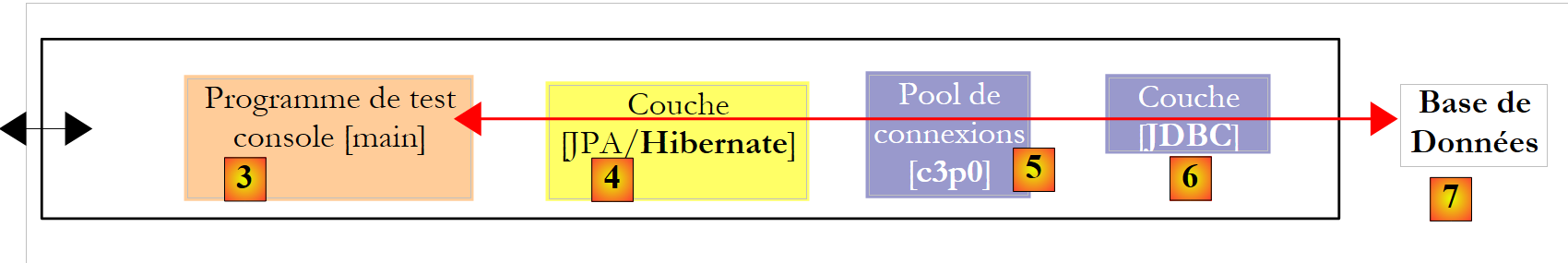

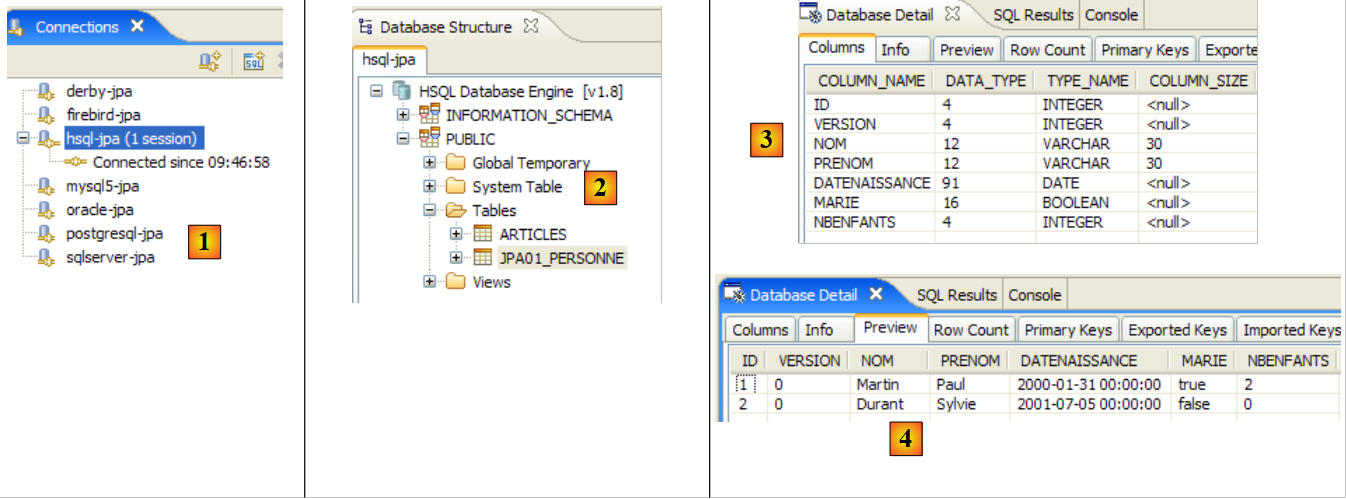

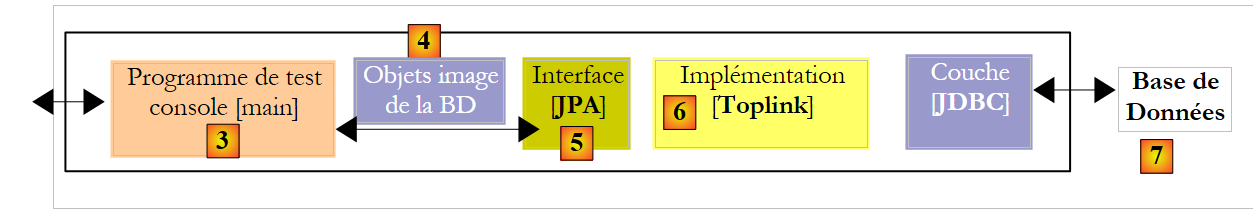

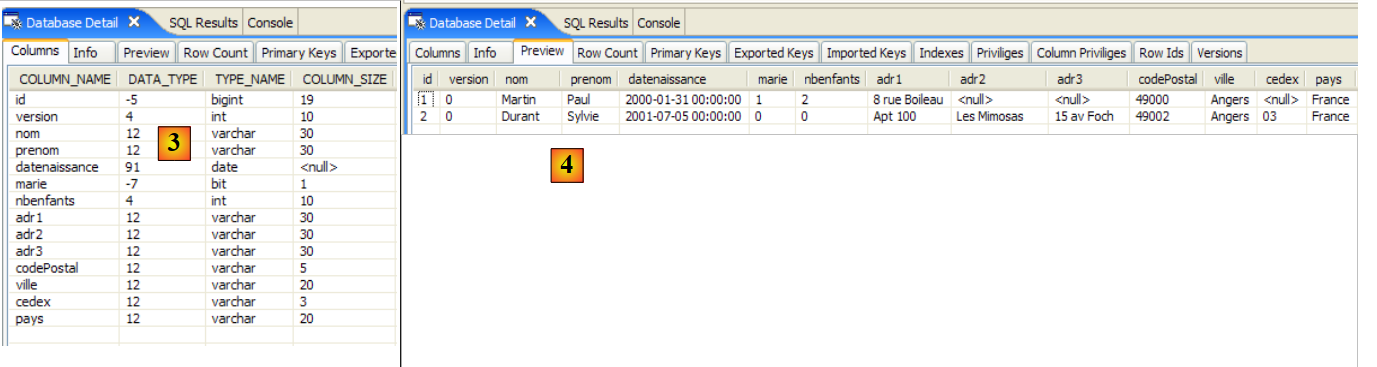

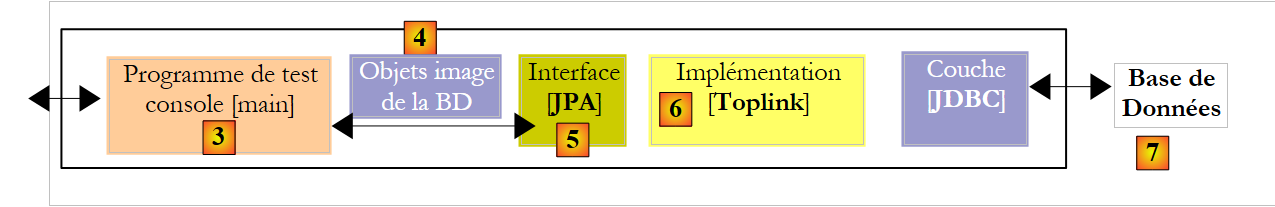

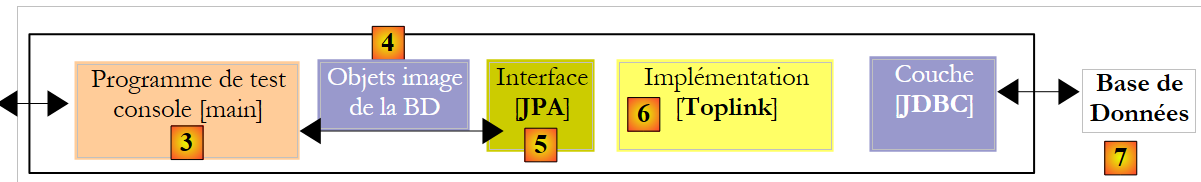

We will conduct our first experiments with the previous [Person] entity. We will carry them out using the following architecture:

|

- in [7]: the database that will be generated based on the annotations of the [Person] entity, as well as additional configurations specified in a file named [persistence.xml]

- in [5, 6]: a JPA layer implemented by Hibernate

- in [4]: the [Person] entity

- in [3]: a console-based test program

We will conduct various experiments:

- generate the database schema using an Ant script and the Hibernate Tools

- generate the database and initialize it with some data

- interact with the database and perform the four basic operations on the [person] table (insert, update, delete, query)

The necessary tools are as follows:

- Eclipse and its plugins described in Section 5.2.

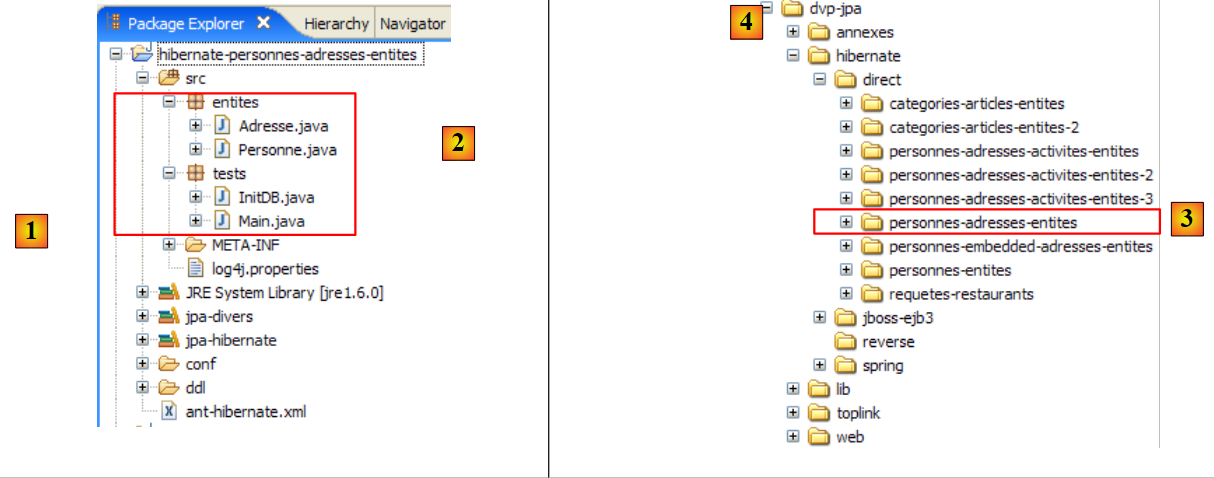

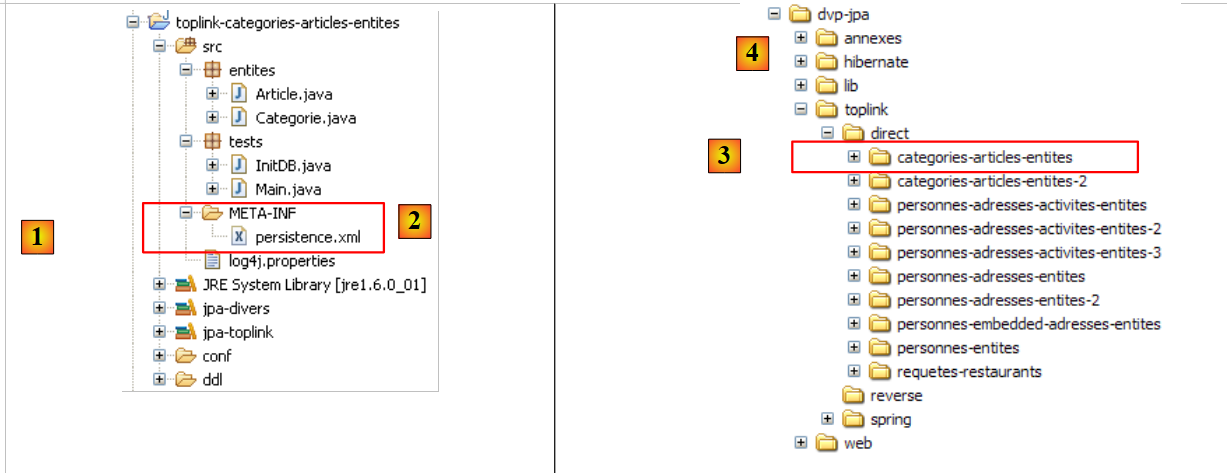

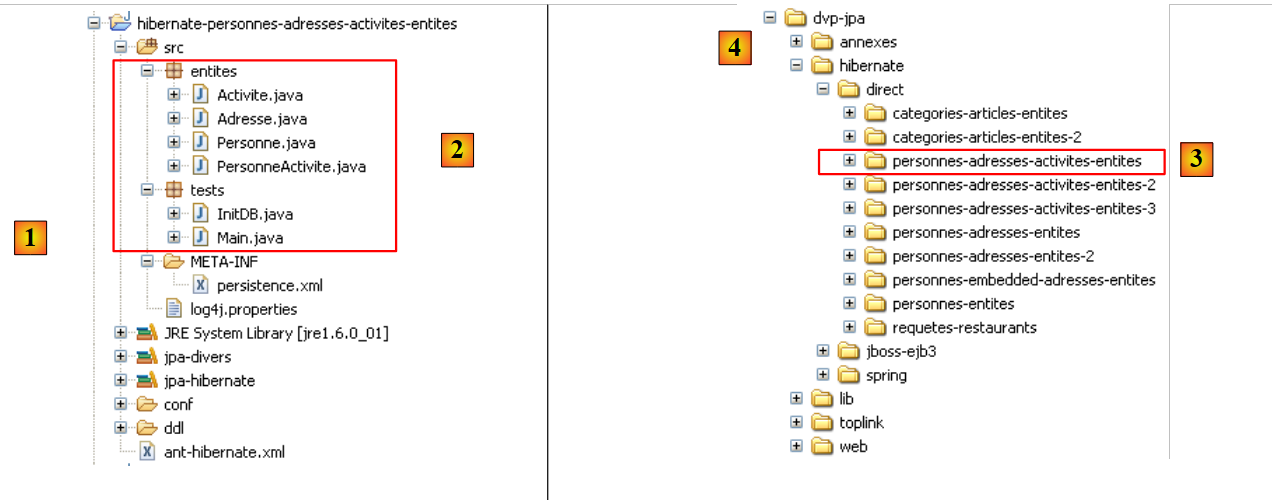

- the [hibernate-personnes-entites] project, which can be found in the <examples>/hibernate/direct/personnes-entites folder

- the various DBMSs described in the appendices (Section 5 and beyond).

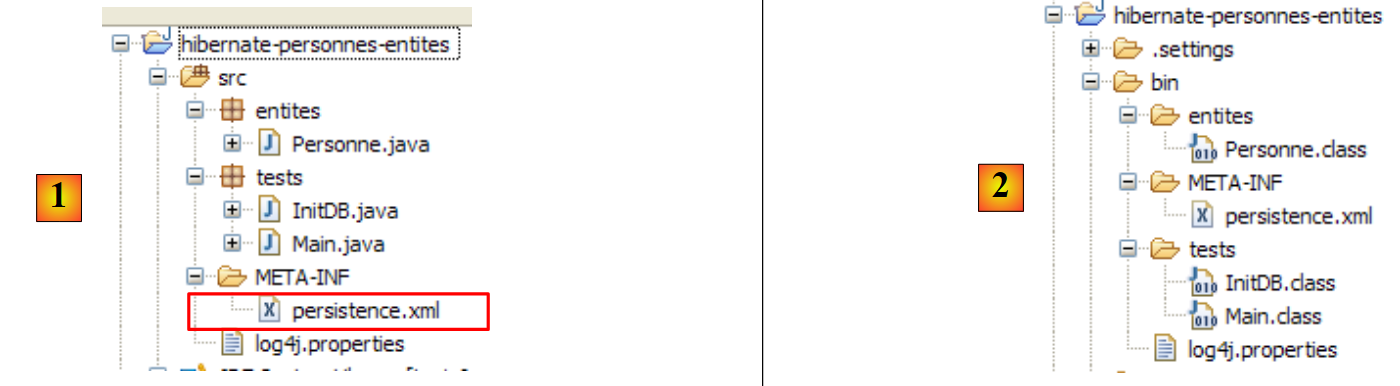

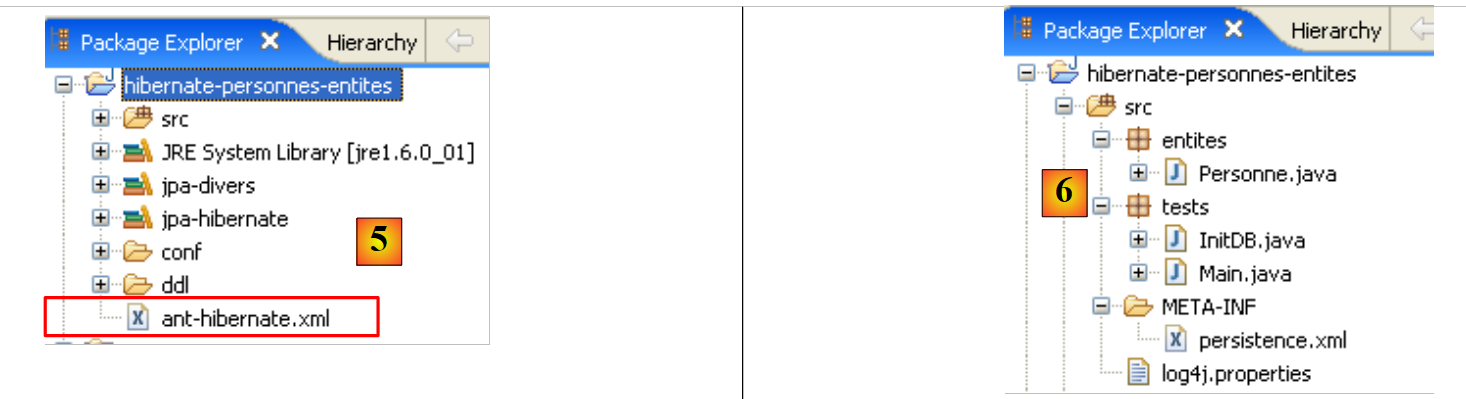

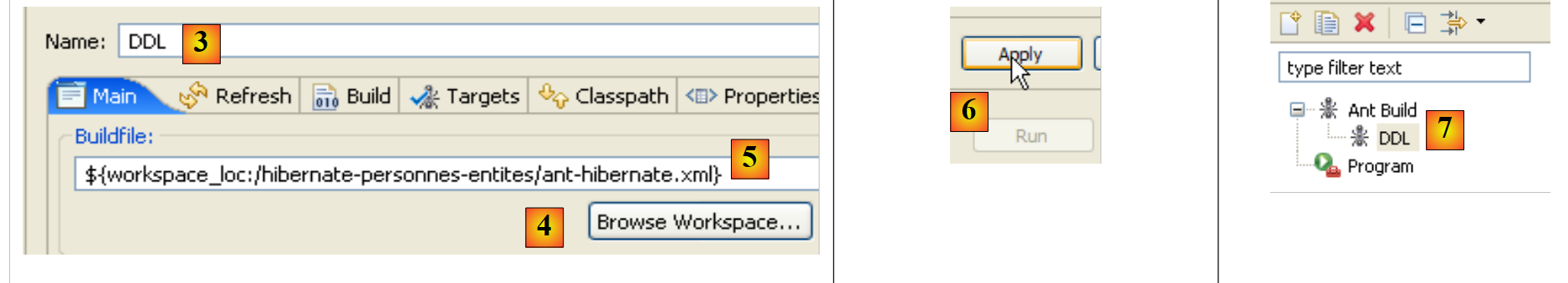

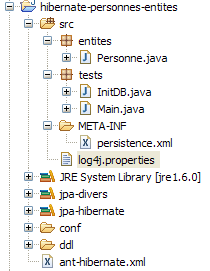

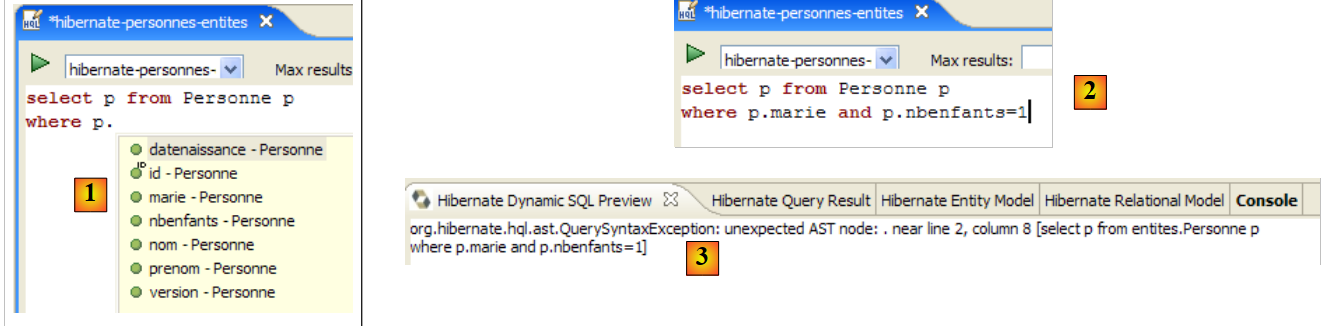

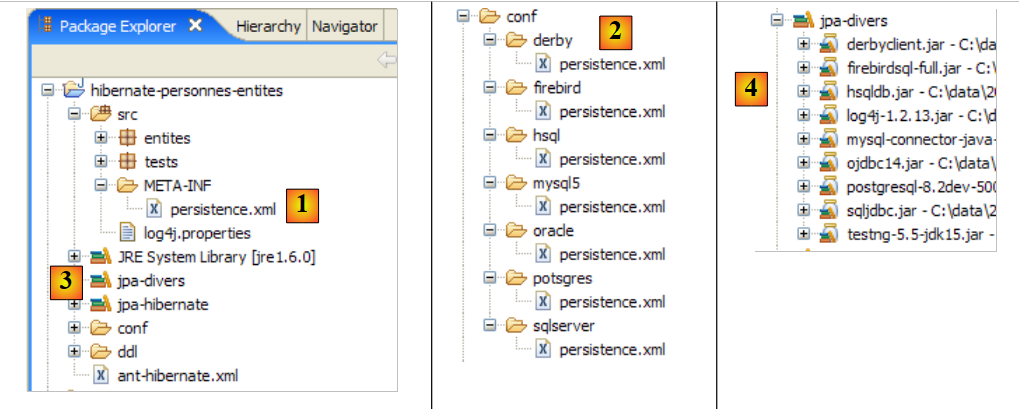

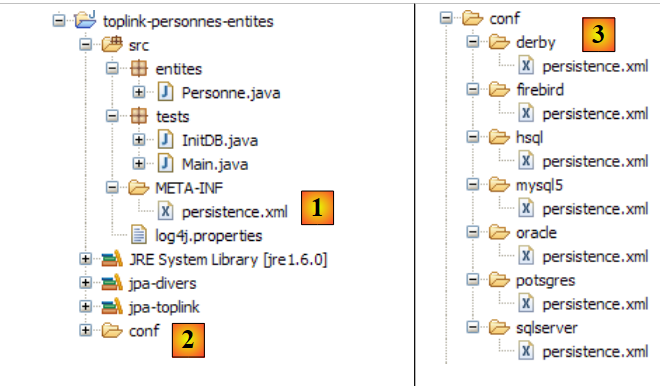

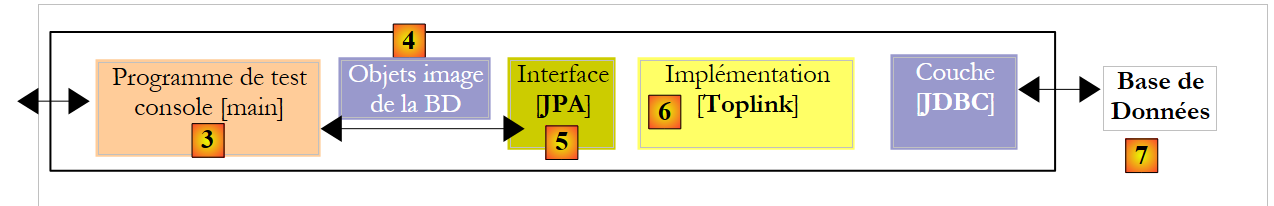

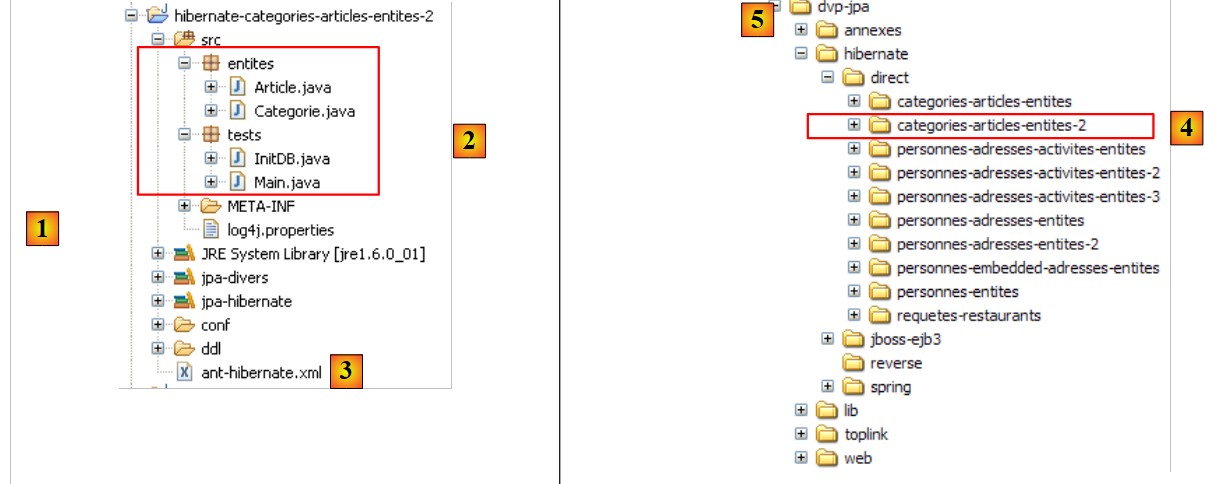

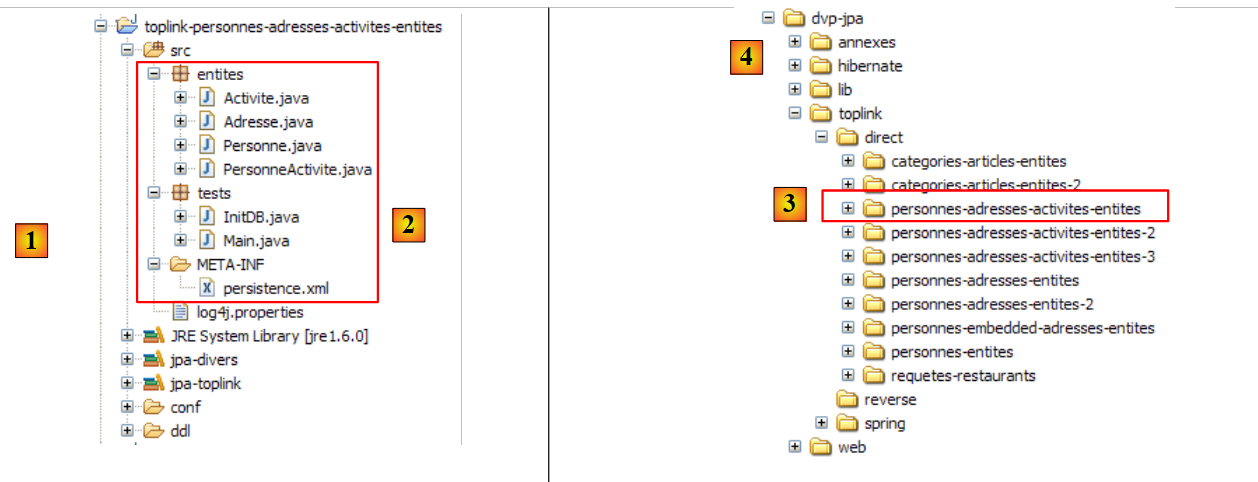

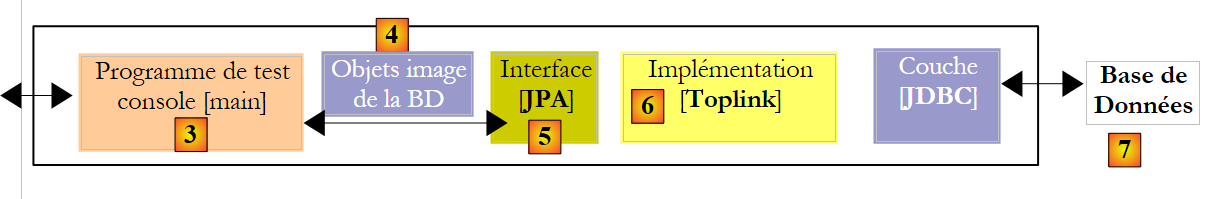

The Eclipse project is as follows:

|

- in [1]: the Eclipse project folder

- in [2]: the project imported into Eclipse (File / Import)

- in [3]: the [Person] entity being tested

- in [4]: the test programs

- in [5]: [persistence.xml] is the configuration file for the JPA layer

- in [6]: the libraries used. They were described in section 1.5.

- in [8]: an Ant script that will be used to generate the table associated with the [Person] entity

- in [9]: the [persistence.xml] files for each of the DBMSs used

- in [10]: the schemas of the generated database for each of the DBMSs used

We will describe these elements one by one.

2.1.4. The [Person] entity (2)

We are making a slight modification to the previous description of the [Person] entity, as well as adding some additional information:

package entites;

...

@SuppressWarnings({ "unused", "serial" })

@Entity

@Table(name="jpa01_personne")

public class Personne implements Serializable{

@Id

@Column(name = "ID", nullable = false)

@GeneratedValue(strategy = GenerationType.AUTO)

private Integer id;

@Column(name = "VERSION", nullable = false)

@Version

private int version;

@Column(name = "NOM", length = 30, nullable = false, unique = true)

private String nom;

@Column(name = "PRENOM", length = 30, nullable = false)

private String prenom;

@Column(name = "DATENAISSANCE", nullable = false)

@Temporal(TemporalType.DATE)

private Date datenaissance;

@Column(name = "MARIE", nullable = false)

private boolean marie;

@Column(name = "NBENFANTS", nullable = false)

private int nbenfants;

// manufacturers

public Personne() {

}

public Personne(String nom, String prenom, Date datenaissance, boolean marie,

int nbenfants) {

....

}

// toString

public String toString() {

return String.format("[%d,%d,%s,%s,%s,%s,%d]", getId(), getVersion(),

getNom(), getPrenom(), new SimpleDateFormat("dd/MM/yyyy")

.format(getDatenaissance()), isMarie(), getNbenfants());

}

// getters and setters

...

}

- line 7: we name the table associated with the [Person] entity [jpa01_personne]. In this document, various tables will be created in a schema always named jpa. By the end of this tutorial, the jpa schema will contain many tables. To help the reader keep track, tables that are related to each other will have the same prefix jpaxx_.

- line 45: a [toString] method to display a [Person] object on the console.

2.1.5. Configuring the Data Access Layer

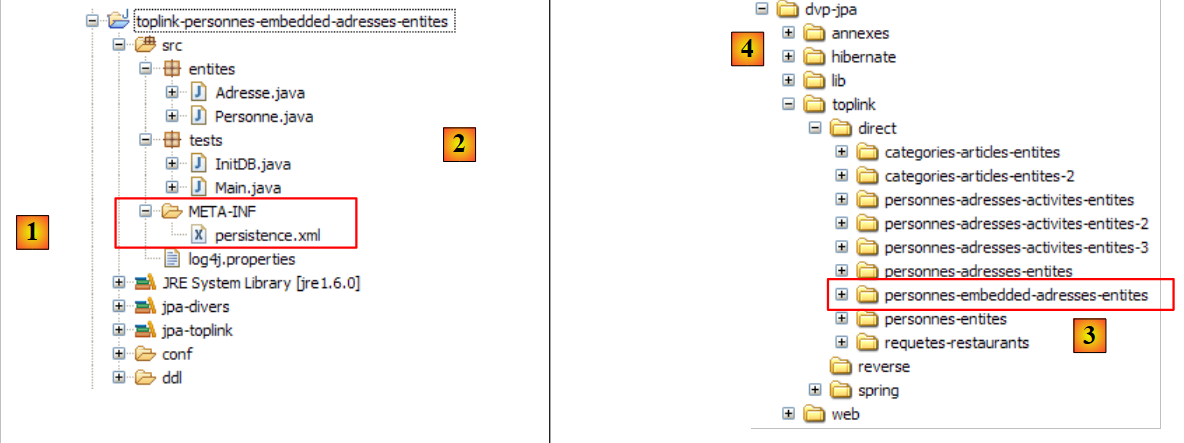

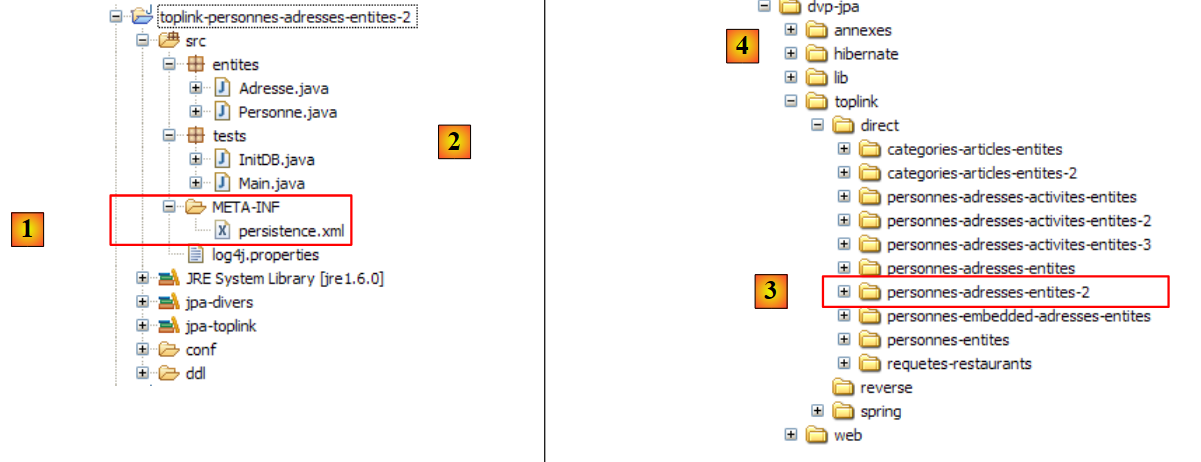

In the Eclipse project above, the JPA layer is configured via the [META-INF/persistence.xml] file:

|

At runtime, the [META-INF/persistence.xml] file is searched for in the application’s classpath. In our Eclipse project, everything in the [/src] folder [1] is copied to a [/bin] folder [2]. This folder is part of the project’s classpath. This is why [META-INF/persistence.xml] will be found when the JPA layer configures itself.

By default, Eclipse does not place source code in the project’s [/src] folder but directly under the project folder itself. All our Eclipse projects will be configured so that the sources are in [/src] and the compiled classes in [/bin], as shown in Section 5.2.1.

Let’s examine the JPA layer configuration in our project’s [persistence.xml] file:

<?xml version="1.0" encoding="UTF-8"?>

<persistence version="1.0" xmlns="http://java.sun.com/xml/ns/persistence">

<persistence-unit name="jpa" transaction-type="RESOURCE_LOCAL">

<!-- provider -->

<provider>org.hibernate.ejb.HibernatePersistence</provider>

<properties>

<!-- Persistent classes -->

<property name="hibernate.archive.autodetection" value="class, hbm" />

<!-- logs SQL

<property name="hibernate.show_sql" value="true"/>

<property name="hibernate.format_sql" value="true"/>

<property name="use_sql_comments" value="true"/>

-->

<!-- connection JDBC -->

<property name="hibernate.connection.driver_class" value="com.mysql.jdbc.Driver" />

<property name="hibernate.connection.url" value="jdbc:mysql://localhost:3306/jpa" />

<property name="hibernate.connection.username" value="jpa" />

<property name="hibernate.connection.password" value="jpa" />

<!-- automatic schematic creation -->

<property name="hibernate.hbm2ddl.auto" value="create" />

<!-- Dialect -->

<property name="hibernate.dialect" value="org.hibernate.dialect.MySQL5InnoDBDialect" />

<!-- properties DataSource c3p0 -->

<property name="hibernate.c3p0.min_size" value="5" />

<property name="hibernate.c3p0.max_size" value="20" />

<property name="hibernate.c3p0.timeout" value="300" />

<property name="hibernate.c3p0.max_statements" value="50" />

<property name="hibernate.c3p0.idle_test_period" value="3000" />

</properties>

</persistence-unit>

</persistence>

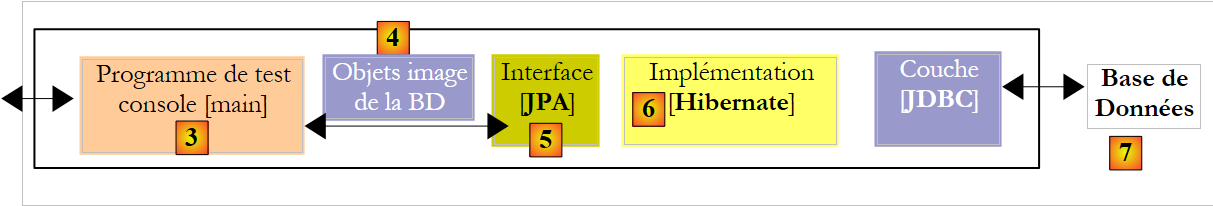

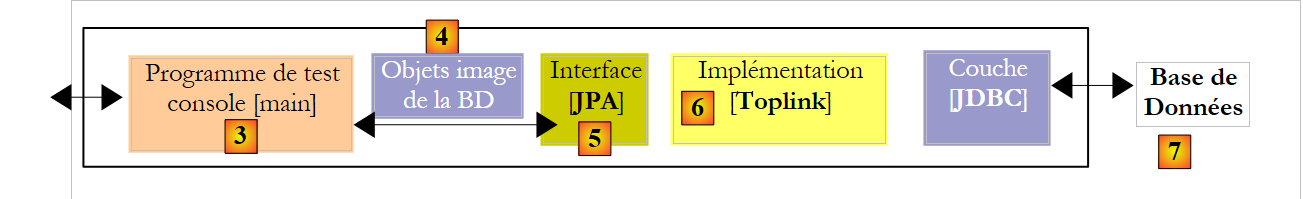

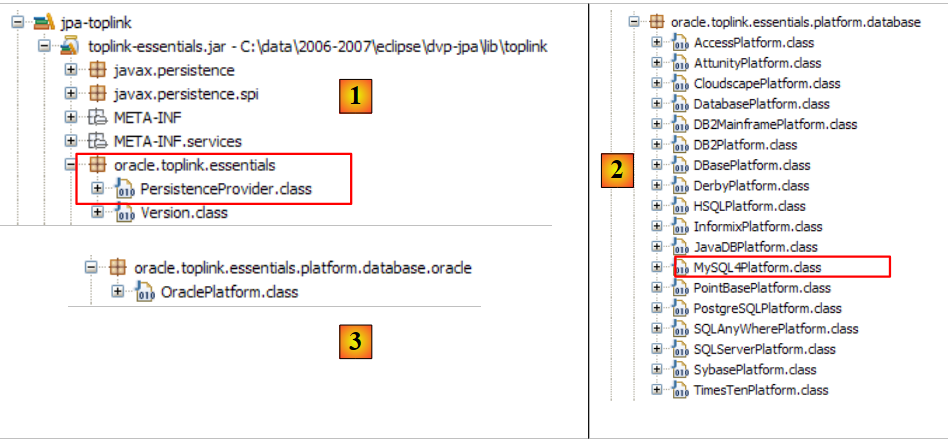

To understand this configuration, we need to revisit the data access architecture of our application:

|

- the [persistence.xml] file configures layers [4, 5, 6]

- [4]: Hibernate implementation of JPA

- [5]: Hibernate accesses the database via a connection pool. A connection pool is a pool of open connections to the DBMS. A DBMS is accessed by multiple users, yet for performance reasons, it cannot exceed a limit N of open connections simultaneously. Well-written code opens a connection to the DBMS for the minimum amount of time: it executes SQL commands and closes the connection. It will do this repeatedly, every time it needs to work with the database. The cost of opening and closing a connection is not negligible, and this is where the connection pool comes in. When the application starts, the connection pool opens N1 connections to the DBMS. The application requests an open connection from the pool whenever it needs one. The connection is returned to the pool as soon as the application no longer needs it, preferably as quickly as possible. The connection is not closed and remains available for the next user. A connection pool is therefore a system for sharing open connections.

- [6]: the JDBC driver for the DBMS being used

Now let’s see how the [persistence.xml] file configures the layers [4, 5, 6] above:

- line 2: the root tag of the XML file is <persistence>.

- line 3: <persistence-unit> is used to define a persistence unit. There can be multiple persistence units. Each one has a name (name attribute) and a transaction type (transaction-type attribute). The application will access the persistence unit via its name, in this case jpa. The transaction type RESOURCE_LOCAL indicates that the application manages transactions with the DBMS itself. This will be the case here. When the application runs in an EJB3 container, it can use the container’s transaction service. In that case, we would set transaction-type=JTA (Java Transaction API). JTA is the default value when the transaction-type attribute is omitted.

- Line 5: The <provider> tag is used to define a class that implements the [javax.persistence.spi.PersistenceProvider] interface, which allows the application to initialize the persistence layer. Because we are using a JPA/Hibernate implementation, the class used here is a Hibernate class.

- Line 6: The <properties> tag introduces properties specific to the chosen provider. Thus, depending on whether you have chosen Hibernate, TopLink, Kodo, etc., you will have different properties. The following are specific to Hibernate.

- Line 8: Instructs Hibernate to scan the project’s classpath to find classes annotated with @Entity so it can manage them. @Entity classes can also be declared using <class>class_name</class> tags, directly under the <persistence-unit> tag. This is what we will do with the JPA/Toplink provider.

- Lines 10–12, which are commented out here, configure Hibernate’s console logs:

- Line 10: to enable or disable the display of SQL statements issued by Hibernate to the DBMS. This is very useful during the learning phase. Due to the relational/object bridge, the application works on persistent objects to which it applies operations such as [persist, merge, remove]. It is very helpful to know which SQL statements are actually issued for these operations. By studying them, you gradually learn to anticipate the SQL statements Hibernate will generate when performing such operations on persistent objects, and the relational/object bridge begins to take shape in your mind.

- Line 11: The SQL statements displayed on the console can be formatted neatly to make them easier to read

- Line 12: The displayed SQL statements will also be annotated

- Lines 15–19 define the JDBC layer (layer [6] in the architecture):

- line 15: the JDBC driver class for the DBMS, here MySQL5

- line 16: the URL of the database being used

- Lines 17, 18: the connection username and password

- Here we use elements explained in the appendices in section 5.5. The reader is encouraged to read this section on MySQL5.

- line 22: Hibernate needs to know which DBMS it is working with. This is because all DBMSs have proprietary SQL extensions, such as their own way of handling the automatic generation of primary key values, ... which means that Hibernate needs to know the DBMS it is working with in order to send it SQL commands that the DBMS will understand. [MySQL5InnoDBDialect] refers to the MySQL5 DBMS with InnoDB tables that support transactions.

- Lines 24–28 configure the c3p0 connection pool (layer [5] in the architecture):

- Lines 24, 25: the minimum (default 3) and maximum number of connections (default 15) in the pool. The default initial number of connections is 3.

- Line 26: maximum wait time in milliseconds for a connection request from the client. After this timeout, c3p0 will throw an exception.

- line 27: to access the database, Hibernate uses prepared SQL statements (PreparedStatement) that c3p0 can cache. This means that if the application requests a prepared SQL statement that is already in the cache a second time, it will not need to be prepared (preparing an SQL statement incurs a cost) and the one in the cache will be used. Here, we specify the maximum number of prepared SQL statements the cache can hold, across all connections (a prepared SQL statement belongs to a single connection).

- Line 28: Connection validity check interval in milliseconds. A connection in the pool can become invalid for various reasons (the JDBC driver invalidates the connection because it has been idle too long, the JDBC driver has bugs, etc.).

- Line 20: Here, we specify that when the persistence layer is initialized, the database schema for @Entity objects should be generated. Hibernate now has all the tools to generate the SQL statements for creating the database tables:

- the configuration of the @Entity objects allows it to know which tables to generate

- Lines 15–18 and 24–28 allow it to establish a connection with the DBMS

- line 22 tells it which SQL dialect to use to generate the tables

Thus, the [persistence.xml] file used here recreates a new database with each new execution of the application. The tables are recreated (create table) after being dropped (drop table) if they existed. Note that this is obviously not something to do with a production database...

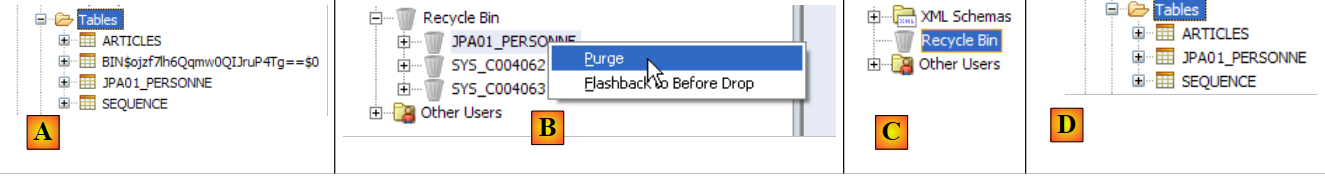

Tests have shown that the drop/create phase for tables can fail. This was particularly the case when, for the same test, we switched from a JPA/Hibernate layer to a JPA/Toplink layer or vice versa. Starting from the same @Entity objects, the two implementations do not generate exactly the same tables, generators, sequences, etc., and it has sometimes happened that the drop/create phase failed, requiring the tables to be deleted manually. The "Appendices" section, starting from paragraph 5, describes the tools available for performing this task manually. It should be noted that the JPA/Hibernate implementation proved to be the most efficient during this initial phase of database content creation: crashes were rare.

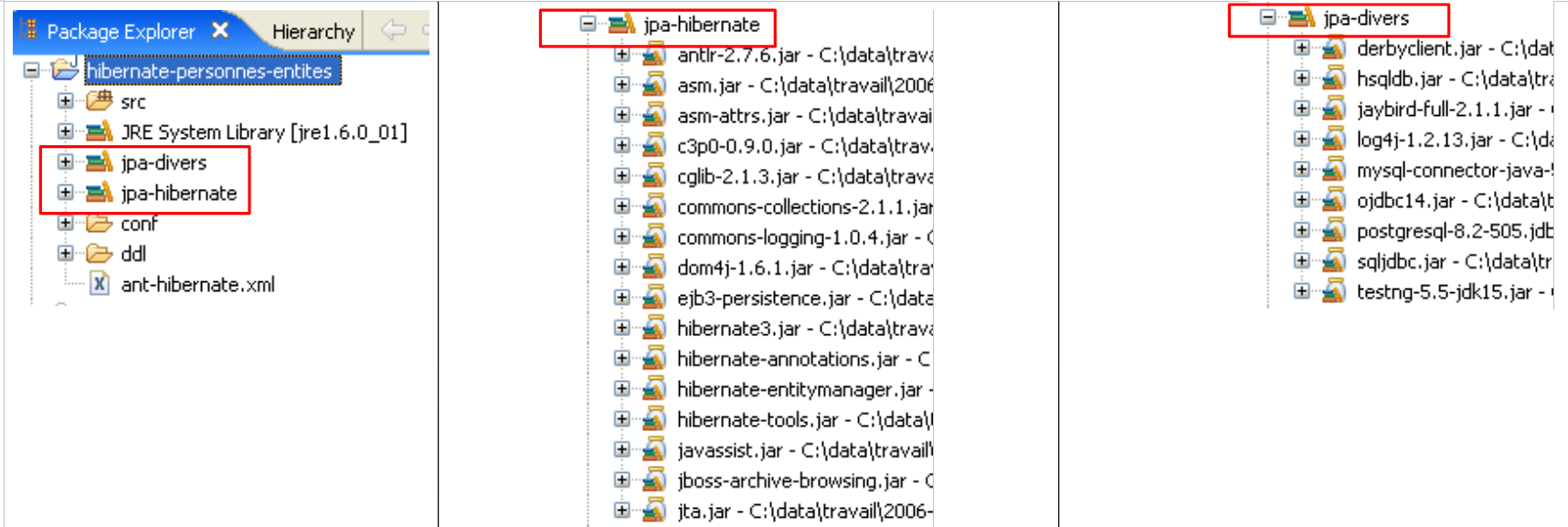

The tools used by the JPA/Hibernate layer are in the [jpa-hibernate] library, presented in section 1.5, page 8. The JDBC drivers required to access the DBMS are in the [jpa-divers] library. These two libraries have been added to the classpath of the project studied here. Their contents are summarized below:

|

2.1.6. Generating the database with an Ant script

As we have just seen, Hibernate provides tools to generate the database schema for the application’s @Entity objects. Hibernate can:

- generate the text file containing the SQL statements that create the database. Only the dialect specified in [persistence.xml] is used in this case.

- create the tables representing the @Entity objects in the target database defined in [persistence.xml]. In this case, the entire [persistence.xml] file is used.

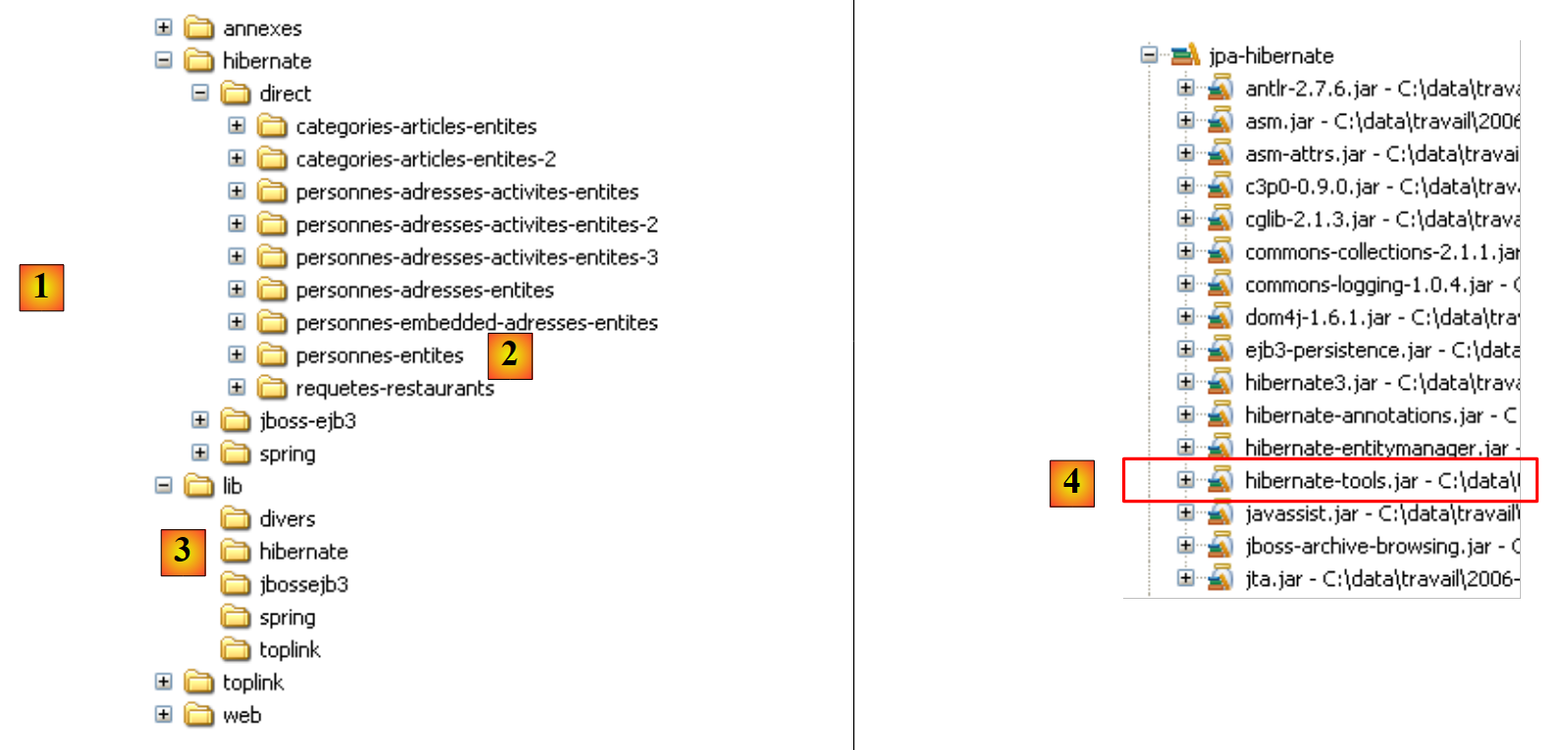

We will present an Ant script capable of generating the database schema for @Entity objects. This script is not my own: it is based on a similar script from [ref1]. Ant (Another Neat Tool) is a Java batch task tool. Ant scripts are not easy for beginners to understand. We will use only one, the one we are now commenting on:

|

- in [1]: the directory structure of the examples in this tutorial.

- in [2]: the [people-entities] folder of the Eclipse project currently being studied

- in [3]: the <lib> folder containing the five JAR libraries defined in section 1.5.

- in [4]: the [hibernate-tools.jar] archive required for one of the tasks in the [ant-hibernate.xml] script that we will examine.

|

- in [5]: the Eclipse project and the [ant-hibernate.xml] script

- in [6]: the [src] folder of the project

The [ant-hibernate.xml] script [5] will use the JAR files in the <lib> folder [3], specifically the [hibernate-tools.jar] file [4] in the [lib/hibernate] folder. We have reproduced the directory tree so that the reader can see that to find the [lib] folder from the [people-entities] folder [2] in the [ant-hibernate.xml] script, you must follow the path: ../../../lib.

Let’s examine the [ant-hibernate.xml] script:

<project name="jpa-hibernate" default="compile" basedir=".">

<!-- nom du projet et version -->

<property name="proj.name" value="jpa-hibernate" />

<property name="proj.shortname" value="jpa-hibernate" />

<property name="version" value="1.0" />

<!-- Propriété globales -->

<property name="src.java.dir" value="src" />

<property name="lib.dir" value="../../../lib" />

<property name="build.dir" value="bin" />

<!-- le Classpath du projet -->

<path id="project.classpath">

<fileset dir="${lib.dir}">

<include name="**/*.jar" />

</fileset>

</path>

<!-- les fichiers de configuration qui doivent être dans le classpath-->

<patternset id="conf">

<include name="**/*.xml" />

<include name="**/*.properties" />

</patternset>

<!-- Nettoyage projet -->

<target name="clean" description="Nettoyer le projet">

<delete dir="${build.dir}" />

<mkdir dir="${build.dir}" />

</target>

<!-- Compilation projet -->

<target name="compile" depends="clean">

<javac srcdir="${src.java.dir}" destdir="${build.dir}" classpathref="project.classpath" />

</target>

<!-- Copier les fichiers de configuration dans le classpath -->

<target name="copyconf">

<mkdir dir="${build.dir}" />

<copy todir="${build.dir}">

<fileset dir="${src.java.dir}">

<patternset refid="conf" />

</fileset>

</copy>

</target>

<!-- Hibernate Tools -->

<taskdef name="hibernatetool" classname="org.hibernate.tool.ant.HibernateToolTask" classpathref="project.classpath" />

<!-- Générer la DDL de la base -->

<target name="DDL" depends="compile, copyconf" description="Génération DDL base">

<hibernatetool destdir="${basedir}">

<classpath path="${build.dir}" />

<!-- Utiliser META-INF/persistence.xml -->

<jpaconfiguration />

<!-- export -->

<hbm2ddl drop="true" create="true" export="false" outputfilename="ddl/schema.sql" delimiter=";" format="true" />

</hibernatetool>

</target>

<!-- Générer la base -->

<target name="BD" depends="compile, copyconf" description="Génération BD">

<hibernatetool destdir="${basedir}">

<classpath path="${build.dir}" />

<!-- Utiliser META-INF/persistence.xml -->

<jpaconfiguration />

<!-- export -->

<hbm2ddl drop="true" create="true" export="true" outputfilename="ddl/schema.sql" delimiter=";" format="true" />

</hibernatetool>

</target>

</project>

- Line 1: The [ant] project is named "jpa-hibernate". It consists of a set of tasks, one of which is the default task: in this case, the task named "compile". An Ant script is called to execute a task T. If no task is specified, the default task is executed. basedir="." indicates that for all relative paths found in the script, the starting point is the folder containing the Ant script, in this case the <examples>/hibernate/direct/people-entities folder.

- Lines 3–11: define script variables using the tag <property name="variableName" value="variableValue"/>. The variable can then be used in the script with the notation ${variableName}. The names can be anything. Let’s take a closer look at the variables defined on lines 9–11:

- Line 9: defines a variable named "src.java.dir" (the name is arbitrary) which, later in the script, will refer to the folder containing the Java source code. Its value is "src", a path relative to the folder designated by the basedir attribute (line 1). This is therefore the path "./src", where . here refers to the folder <examples>/hibernate/direct/people-entities. The Java source code is indeed located in the <people-entities>/src folder (see [6] above).

- Line 10: defines a variable named "lib.dir" which, later in the script, will refer to the folder containing the JAR files required by the script’s Java tasks. Its value ../../../lib refers to the <examples>/lib folder (see [3] above).

- Line 11: defines a variable named "build.dir" which, later in the script, will refer to the folder where the .class files generated from compiling the .java sources must be placed. Its value "bin" refers to the <personnes-entites>/bin folder. We have already explained that in the Eclipse project we studied, the <bin> folder was where the .class files were generated. Ant will do the same.

- Lines 14–18: The <path> tag is used to define elements of the classpath that the Ant tasks will use. Here, the path "project.classpath" (the name is arbitrary) includes all the .jar files in the <examples>/lib directory tree.

- Lines 21–24: The <patternset> tag is used to designate a set of files using naming patterns. Here, the patternset named conf refers to all files with the .xml or .properties extension. This patternset will be used to refer to the .xml and .properties files in the <src> folder (persistence.xml, log4j.properties) (see [6]), which are application configuration files. When certain tasks are executed, these files must be copied to the <bin> folder so that they are in the project’s classpath. We will then use the conf patternset to reference them.

- Lines 27–30: The <target> tag denotes a task in the script. This is the first one we encounter. Everything that preceded this pertains to the configuration of the Ant script’s execution environment. The task is called clean. It runs in two steps: the <bin> folder is deleted (line 28) and then recreated (line 29).

- Lines 33–35: The compile task, which is the script’s default task (line 1). It depends (depends attribute) on the clean task. This means that before executing the compile task, Ant must execute the clean task, i.e., clean the <bin> folder. The purpose of the compile task here is to compile the Java source files in the <src> folder.

- Line 34: Call to the Java compiler with three parameters:

- srcdir: the folder containing the Java source files, here the <src> folder

- destdir: the folder where the generated .class files should be stored, here the <bin> folder

- classpathref: the classpath to use for compilation, here all the JAR files in the <lib> directory tree

- (continued)

- lines 38–45: the copyconf task, whose purpose is to copy all .xml and .properties files from the <src> directory into the <bin> directory.

- line 48: definition of a task using the <taskdef> tag. Such a task is intended to be reused elsewhere in the script. This is a coding convenience. Because the task is used in various places in the script, it is defined once with the <taskdef> tag and then reused via its name when needed.

- The task is called hibernatetool (name attribute).

- Its class is defined by the classname attribute. Here, the specified class will be found in the [hibernate-tools.jar] archive we mentioned earlier.

- The classpathref attribute tells Ant where to look for the preceding class

- (continued)

- Lines 51–60 pertain to the task of interest here: generating the database schema for the @Entity objects in our Eclipse project.

- Line 51: The task is called DDL (short for Data Definition Language, the SQL used to create database objects). It depends on the compile and copyconf tasks, in that order. The DDL task will therefore trigger, in order, the execution of the clean, compile, and copyconf tasks. When the DDL task starts, the <bin> folder contains the .class files generated from the .java sources, notably the @Entity objects, as well as the [META-INF/persistence.xml] file that configures the JPA/Hibernate layer.

- Lines 53–59: The [hibernatetool] task defined on line 48 is called. It is passed numerous parameters, in addition to those already defined on line 48:

- Line 53: The output directory for the results produced by the task will be the current directory.

- Line 54: The task’s classpath will be the <bin> folder.

- Line 56: tells the [hibernatetool] task how to determine its runtime environment: the <jpaconfiguration/> tag indicates that it is in a JPA environment and that it must therefore use the [META-INF/persistence.xml] file, which it will find here in its classpath.

- Line 58 sets the conditions for generating the database: drop=true indicates that SQL drop table statements must be issued before the tables are created; create=true indicates that the text file containing the SQL statements for creating the database must be created; outputfilename specifies the name of this SQL file—here schema.sql in the <ddl> folder of the Eclipse project; export=false indicates that the generated SQL statements must not be executed in a connection to the DBMS. This point is important: it means that the target DBMS does not need to be running to execute the task. delimiter sets the character that separates two SQL statements in the generated schema, and format=true requests that basic formatting be applied to the generated text.

- Lines 51–60 pertain to the task of interest here: generating the database schema for the @Entity objects in our Eclipse project.

- (continued)

- Lines 63–72 define the task named BD. It is identical to the previous DDL task, except that this time it generates the database (export="true" on line 70). The task opens a connection to the DBMS using the information found in [persistence.xml], to execute the SQL schema and generate the database. To run the BD task, the DBMS must therefore be running.

2.1.7. Running the ant DDL task

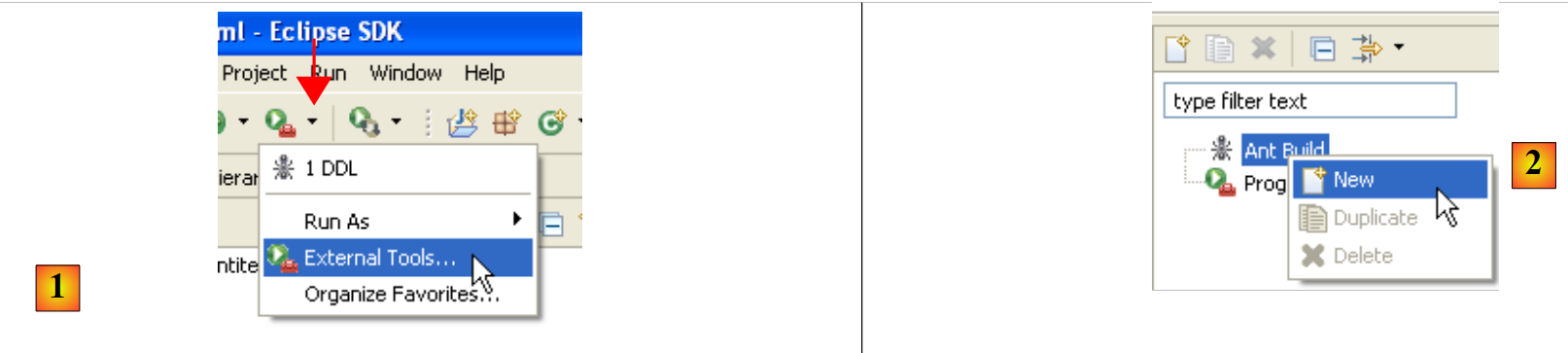

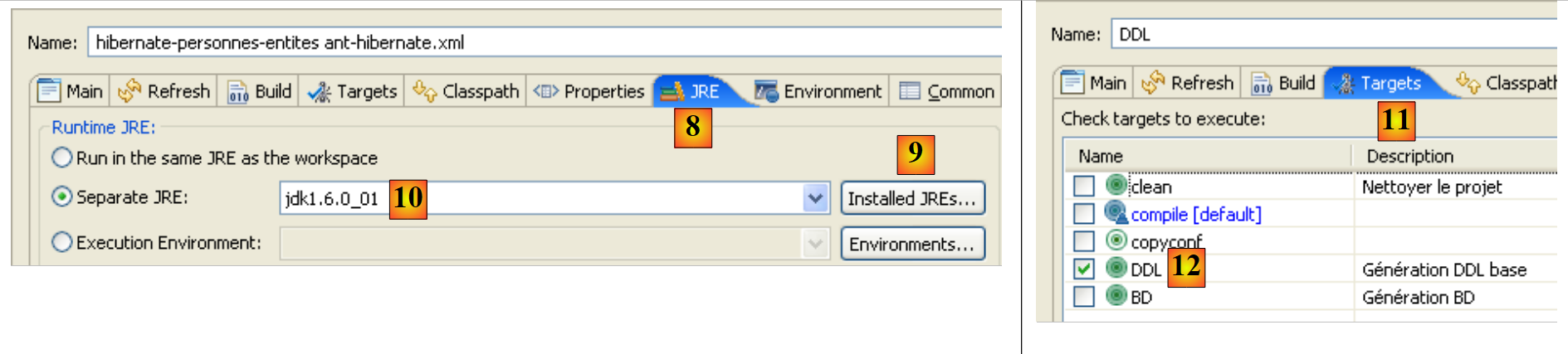

To run the [ant-hibernate.xml] script, we first need to make a few configurations within Eclipse.

|

- in [1]: select [External Tools]

- in [2]: create a new Ant configuration

|

- in [3]: name the Ant configuration

- In [5]: Specify the Ant script using the [4] button

- Step [6]: Apply the changes

- in [7]: the DDL Ant configuration has been created

|

|

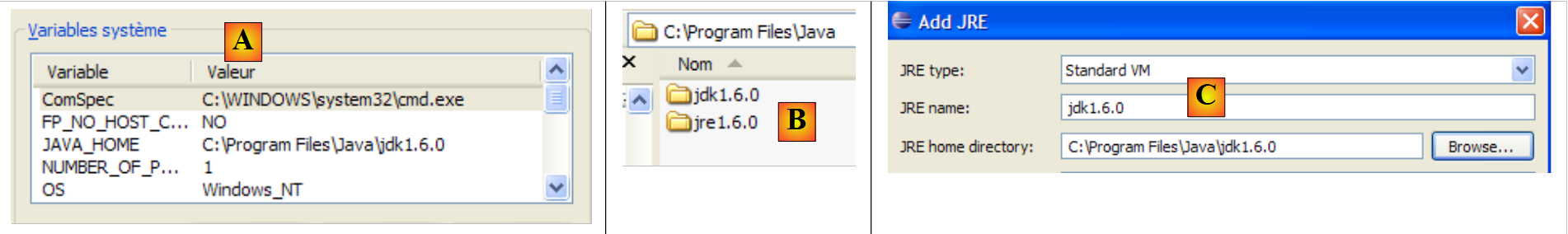

- in [8]: in the JRE tab, define the JRE to use. Field [10] is normally pre-filled with the JRE used by Eclipse. Therefore, there is usually nothing to do in this panel. However, I encountered a case where the Ant script could not find the <javac> compiler. This compiler is not located in a JRE (Java Runtime Environment) but in a JDK (Java Development Kit). Eclipse’s Ant tool locates this compiler via the JAVA_HOME environment variable (Start / Control Panel / Performance and Maintenance / System / Advanced tab / Environment Variables button) [A]. If this variable has not been defined, you can allow Ant to find the <javac> compiler by specifying a JDK instead of a JRE in [10]. The JDK is available in the same folder as the JRE [B]. Use button [9] to register the JDK among the available JREs [C] so that you can then select it in [10].

- In [12]: In the [Targets] tab, select the DDL task. Thus, the Ant configuration we named DDL [7] will correspond to the execution of the task named DDL [12], which, as we know, generates the DDL schema for the database representing the application’s @Entity objects.

|

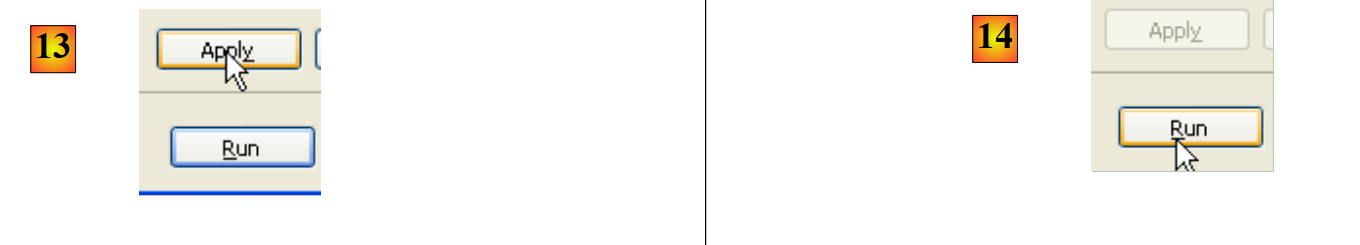

- in [13]: validate the configuration

- In [14]: Run it

In the [Console] view, you will see logs from the execution of the DDL Ant task:

Buildfile: C:\data\2006-2007\eclipse\dvp-jpa\hibernate\direct\personnes-entites\ant-hibernate.xml

clean:

[delete] Deleting directory C:\data\2006-2007\eclipse\dvp-jpa\hibernate\direct\personnes-entites\bin

[mkdir] Created dir: C:\data\2006-2007\eclipse\dvp-jpa\hibernate\direct\personnes-entites\bin

compile:

[javac] Compiling 3 source files to C:\data\2006-2007\eclipse\dvp-jpa\hibernate\direct\personnes-entites\bin

copyconf:

[copy] Copying 2 files to C:\data\2006-2007\eclipse\dvp-jpa\hibernate\direct\personnes-entites\bin

DDL:

[hibernatetool] Executing Hibernate Tool with a JPA Configuration

[hibernatetool] 1. task: hbm2ddl (Generates database schema)

[hibernatetool] drop table if exists jpa01_personne;

[hibernatetool] create table jpa01_personne (

[hibernatetool] ID integer not null auto_increment,

[hibernatetool] VERSION integer not null,

[hibernatetool] NOM varchar(30) not null unique,

[hibernatetool] PRENOM varchar(30) not null,

[hibernatetool] DATENAISSANCE date not null,

[hibernatetool] MARIE bit not null,

[hibernatetool] NBENFANTS integer not null,

[hibernatetool] primary key (ID)

[hibernatetool] ) ENGINE=InnoDB;

BUILD SUCCESSFUL

Total time: 5 seconds

- Recall that the DDL task is named [hibernatetool] (line 10) and depends on the tasks clean (line 2), compile (line 5), and copyconf (line 7).

- Line 10: The [hibernatetool] task uses the [persistence.xml] file from a JPA configuration

- line 11: the [hbm2ddl] task will generate the database DDL schema

- Lines 12–22: the database DDL schema

Recall that we instructed the [hbm2ddl] task to generate the DDL schema in a specific location:

<hbm2ddl drop="true" create="true" export="true" outputfilename="ddl/schema.sql" delimiter=";" format="true" />

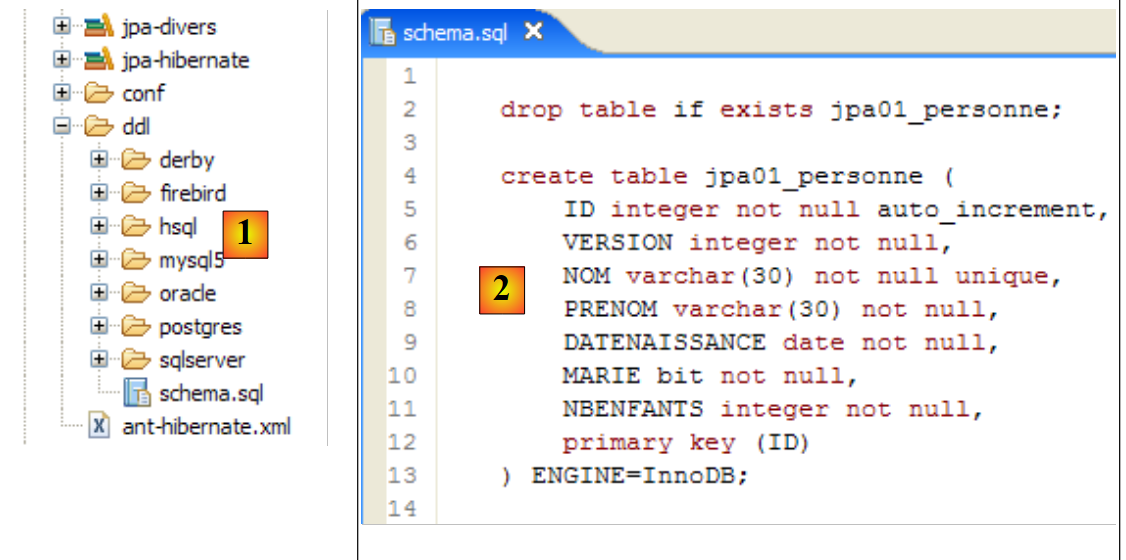

- line 74: the schema must be generated in the file ddl/schema.sql. Let’s check:

|

- in [1]: the ddl/schema.sql file is indeed present (press F5 to refresh the directory tree)

- in [2]: its contents. This is the schema for a MySQL5 database. The [persistence.xml] configuration file for the JPA layer did indeed specify a MySQL5 DBMS (line 8 below):

<!-- connexion JDBC -->

<property name="hibernate.connection.driver_class" value="com.mysql.jdbc.Driver" />

...

<!-- création automatique du schéma -->

<property name="hibernate.hbm2ddl.auto" value="create" />

<!-- Dialecte -->

<property name="hibernate.dialect" value="org.hibernate.dialect.MySQL5InnoDBDialect" />

<!-- propriétés DataSource c3p0 -->

...

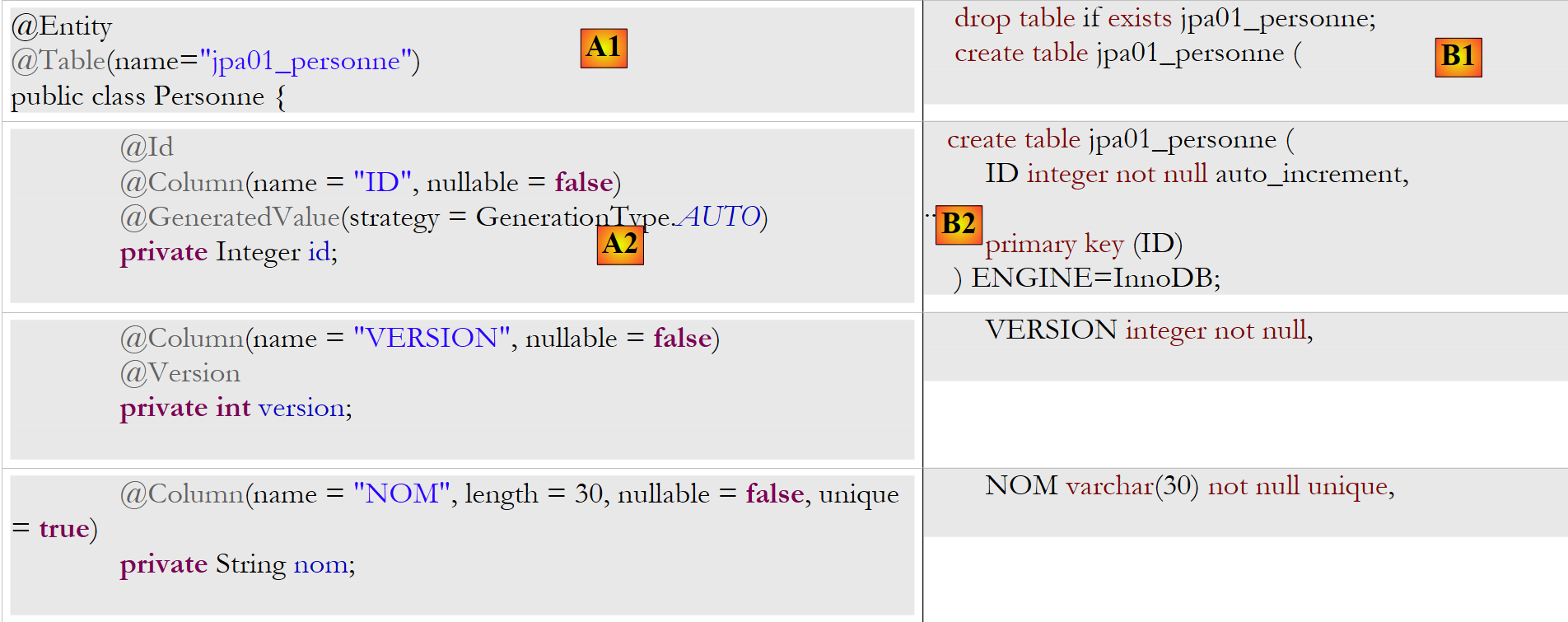

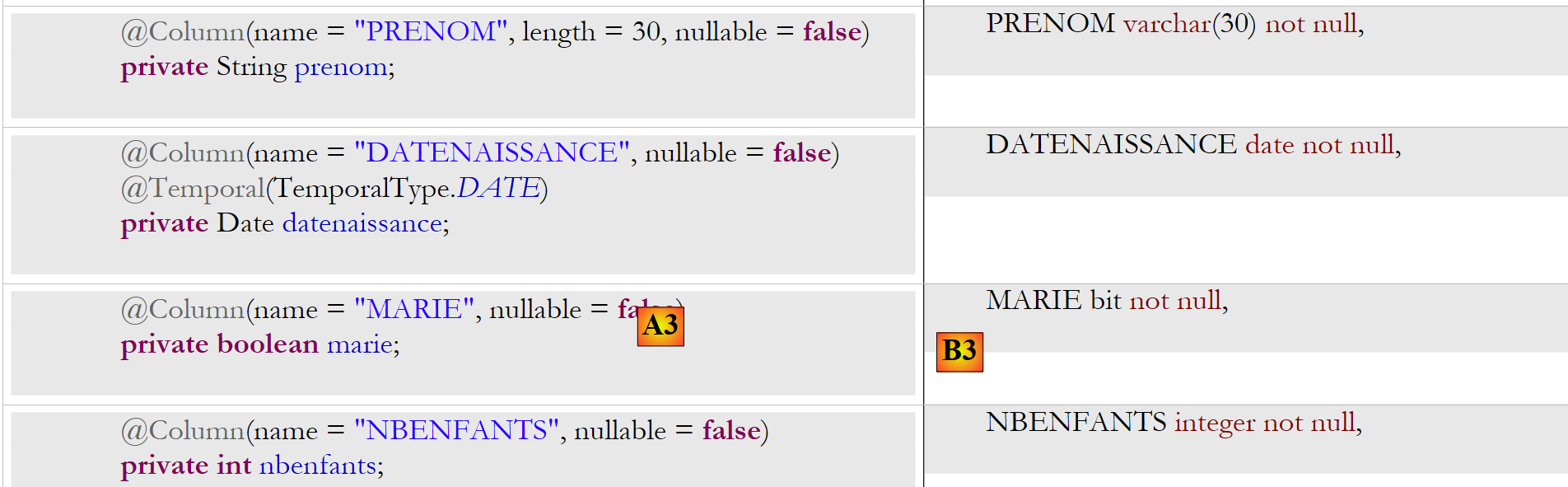

Let’s examine the object-relational mapping implemented here by looking at the configuration of the @Entity Person object and the generated DDL schema:

|

|

A few points are worth noting:

- A1-B1: The table name specified in A1 is indeed the one used in B1. Note the `DROP` statement preceding the `CREATE` in B1.

- A2-B2: show how the primary key is generated. The AUTO mode specified in A2 resulted in the autoincrement attribute specific to MySQL5. The primary key generation mode is most often specific to the DBMS.

- A3-B3: show the SQL bit type specific to MySQL 5 used to represent a Java boolean type.

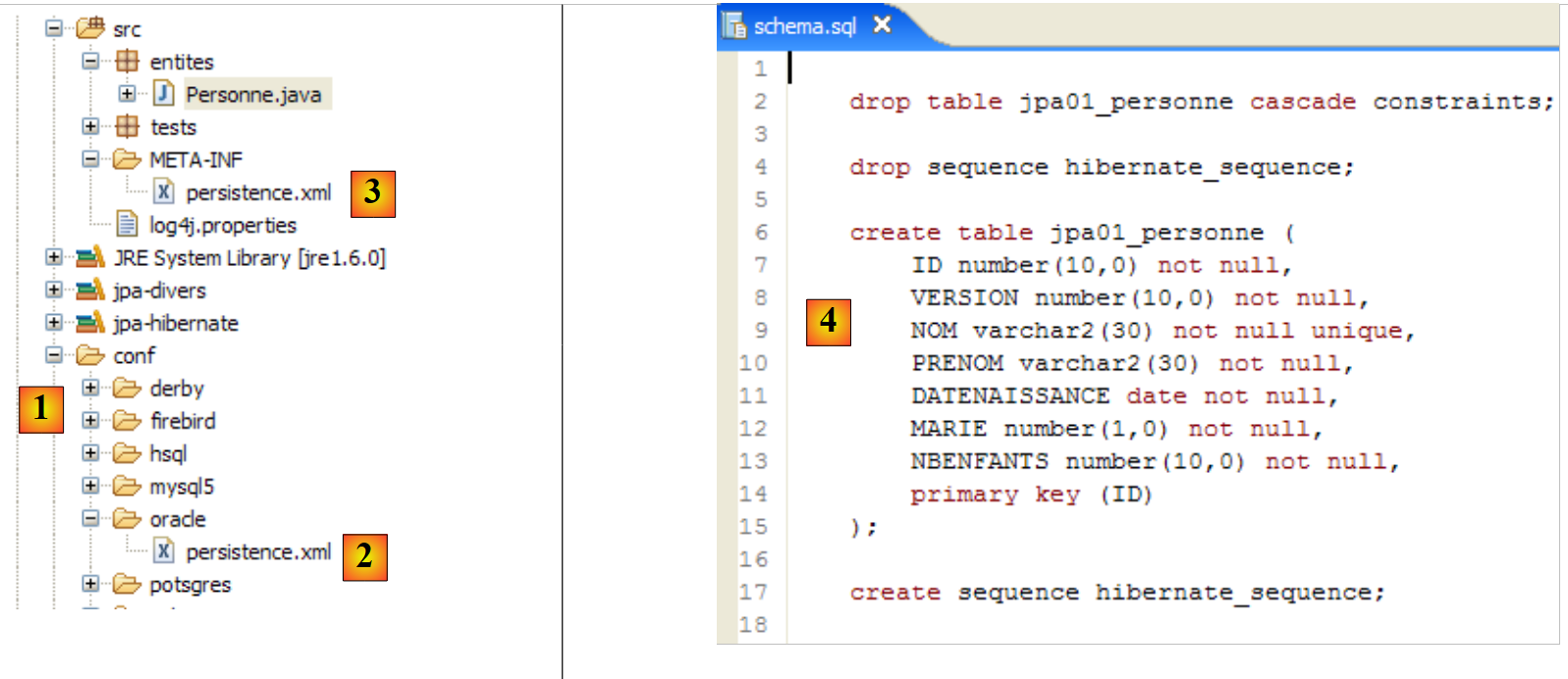

Let’s repeat this test with another DBMS:

|

- the [conf] folder [1] contains [persistence.xml] files for various DBMSs. Take the Oracle one [2], for example, and place it in the [META-INF] folder [3] in place of the previous one. Its contents are as follows:

<?xml version="1.0" encoding="UTF-8"?>

<persistence version="1.0" xmlns="http://java.sun.com/xml/ns/persistence">

<persistence-unit name="jpa" transaction-type="RESOURCE_LOCAL">

<!-- provider -->

<provider>org.hibernate.ejb.HibernatePersistence</provider>

<properties>

<!-- Persistent classes -->

<property name="hibernate.archive.autodetection" value="class, hbm" />

<!-- logs SQL

<property name="hibernate.show_sql" value="true"/>

<property name="hibernate.format_sql" value="true"/>

<property name="use_sql_comments" value="true"/>

-->

<!-- connection JDBC -->

<property name="hibernate.connection.driver_class" value="oracle.jdbc.OracleDriver" />

<property name="hibernate.connection.url" value="jdbc:oracle:thin:@localhost:1521:xe" />

<property name="hibernate.connection.username" value="jpa" />

<property name="hibernate.connection.password" value="jpa" />

<!-- automatic schematic creation -->

<property name="hibernate.hbm2ddl.auto" value="create" />

<!-- Dialect -->

<property name="hibernate.dialect" value="org.hibernate.dialect.OracleDialect" />

<!-- properties DataSource c3p0 -->

<property name="hibernate.c3p0.min_size" value="5" />

<property name="hibernate.c3p0.max_size" value="20" />

<property name="hibernate.c3p0.timeout" value="300" />

<property name="hibernate.c3p0.max_statements" value="50" />

<property name="hibernate.c3p0.idle_test_period" value="3000" />

</properties>

</persistence-unit>

</persistence>

Readers are encouraged to consult the appendix, specifically the section on Oracle (Section 5.7), particularly to understand the JDBC configuration.

Only line 25 is truly important here: we are telling Hibernate that the DBMS is now an Oracle DBMS. Executing the ant DDL task yields the result [4] shown above. Note that the Oracle schema differs from the MySQL5 schema. This is a key strength of JPA: the developer does not need to worry about these details, which significantly increases the portability of their applications.

2.1.8. Executing the " " Ant task

You may recall that the Ant task named BD does the same thing as the *DDL* task but also generates the database. The DBMS must therefore be running. We will use the MySQL5 DBMS and invite the reader to copy the file [conf/mysql5/persistence.xml] into the [src/META-INF] folder. To verify that the task is working, we will use the SQL Explorer plugin (see Section 5.2.6) to check the status of the JPA database before and after running the ant BD task.

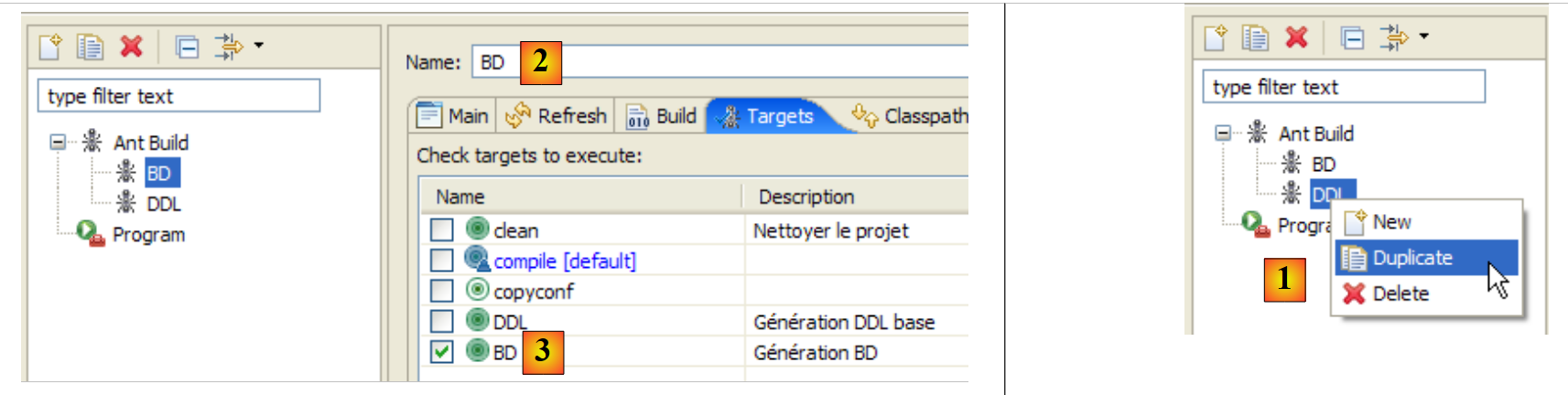

First, we need to create a new Ant configuration to run the BD task. The reader is invited to follow the procedure outlined for the DDL Ant configuration in section 2.1.7. The new Ant configuration will be named BD:

|

- in [1]: we duplicate the previous configuration named DDL

- in [2]: name the new configuration BD. It executes the ant BD task [3], which physically generates the database.

- Once this is done, launch the MySQL5 DBMS (Section 5.5).

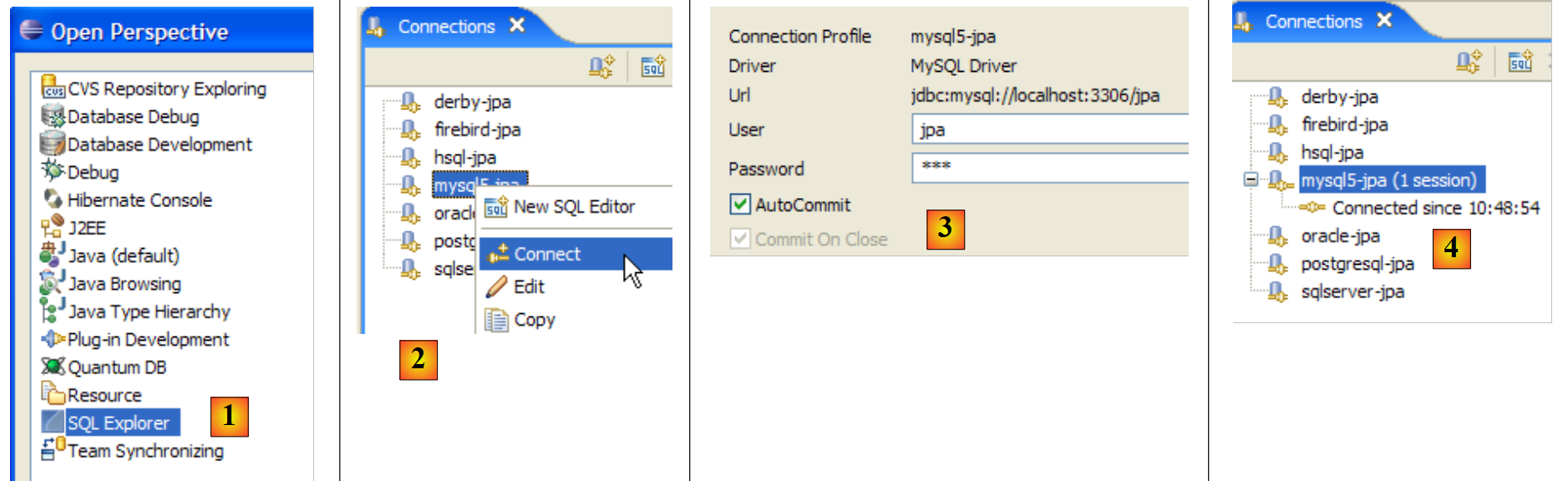

We now use the SQL Explorer plugin to explore the databases managed by the DBMS. The reader should familiarize themselves with this plugin beforehand if necessary (see section 5.2.6).

|

- [1]: Open the SQL Explorer perspective [Window / Open Perspective / Other]

- [2]: If necessary, create a connection [mysql5-jpa] (see section 5.5.5, page 252) and open it

- [3]: Log in as jpa / jpa

- [4]: You are now connected to MySQL5.

|

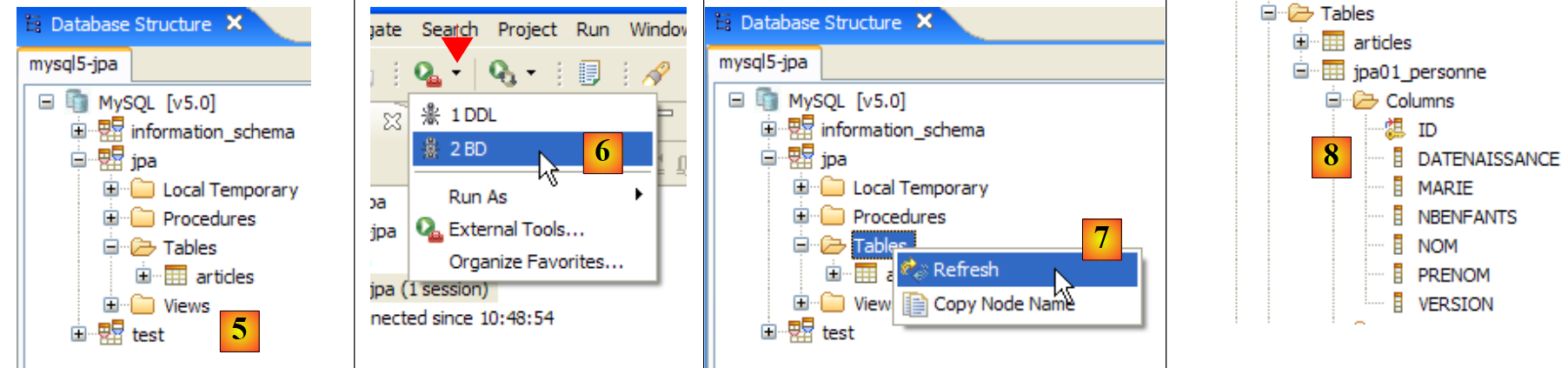

- In [5]: The jpa database has only one table: [articles]

- in [6]: Run the Ant DB task. Because you are in the [SQL Explorer] perspective, you cannot see the [Console] view, which displays the task logs. You can display this view [Window / Show View / ...] or return to the Java perspective [Window / Open Perspective / ...].

- in [7]: once the DB task is complete, return to the [SQL Explorer] perspective if necessary and refresh the JPA database tree.

- In [8]: You can see the [jpa01_personne] table that was created.

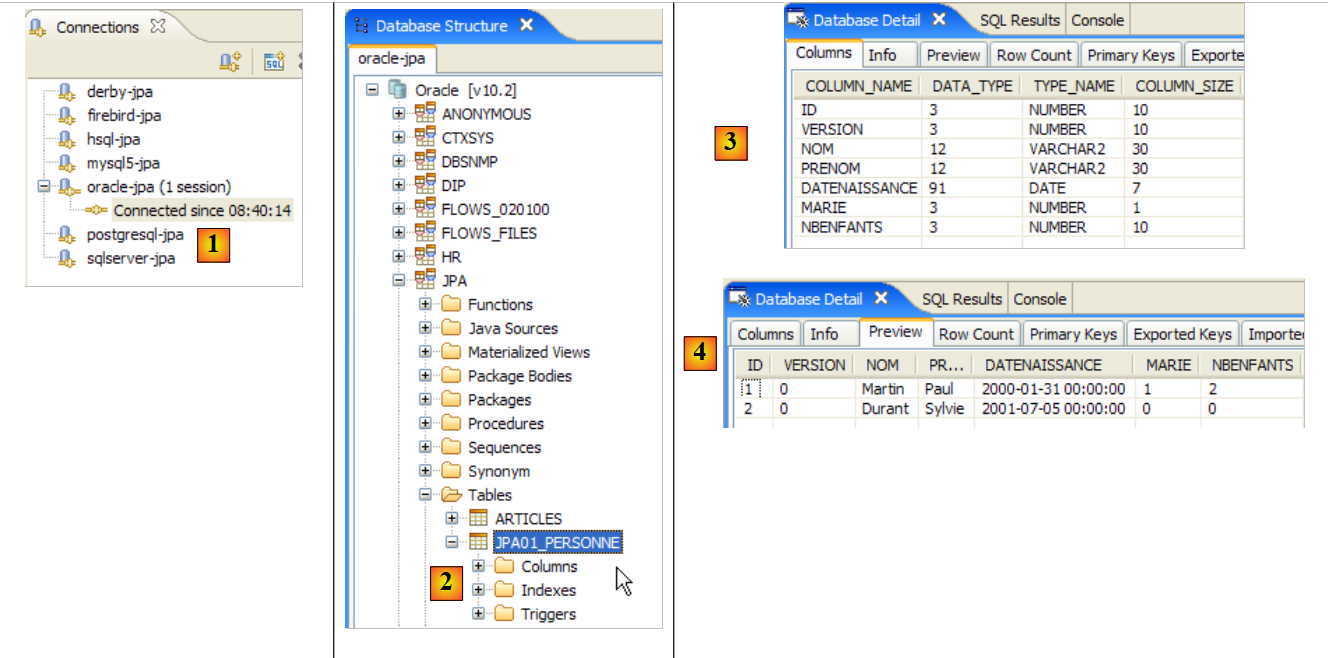

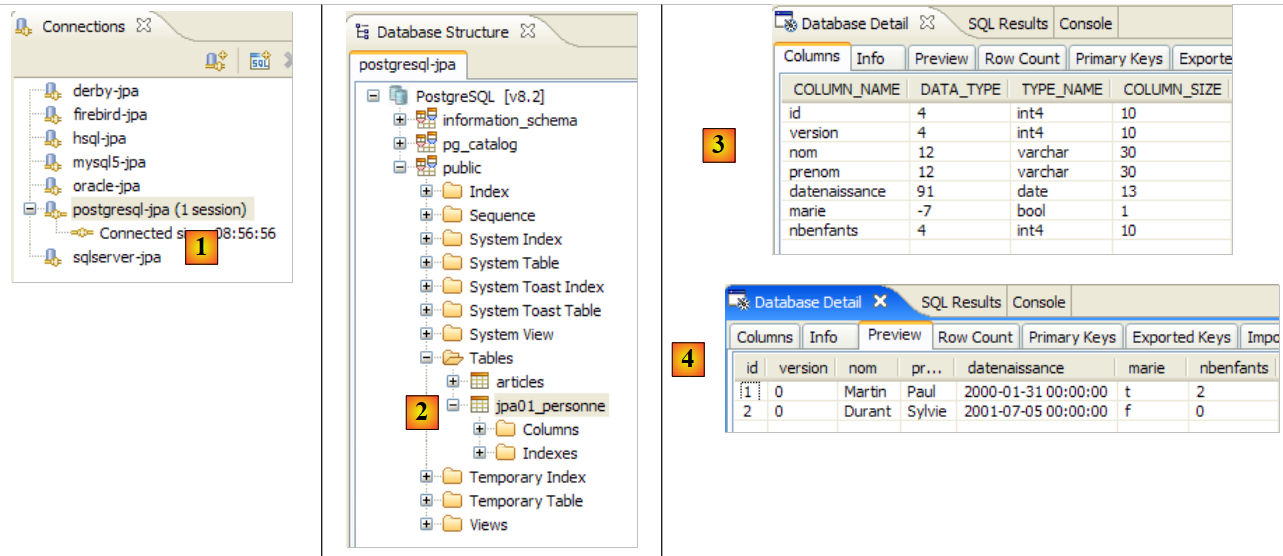

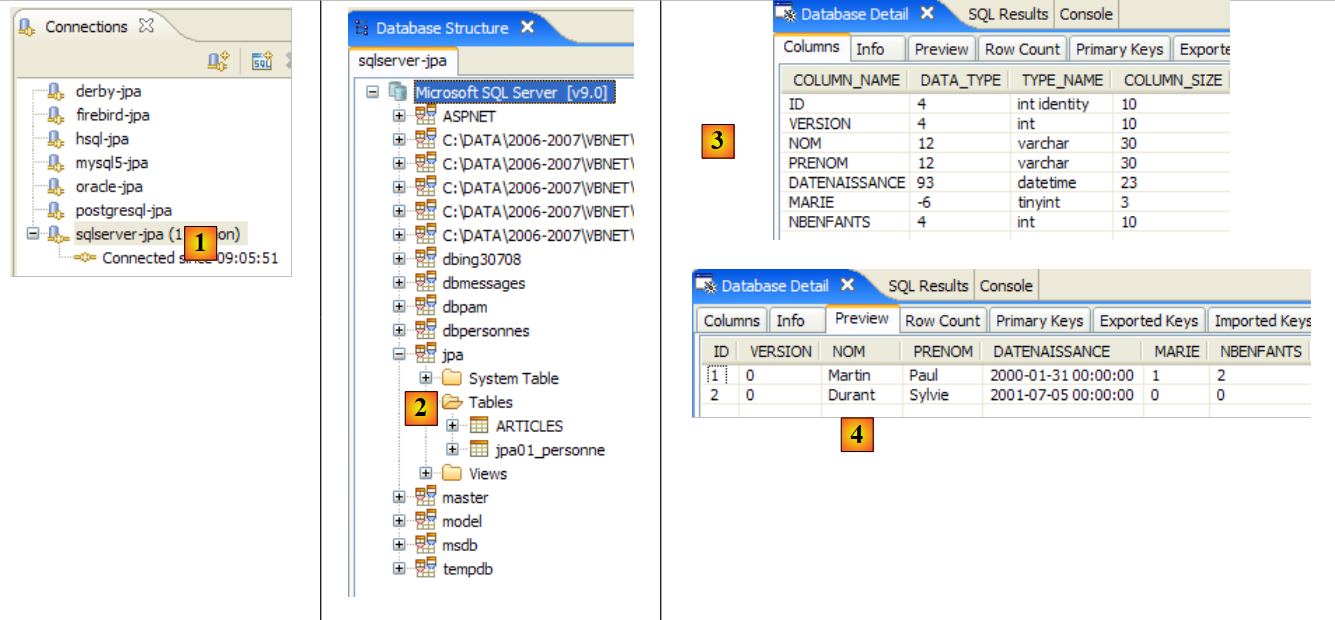

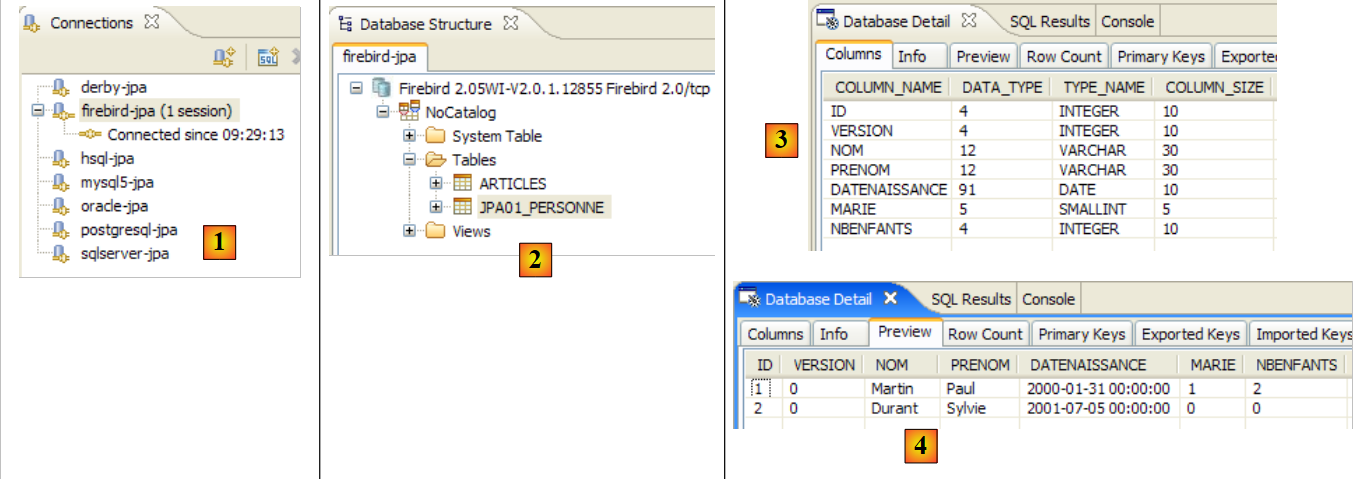

Readers are encouraged to repeat this database generation process with other DBMSs. The procedure is as follows:

- Copy the file [conf/<dbms>/persistence.xml] to the [src/META-INF] folder, where <dbms> is the DBMS being tested

- launch <dbms> by following the instructions in the appendix for that DBMS

- in the SQL Explorer view, create a connection to <dbms>. This is also explained in the appendices for each DBMS

- Repeat the previous tests

At this point, we have gained a number of insights:

- We have a better understanding of the object-relational bridge concept. Here, it was implemented using Hibernate. We will use TopLink later.

- We know that this object-relational bridge is configured in two places:

- in the @Entity objects, where we specify the relationships between object fields and database table columns

- in [META-INF/persistence.xml], where we provide the JPA implementation with information about the two components of the object-relational bridge: the @Entity objects (object) and the database (relational).

- We have created two Ant tasks, named DDL and DB, that allow us to create the database based on the previous configuration, even before writing any Java code.

Now that the JPA layer of our application is properly configured, we can begin exploring the JPA API with Java code.

2.1.9. s an application's persistence context

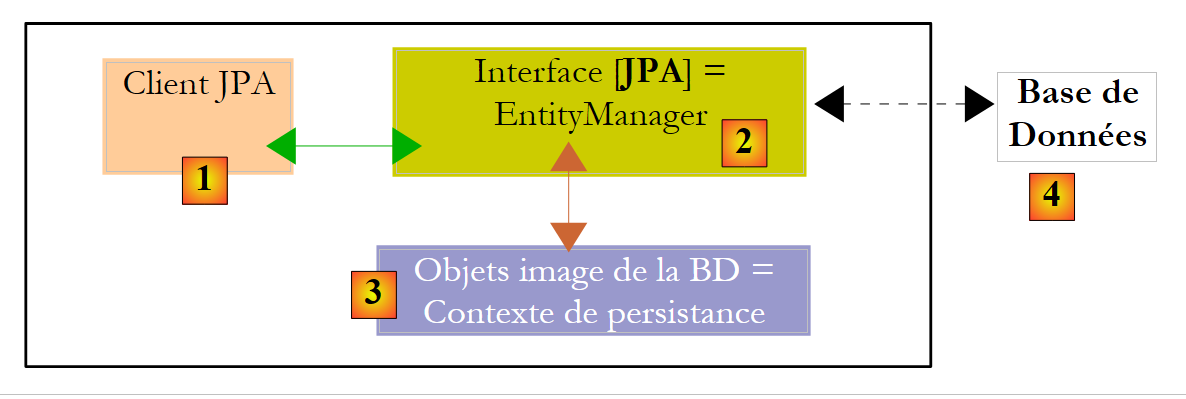

Let’s take a closer look at the runtime environment of a JPA client:

|

We know that the JPA layer [2] creates a bridge between objects [3] and relational data [4]. The "persistence context" refers to the set of objects managed by the JPA layer within this object-relational bridge. To access data in the persistence context, a JPA client [1] must go through the JPA layer [2]:

- it can create an object and ask the JPA layer to make it persistent. The object then becomes part of the persistence context.

- it can request a reference to an existing persistent object from the [JPA] layer.

- it can modify a persistent object obtained from the JPA layer.

- it can ask the JPA layer to remove an object from the persistence context.

The JPA layer provides the client with an interface called [EntityManager] which, as its name suggests, allows for the management of @Entity objects in the persistence context. Below are the main methods of this interface:

Adds the entity to the persistence context | |

removes entity from the persistence context | |

merges an entity object from the client that is not managed by the persistence context with the entity object in the persistence context that has the same primary key. The result returned is the entity object from the persistence context. | |

places an object retrieved from the database via its primary key. The type T of the object allows the JPA layer to know which table to query. The persistent object thus created is returned to the client. | |

creates a Query object from a JPQL query (Java Persistence Query Language). A JPQL query is analogous to an SQL query, except that it queries objects rather than tables. | |

A method similar to the previous one, except that queryText is an SQL statement rather than a JPQL query. | |

A method identical to createQuery, except that the JPQL query queryText has been externalized into a configuration file and associated with a name. This name is the method’s parameter. |

An EntityManager object has a lifecycle that is not necessarily the same as that of the application. It has a beginning and an end. Thus, a JPA client can work successively with different EntityManager objects. The persistence context associated with an EntityManager has the same lifecycle as the EntityManager itself. They are inseparable from one another. When an EntityManager object is closed, its persistence context is synchronized with the database if necessary, and then ceases to exist. A new EntityManager must be created to obtain a new persistence context.

The JPA client can create an EntityManager and thus a persistence context with the following statement:

EntityManagerFactory emf = Persistence.createEntityManagerFactory("jpa");

- javax.persistence.Persistence is a static class used to obtain a factory for EntityManager objects. This factory is associated with a specific persistence unit. Recall that the configuration file [META-INF/persistence.xml] is used to define persistence units, each of which has a name:

<persistence-unit name="jpa" transaction-type="RESOURCE_LOCAL">

In the example above, the persistence unit is named jpa. It comes with its own specific configuration, including the database management system (DBMS) it works with. The statement [Persistence.createEntityManagerFactory("jpa")] creates an EntityManagerFactory capable of providing EntityManager objects designed to manage persistence contexts associated with the persistence unit named jpa. An EntityManager object—and thus a persistence context—is obtained from the EntityManagerFactory object as follows:

The following methods of the [EntityManager] interface allow you to manage the lifecycle of the persistence context:

The persistence context is closed. Forces synchronization of the persistence context with the database:

| |

The persistence context is cleared of all its objects but not closed. | |

The persistence context is synchronized with the database as described for close() |

The JPA client can force synchronization of the persistence context with the database using the [EntityManager].flush method. Synchronization can be explicit or implicit. In the first case, it is up to the client to perform flush operations when it wants to synchronize; otherwise, synchronization occurs at specific times that we will specify. The synchronization mode is managed by the following methods of the [EntityManager] interface:

There are two possible values for flushMode: FlushModeType.AUTO (default): synchronization occurs before each SELECT query made on the database. FlushModeType.COMMIT: synchronization occurs only at the end of database transactions. | |

returns the current synchronization mode |

Let’s summarize. In FlushModeType.AUTO mode, which is the default, the persistence context will be synchronized with the database at the following times:

- before each SELECT operation on the database

- at the end of a transaction on the database

- following a flush or close operation on the persistence context

In FlushModeType.COMMIT mode, the same applies except for operation 1, which does not occur. The normal mode of interaction with the JPA layer is transactional mode. The client performs various operations on the persistence context within a transaction. In this case, the synchronization points between the persistence context and the database are cases 1 and 2 above in AUTO mode, and case 2 only in COMMIT mode.

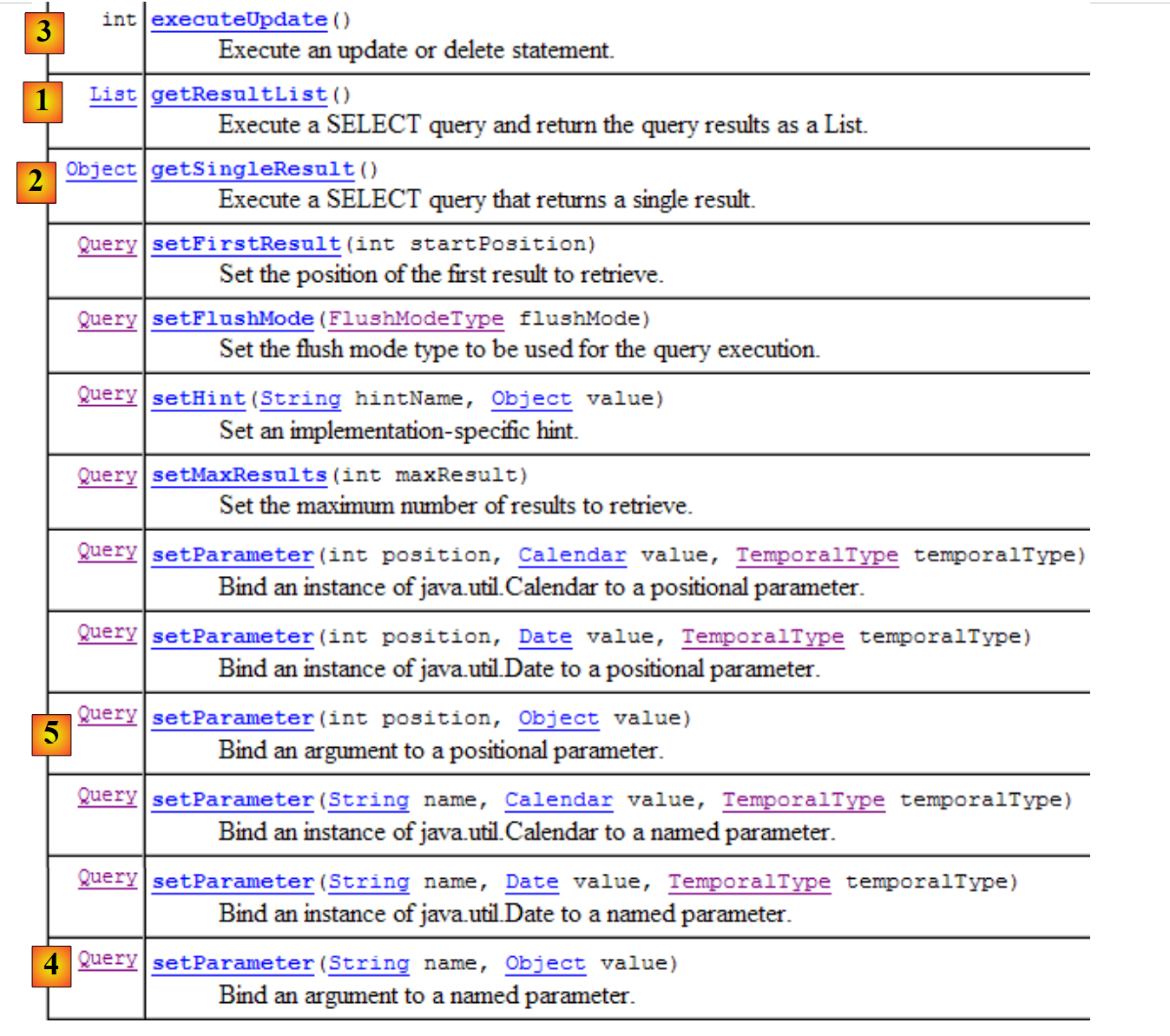

Let’s conclude with the Query interface API, which allows you to issue JPQL commands on the persistence context or SQL commands directly on the database to retrieve data. The Query interface is as follows:

|

We will use methods 1 through 4 above:

- 1 - The getResultList method executes a SELECT query that returns multiple objects. These are returned in a List object. This object is an interface. It provides an Iterator object that allows you to iterate through the elements of the list L as follows:

Iterator iterator = L.iterator();

while (iterator.hasNext()) {

// exploiter l'objet iterator.next() qui représente l'élément courant de la liste

...

}

The list L can also be iterated over using a for loop:

for (Object o : L) {

// exploiter objet o

}

- 2 - The getSingleResult method executes a JPQL/SQL SELECT statement that returns a single object.

- 3 - The `executeUpdate` method executes an SQL UPDATE or DELETE statement and returns the number of rows affected by the operation.

- 4 - The setParameter(String, Object) method allows you to assign a value to a named parameter in a parameterized JPQL query.

- 5 - The setParameter(int, Object) method sets the parameter, but the parameter is identified not by its name but by its position in the JPQL query.

2.1.10. A First JPA Client

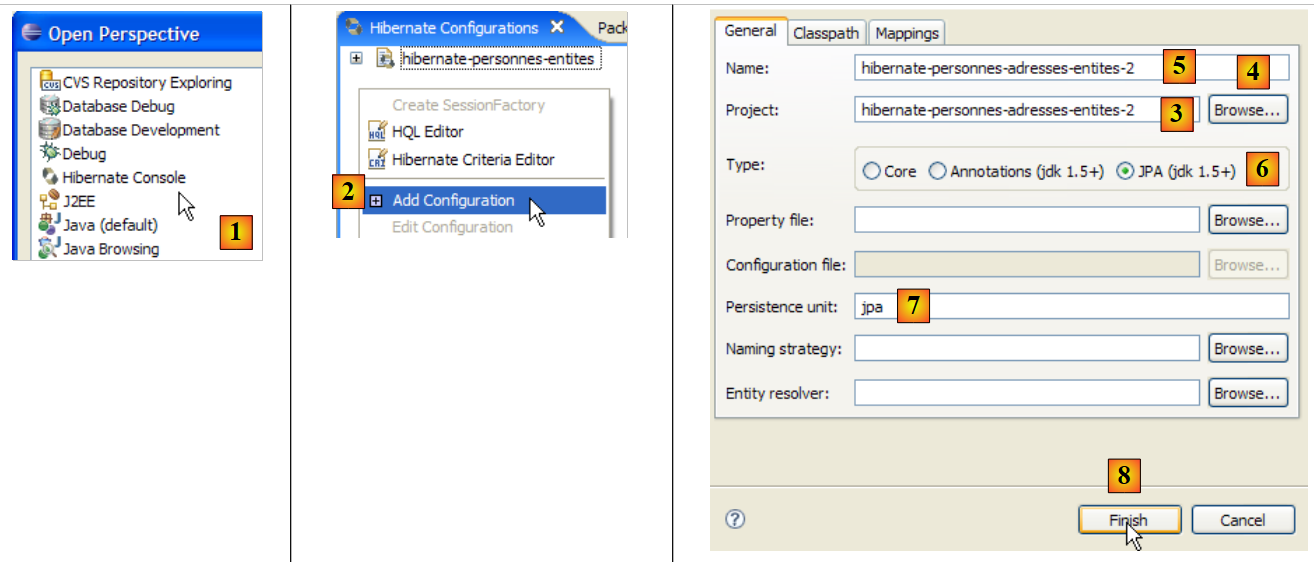

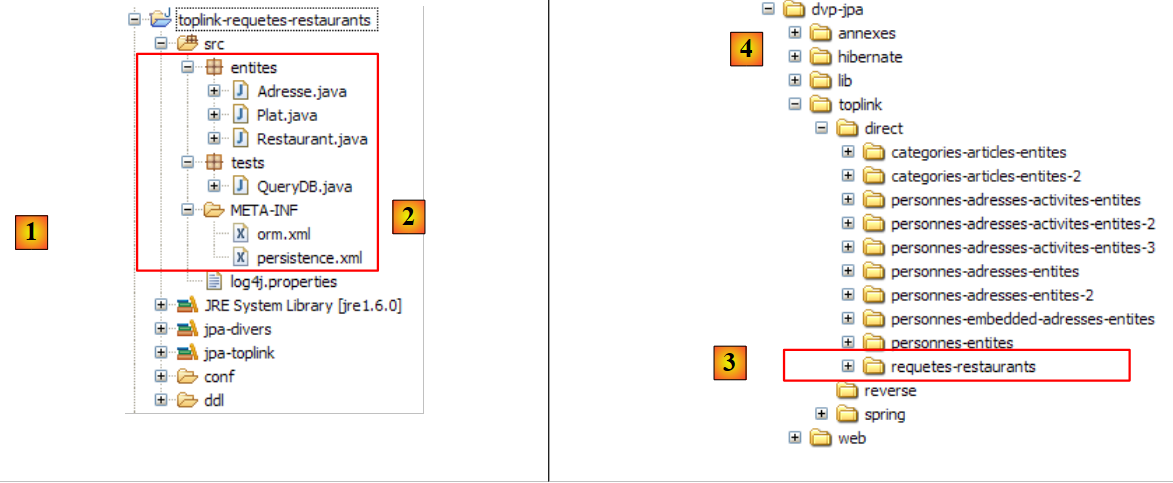

Let’s return to the Java perspective of the project:

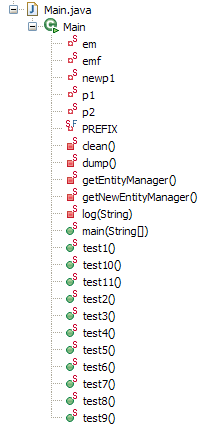

|

We now know almost everything about this project except for the contents of the [src/tests] folder, which we will examine next. The folder contains two test programs for the JPA layer:

- [InitDB.java] is a program that inserts a few rows into the [jpa01_personne] table in the database. Its code will introduce us to the first elements of the JPA layer.

- [Main.java] is a program that performs CRUD operations on the [jpa01_personne] table. Studying its code will allow us to explore the fundamental concepts of the persistence context and the lifecycle of objects within that context.

2.1.10.1. The code

The code for the [InitDB.java] program is as follows:

package tests;

import java.text.ParseException;

import java.text.SimpleDateFormat;

import javax.persistence.EntityManager;

import javax.persistence.EntityManagerFactory;

import javax.persistence.EntityTransaction;

import javax.persistence.Persistence;

import entites.Personne;

public class InitDB {

// constant

private final static String TABLE_NAME = "jpa01_personne";

public static void main(String[] args) throws ParseException {

// Persistence unit

EntityManagerFactory emf = Persistence.createEntityManagerFactory("jpa");

// retrieve a EntityManagerFactory from the persistence unit

EntityManager em = emf.createEntityManager();

// start of transaction

EntityTransaction tx = em.getTransaction();

tx.begin();

// delete items from the people table

em.createNativeQuery("delete from " + TABLE_NAME).executeUpdate();

// create two people

Personne p1 = new Personne("Martin", "Paul", new SimpleDateFormat("dd/MM/yy").parse("31/01/2000"), true, 2);

Personne p2 = new Personne("Durant", "Sylvie", new SimpleDateFormat("dd/MM/yy").parse("05/07/2001"), false, 0);

// persistence of people

em.persist(p1);

em.persist(p2);

// people display

System.out.println("[personnes]");

for (Object p : em.createQuery("select p from Personne p order by p.nom asc").getResultList()) {

System.out.println(p);

}

// end transaction

tx.commit();

// end EntityManager

em.close();

// end EntityManagerFactory

emf.close();

// log

System.out.println("terminé ...");

}

}

This code should be read in light of what was explained in section 2.1.9.

- Line 19: An EntityManagerFactory (emf) object is requested for the JPA persistence unit (defined in persistence.xml). This operation is normally performed only once during the lifetime of an application.

- line 21: an EntityManager (em) object is requested to manage a persistence context.

- line 23: a Transaction object is requested to manage a transaction. Note that operations on the persistence context must be performed within a transaction. We will see that this is not strictly required, but failing to do so can lead to problems. If the application runs in an EJB3 container, operations on the persistence context are always performed within a transaction.

- Line 24: The transaction begins

- line 26: executes a delete SQL statement on the "jpa01_personne" table (nativeQuery). We do this to clear the table of all content and thus better see the result of the application's execution [InitDB]

- Lines 28–29: Two Person objects, p1 and p2, are created. These are ordinary objects and, for now, have nothing to do with the persistence context. In relation to the persistence context, Hibernate refers to these objects as being in a transient state, as opposed to persistent objects, which are managed by the persistence context. We will instead refer to non-persistent objects (a non-standard term) to indicate that they are not yet managed by the persistence context, and to persistent objects for those that are managed by it. We will encounter a third category of objects: detached objects, which are objects that were previously persistent but whose persistence context has been closed. The client may hold references to such objects, which explains why they are not necessarily destroyed when the persistence context is closed. They are then said to be in a detached state. The [EntityManager].merge operation allows them to be reattached to a newly created persistence context.

- Lines 31–32: The entities p1 and p2 are added to the persistence context via the [EntityManager].persist operation. They then become persistent objects.

- Lines 35–37: A JPQL query “select p from Person p order by p.name asc” is executed. Person is not the table (which is named jpa01_person) but the @Entity object associated with the table. Here we have a JPQL (Java Persistence Query Language) query on the persistence context, not an SQL query on the database. That said, apart from the Person object that has replaced the jpa01_personne table, the syntaxes are identical. A for loop iterates through the list (of people) resulting from the select to display each element on the console. Here, we are verifying that the elements placed in the persistence context in lines 31–32 are indeed present in the table. Transparent synchronization of the persistence context with the database will occur. In fact, a SELECT query will be issued, and we noted that this is one of the cases where synchronization occurs. It is therefore at this moment that, in the background, JPA/Hibernate will issue the two SQL INSERT statements that will insert the two people into the jpa01_personne table. The `persist` operation did not do this. This operation adds objects to the persistence context without affecting the database. The actual work happens during synchronization, here just before the `SELECT` query on the database.

- Line 39: We end the transaction started on line 24. A synchronization will take place again. Nothing will happen here since the persistence context has not changed since the last synchronization.

- Line 41: We close the persistence context.

- Line 43: We close the EntityManager factory.

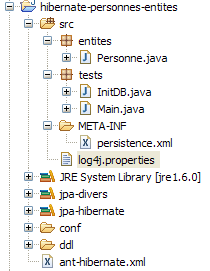

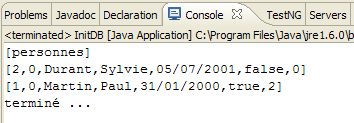

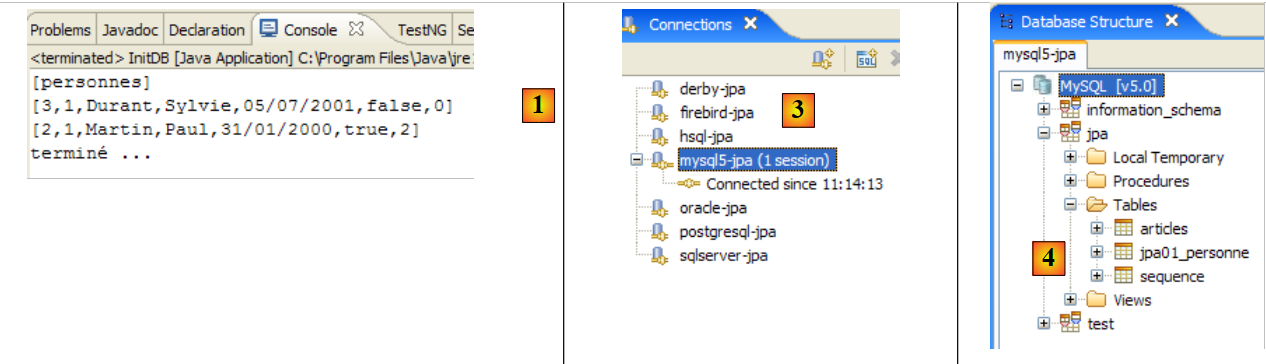

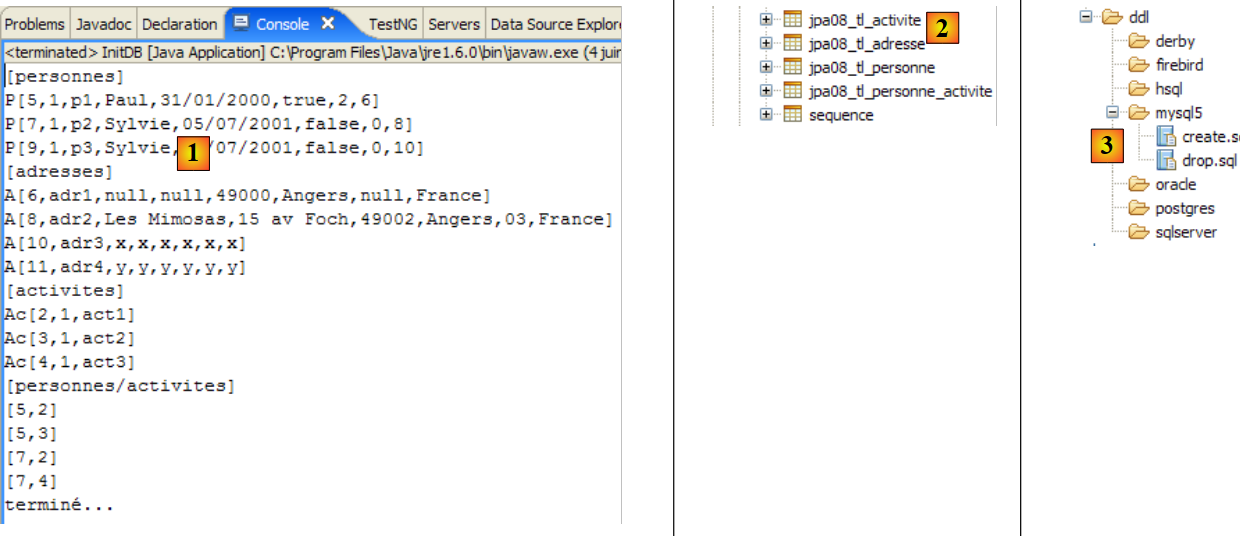

2.1.10.2. The : executing the code

- Start the MySQL5 DBMS

- Place conf/mysql5/persistence.xml in META-INF/persistence.xml if necessary

- Run the [InitDB] application

The following results are obtained:

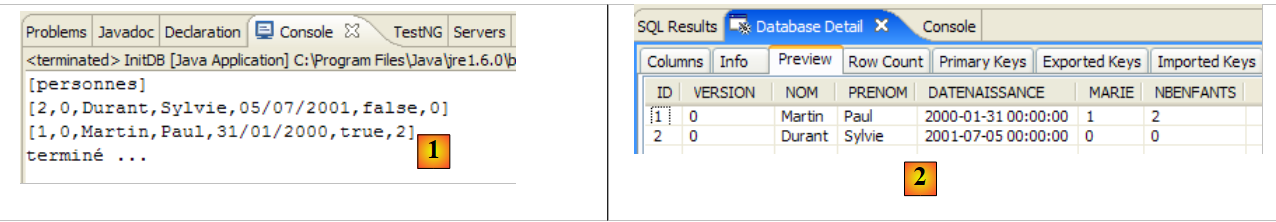

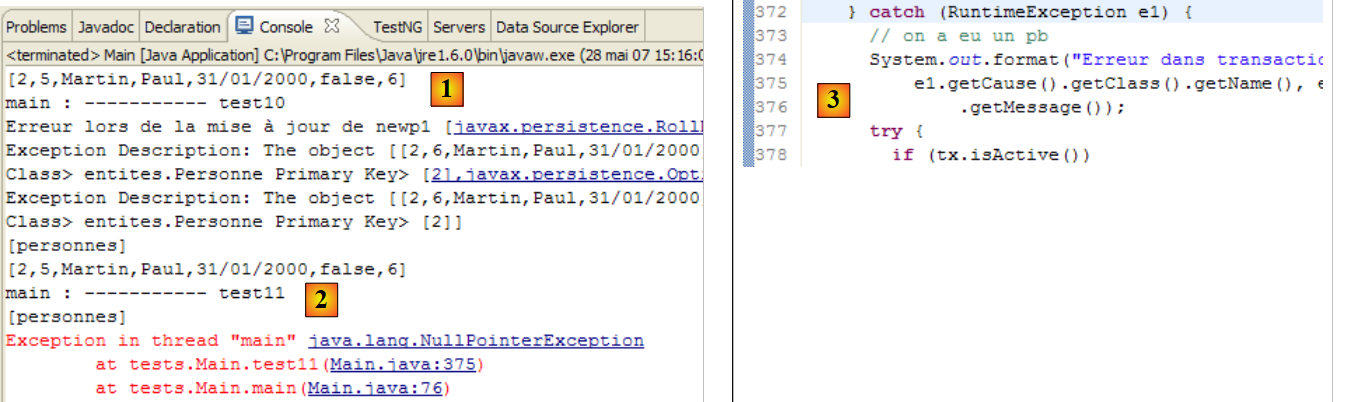

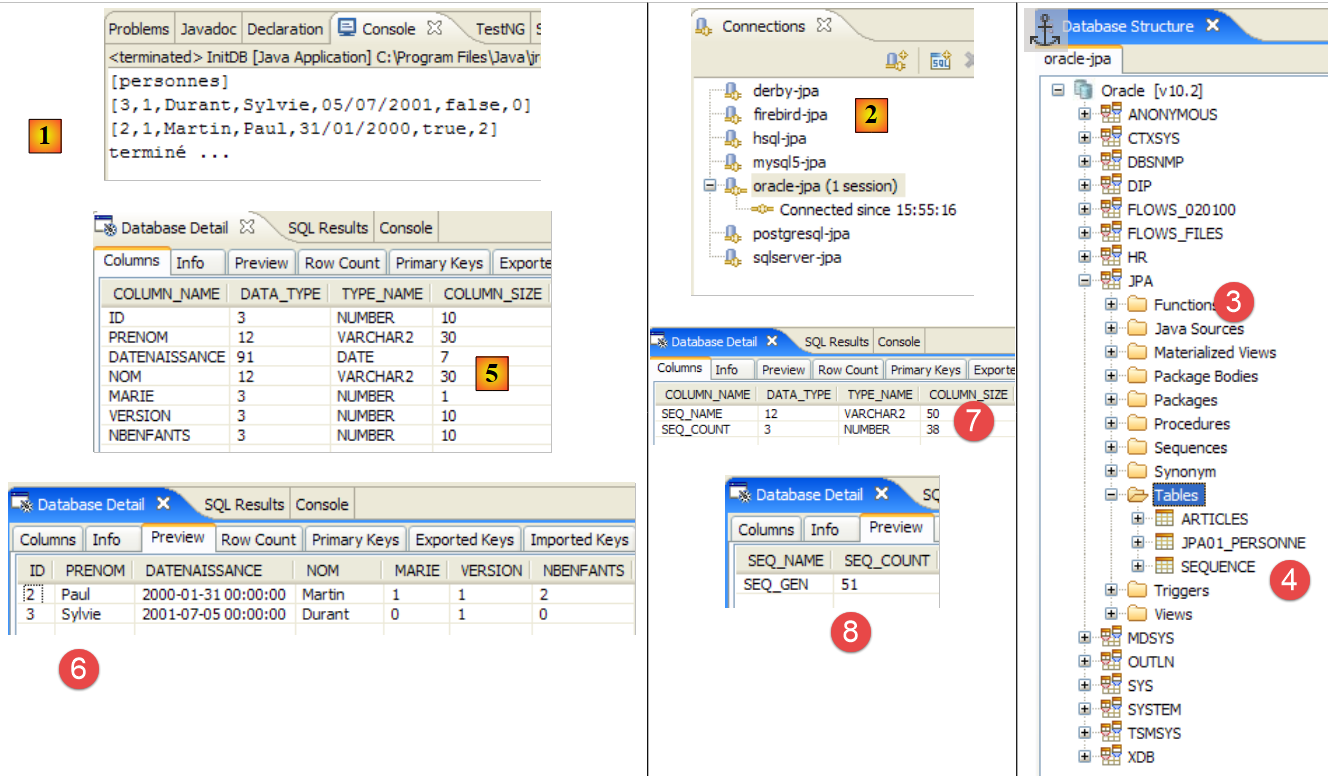

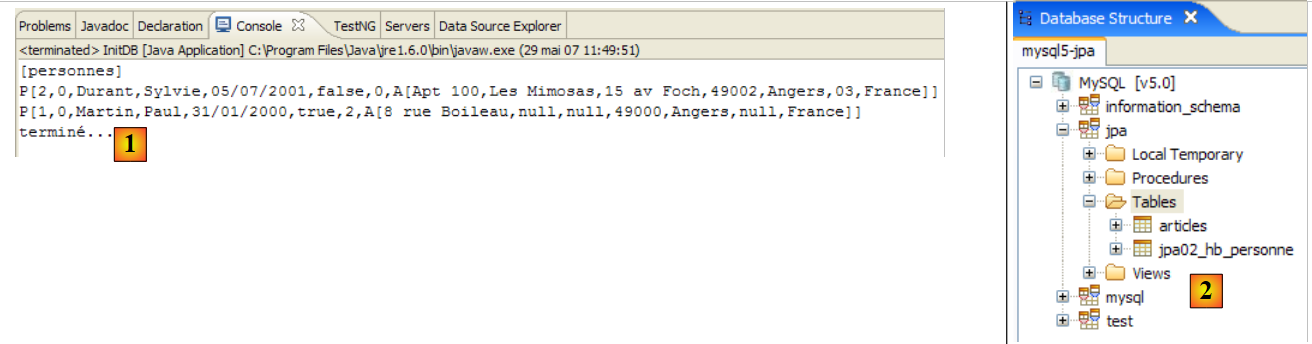

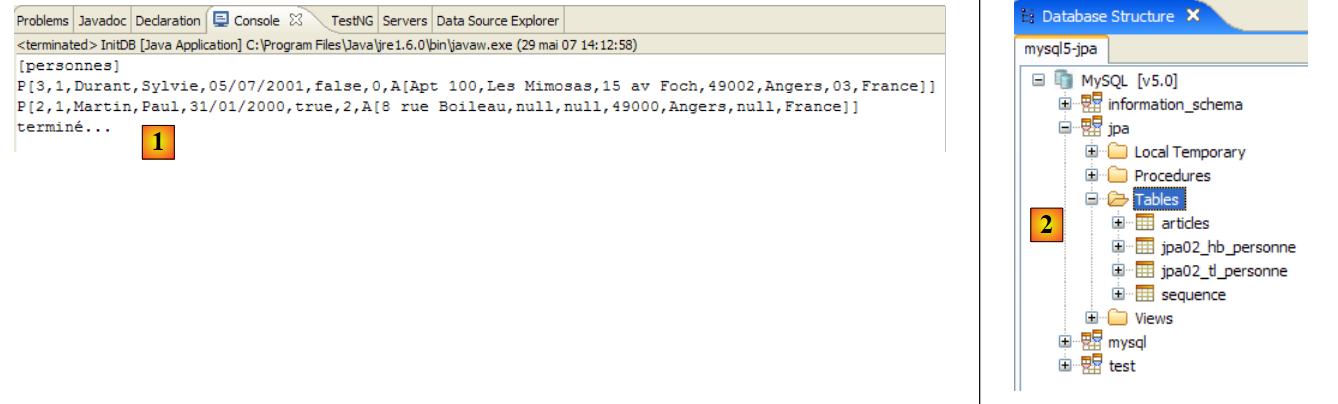

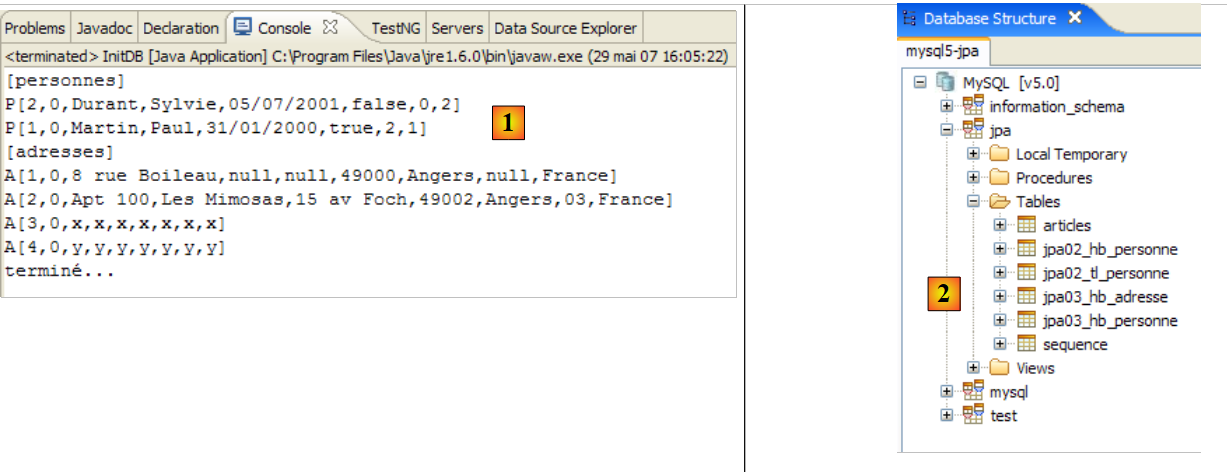

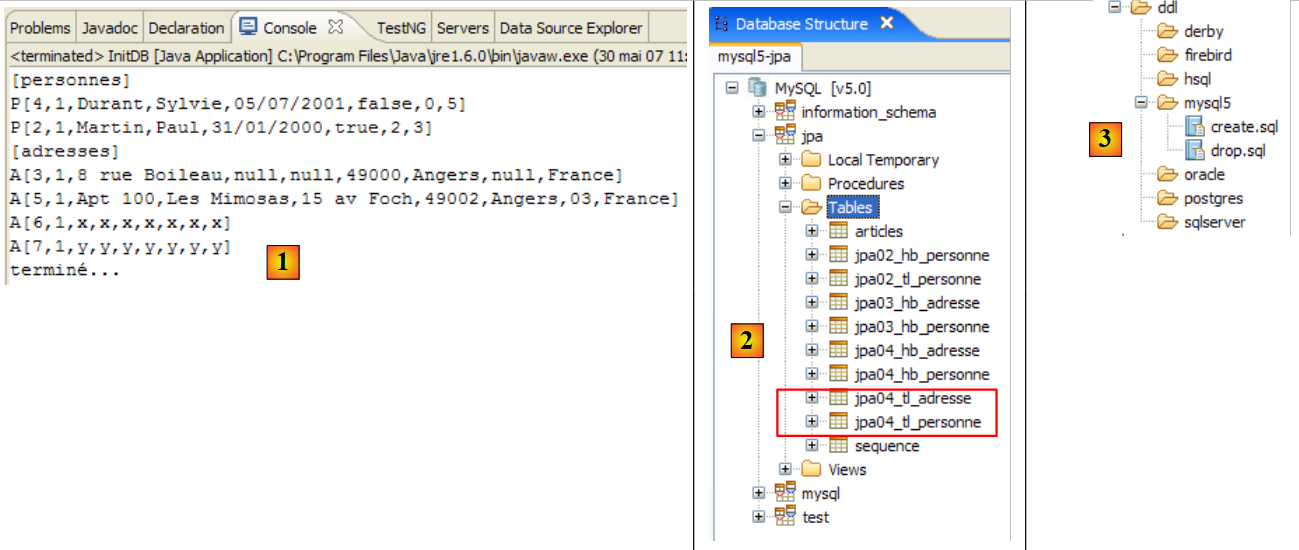

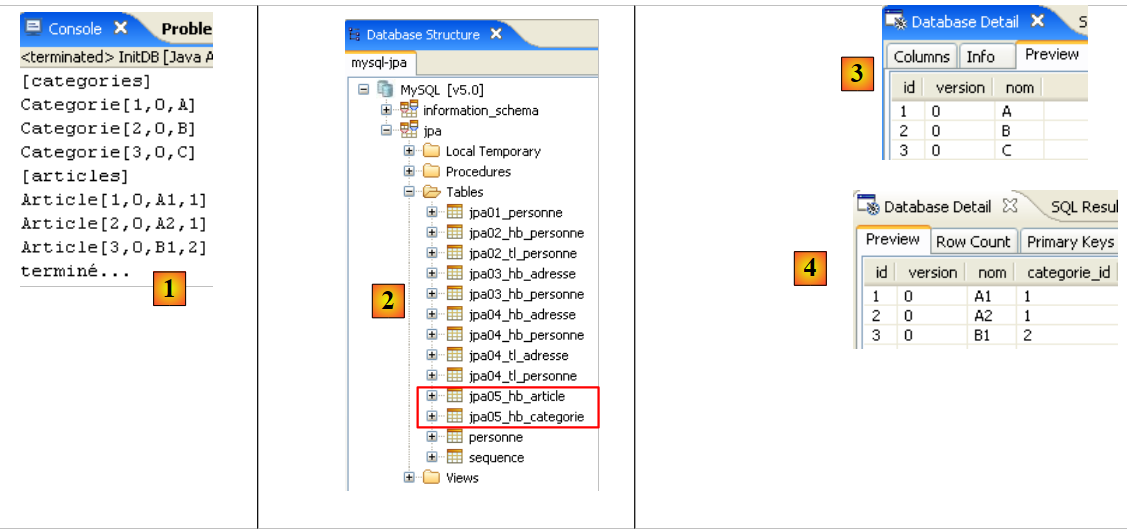

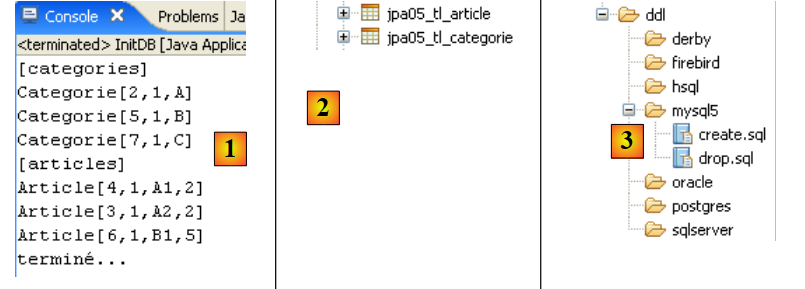

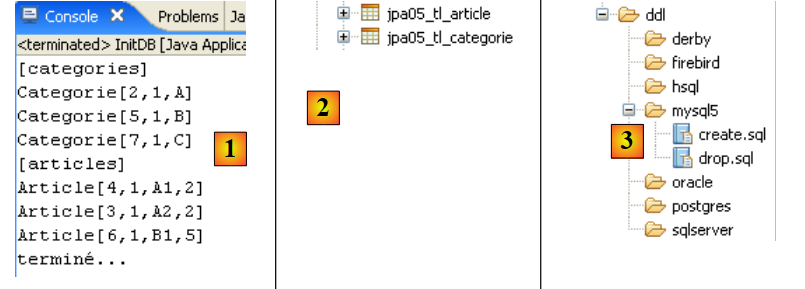

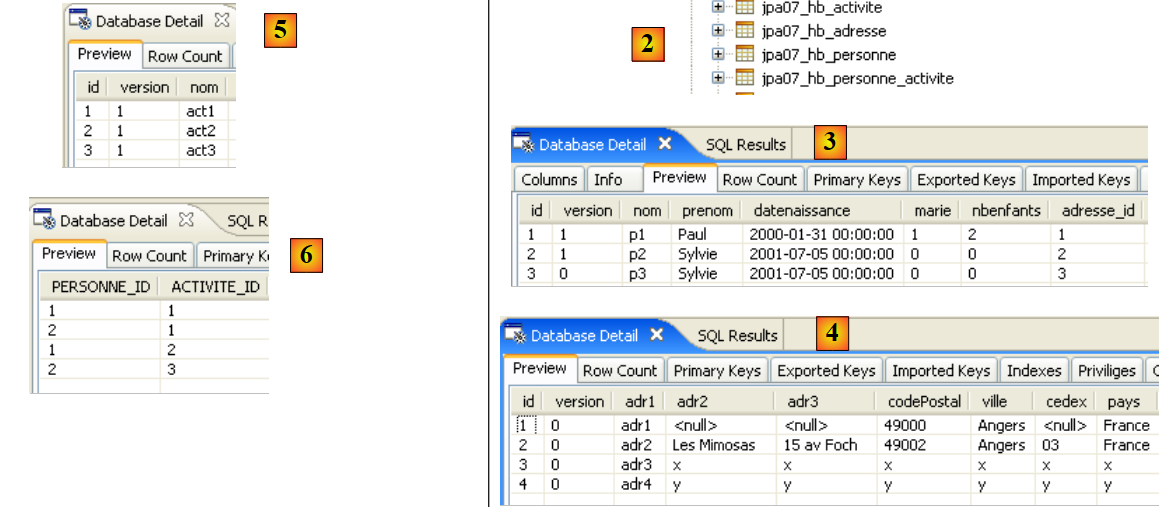

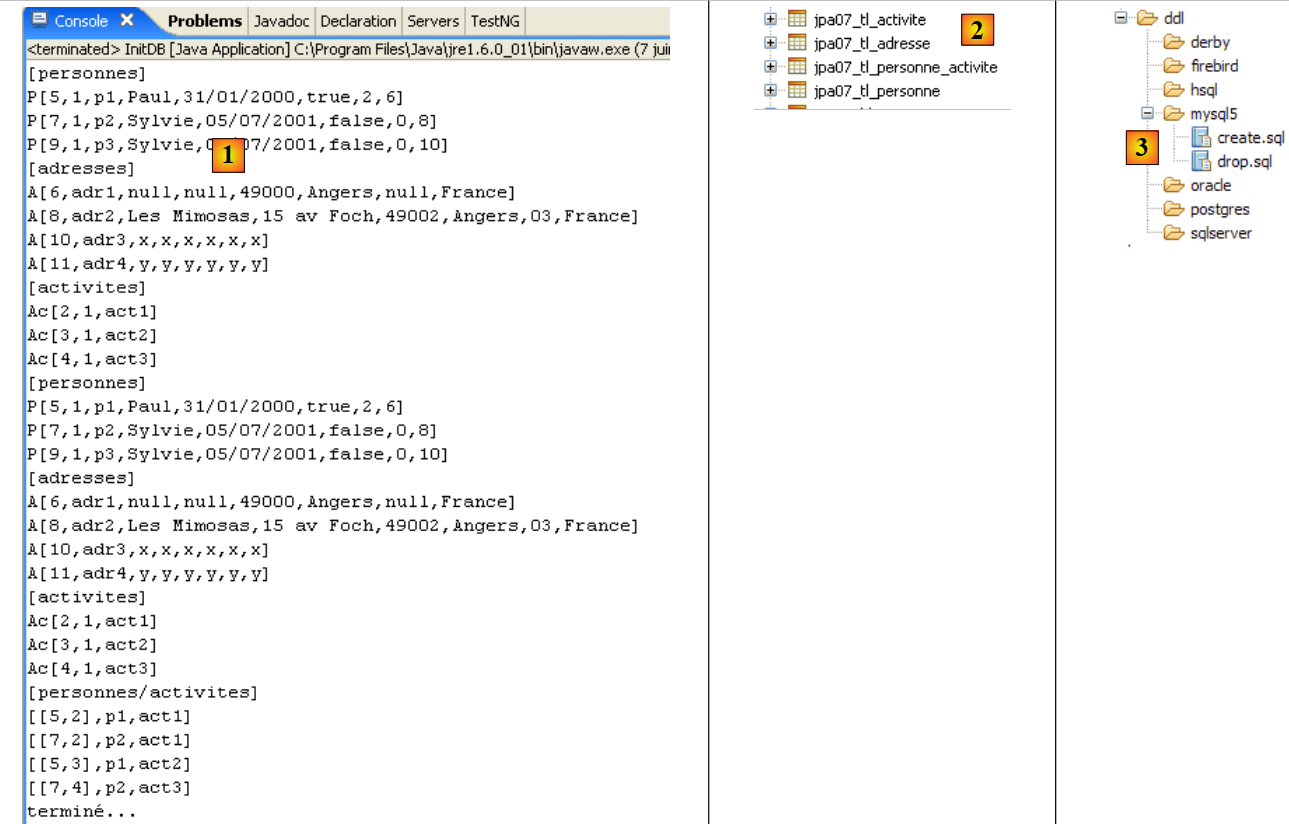

|

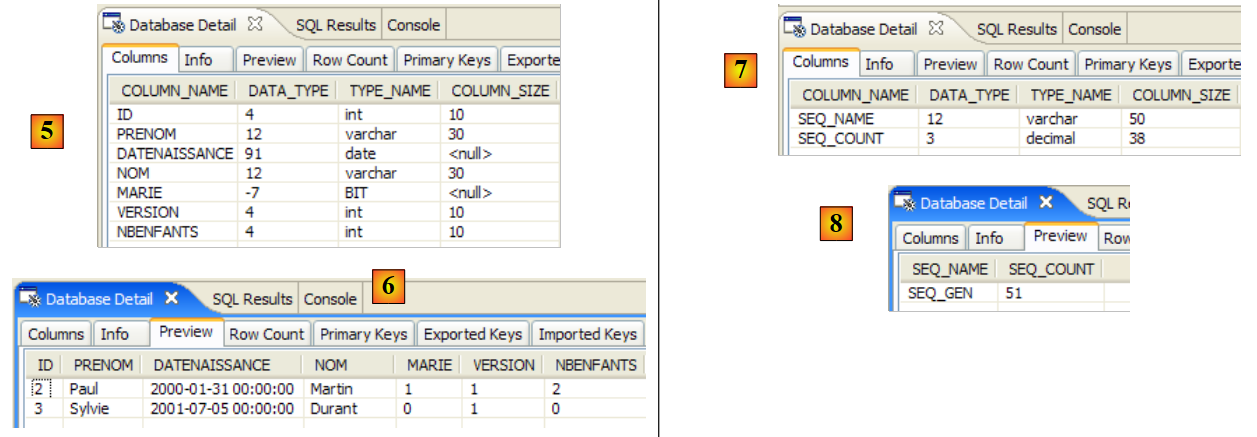

- in [1]: the console output in the Java perspective. The expected results are obtained.

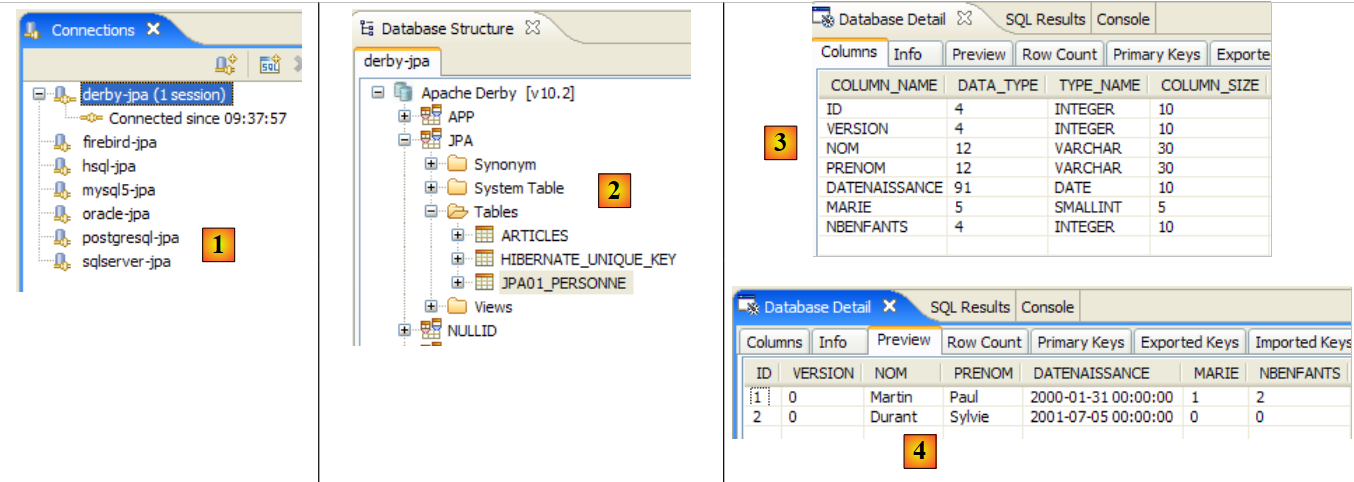

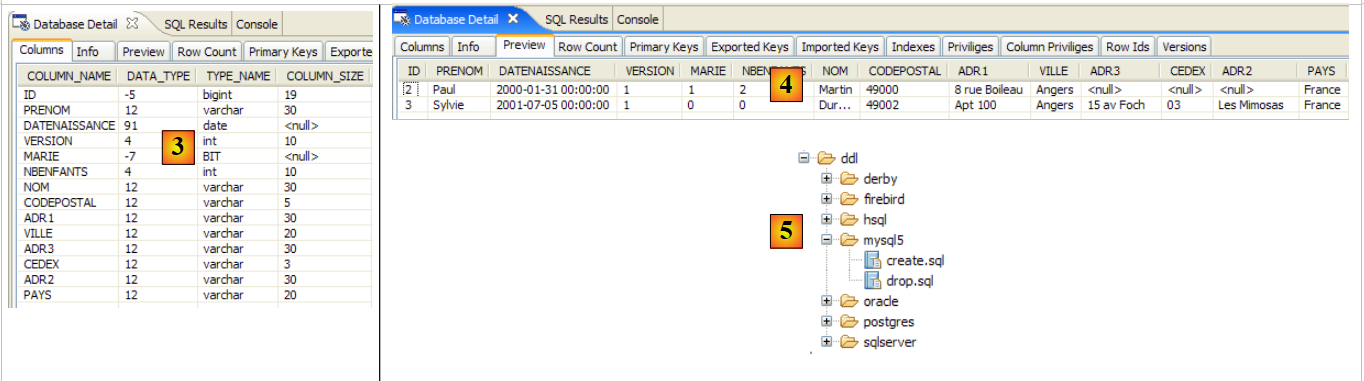

- in [2]: we verify the contents of the [jpa01_personne] table using the SQL Explorer view, as explained in section 2.1.8. Two points are worth noting:

- the primary key ID was generated automatically

- the same applies to the version number. We see that the first version has the number 0..

Here we have the first elements of the JPA framework. We have successfully inserted data into a table. We will build on this foundation to write the second test, but first let’s discuss logs.

2.1.11. Implementing Hibernate logs

It is possible to view the SQL statements sent to the database by the JPA/Hibernate layer. It is useful to examine these to see if the JPA layer is as efficient as a developer who had written the SQL statements themselves.

With JPA/Hibernate, SQL logging can be configured in the [persistence.xml] file:

<!-- Classes persistantes -->

<property name="hibernate.archive.autodetection" value="class, hbm" />

<!-- logs SQL

<property name="hibernate.show_sql" value="true"/>

<property name="hibernate.format_sql" value="true"/>

<property name="use_sql_comments" value="true"/>

-->

<!-- connexion JDBC -->

<property name="hibernate.connection.driver_class" value="com.mysql.jdbc.Driver" />

- Lines 4–6: SQL logs were not enabled at this point. We enable them now by removing the comment tags from lines 3 and 7.

We rerun the [InitDB] application. The console output then becomes as follows:

- Lines 2-4: The SQL DELETE statement resulting from the command:

// supprimer les éléments de la table des personnes

em.createNativeQuery("delete from " + TABLE_NAME).executeUpdate();

- lines 5-18: the SQL insert statements from the instructions:

// persistance des personnes

em.persist(p1);

em.persist(p2);

- lines 21-32: the SQL SELECT statement resulting from the instruction:

for (Object p : em.createQuery("select p from Personne p order by p.nom asc").getResultList())

If we perform intermediate console prints, we will see that the SQL logs for a statement I in the Java code are written when statement I is executed. This does not mean that the displayed SQL statement is executed on the database at that moment. It is actually cached for execution during the next synchronization of the persistence context with the database.

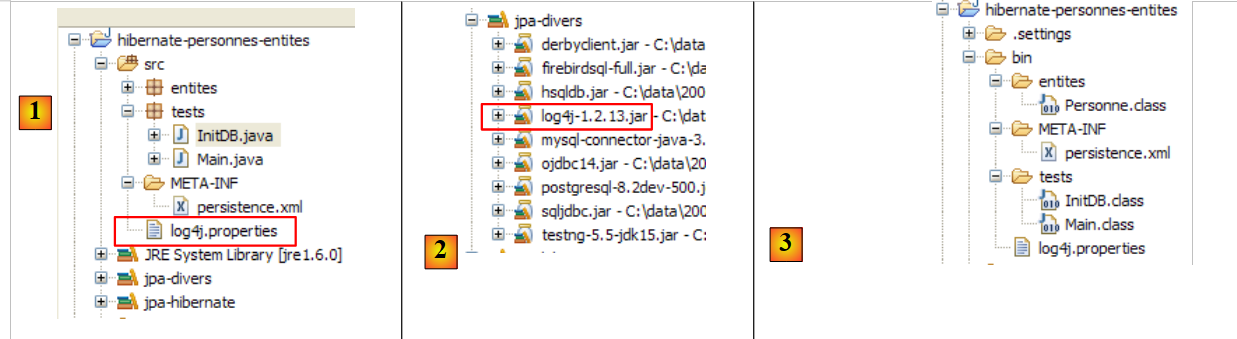

Additional logs can be obtained via the [src/log4j.properties] file:

|

- In [1], the [log4j.properties] file is used by the [log4j-1.2.13.jar] [2] archive from the tool called LOG4j (Logs for Java), available at the URL [http://logging.apache.org/log4j/docs/index.html]. Placed in the [src] folder of the Eclipse project, we know that [log4j.properties] will be automatically copied to the [bin] folder of the project [3]. Once this is done, it is now in the project’s classpath, and that is where the [2] archive will retrieve it.

The [log4j.properties] file allows us to control certain Hibernate logs. In previous runs, its contents were as follows:

# Direct log messages to stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Target=System.out

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d{ABSOLUTE} %5p %c{1}:%L - %m%n

# Root logger option

log4j.rootLogger=ERROR, stdout

# Hibernate logging options (INFO only shows startup messages)

#log4j.logger.org.hibernate=INFO

# Log JDBC bind parameter runtime arguments

#log4j.logger.org.hibernate.type=DEBUG

I won’t comment much on this configuration since I’ve never taken the time to seriously learn about LOG4j.

- Lines 1–8 are found in all log4j.properties files I have encountered

- Lines 10–14 are present in the log4j.properties files of the Hibernate examples.

- Line 11: controls Hibernate’s general logs. Since the line is commented out, these logs are disabled here. There are several log levels: INFO (general information about what Hibernate is doing), WARN (Hibernate warns us of a potential problem), DEBUG (detailed logs). The INFO level is the least verbose, while DEBUG mode is the most verbose. Enabling line 11 allows you to see what Hibernate is doing, particularly when the application starts up. This is often useful.

- Line 12, if enabled, allows you to see the actual arguments used when executing parameterized SQL queries.

Let’s start by uncommenting line 14

# Log JDBC bind parameter runtime arguments

log4j.logger.org.hibernate.type=DEBUG

and rerun [InitDB]. The new logs generated by this change are as follows (partial view):

- Lines 8–10 are new logs generated by enabling line 14 of [log4j.properties]. They indicate the 5 values assigned to the formal parameters ? of the parameterized query in lines 2–7. Thus, we see that the VERSION column will receive the value 0 (line 8).

Now let’s enable line 11 of [log4j.properties]:

and rerun [InitDB]:

Reading these logs provides a lot of interesting information:

- line 7: Hibernate indicates the name of an @Entity class it has found

- line 8: indicates that the [Person] class will be mapped to the [jpa01_person] table

- line 9: indicates the C3P0 connection pool that will be used, the name of the JDBC driver, and the URL of the database to be managed

- line 10: provides additional details about the JDBC connection: owner, commit type, etc.

- line 14: the dialect used to communicate with the DBMS

- line 15: the type of transaction used. JDBCTransactionFactory indicates that the application manages its own transactions. It does not run in an EJB3 container that would provide its own transaction service.

- The following lines relate to Hibernate configuration options that we have not encountered. Interested readers are encouraged to consult the Hibernate documentation.

- Line 37: SQL statements will be displayed on the console. This was requested in [persistence.xml]:

<property name="hibernate.show_sql" value="true" />

<property name="hibernate.format_sql" value="true" />

<property name="use_sql_comments" value="true" />

- Lines 43–45: The database schema is exported to the DBMS, i.e., the database is emptied and then recreated. This mechanism stems from the configuration in [persistence.xml] (line 4 below):

...

<property name="hibernate.connection.password" value="jpa" />

<!-- création automatique du schéma -->

<property name="hibernate.hbm2ddl.auto" value="create" />

<!-- Dialecte -->

...

When an application "crashes" with a Hibernate exception that you don't understand, start by enabling Hibernate logs in DEBUG mode in [log4j.properties] to get a clearer picture:

# Root logger option

log4j.rootLogger=ERROR, stdout

# Hibernate logging options (INFO only shows startup messages)

log4j.logger.org.hibernate=DEBUG

In the rest of this document, logging is disabled by default to ensure a more readable console output.

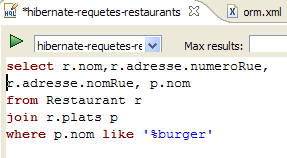

2.1.12. Exploring the JPQL/HQL language with the Hibernate console

Note: This section requires the Hibernate Tools plugin (section 5.2.5).

In the code for the [InitDB] application, we used a JPQL query. JPQL (Java Persistence Query Language) is a language for querying the persistence context. The query used was as follows:

It selected all records from the table associated with the @Entity [Person] and returned them in ascending order by name. In the query above, p.name is the name field of an instance p of the [Person] class. A JPQL query therefore operates on the @Entity objects in the persistence context and not directly on the database tables. The JPA layer translates this JPQL query into an SQL query appropriate for the DBMS it is working with. Thus, in the case of a JPA/Hibernate implementation connected to a MySQL5 DBMS, the previous JPQL query is translated into the following SQL query:

select

personne0_.ID as ID0_,

personne0_.VERSION as VERSION0_,

personne0_.NOM as NOM0_,

personne0_.PRENOM as PRENOM0_,

personne0_.DATENAISSANCE as DATENAIS5_0_,

personne0_.MARIE as MARIE0_,

personne0_.NBENFANTS as NBENFANTS0_

from

jpa01_personne personne0_

order by

personne0_.NOM asc

The JPA layer used the configuration of the @Entity object [Person] to generate the correct SQL query. This is an example of the object-relational mapping being implemented here.

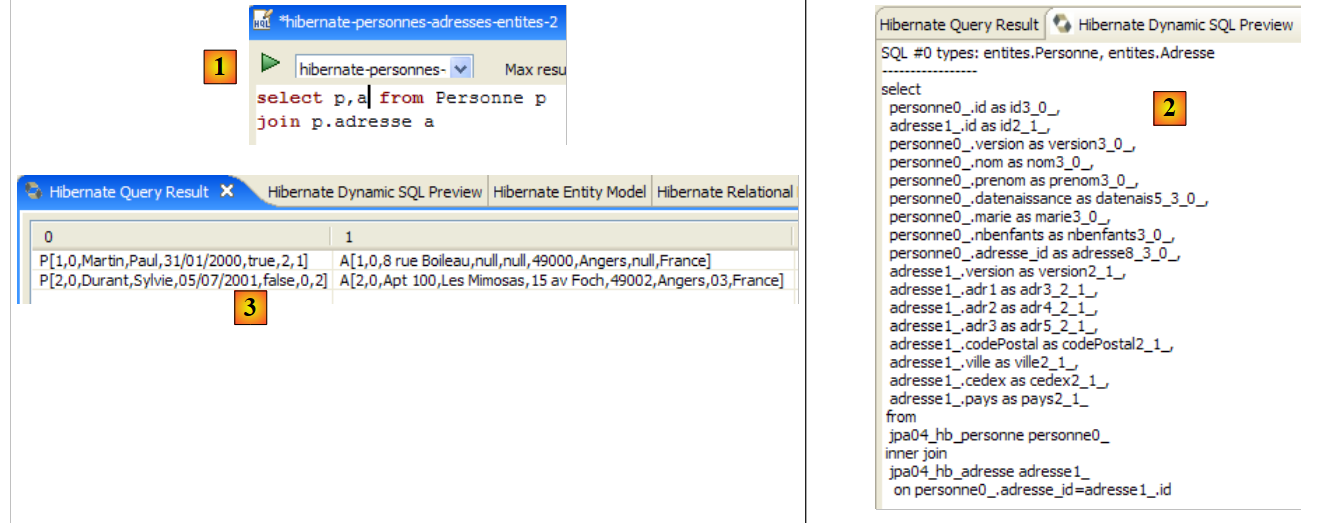

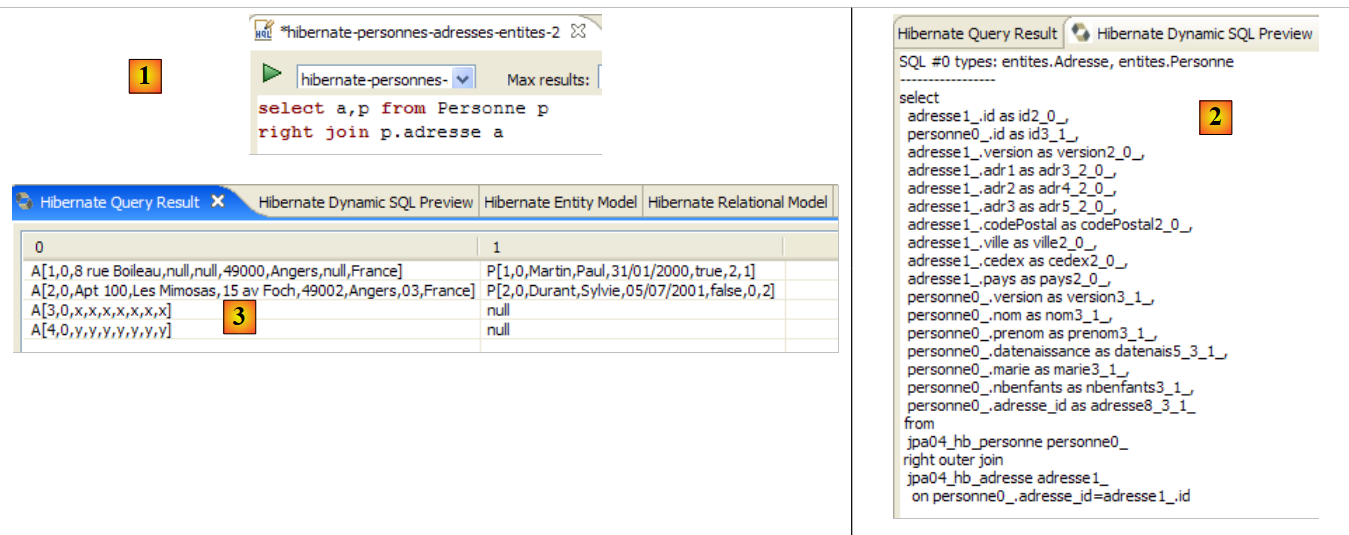

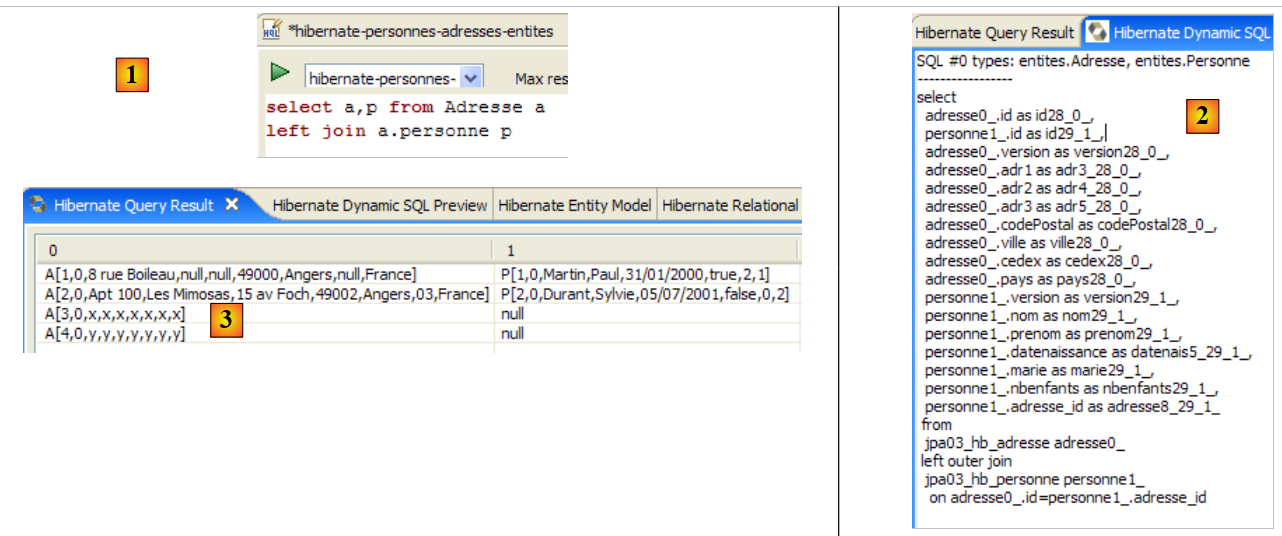

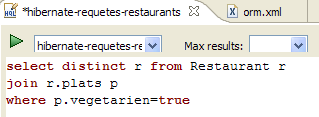

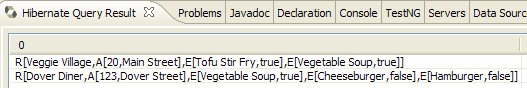

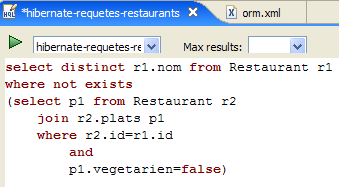

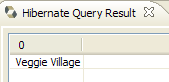

The [Hibernate Tools] plugin (Section 5.2.5) offers a tool called "Hibernate Console" that allows

- you to issue JPQL or HQL (Hibernate Query Language) queries on the persistence context

- to retrieve the results

- to see the SQL equivalent that was executed on the database

The Hibernate Console is an invaluable tool for learning the JPQL language and becoming familiar with the JPQL/SQL bridge. It is well known that JPA drew heavily on ORM tools such as Hibernate or TopLink. JPQL is very similar to Hibernate’s HQL but does not include all of its features. In the Hibernate console, you can issue HQL commands that will execute normally in the console but are not part of the JPQL language and therefore cannot be used in a JPA client. When this is the case, we will point it out.

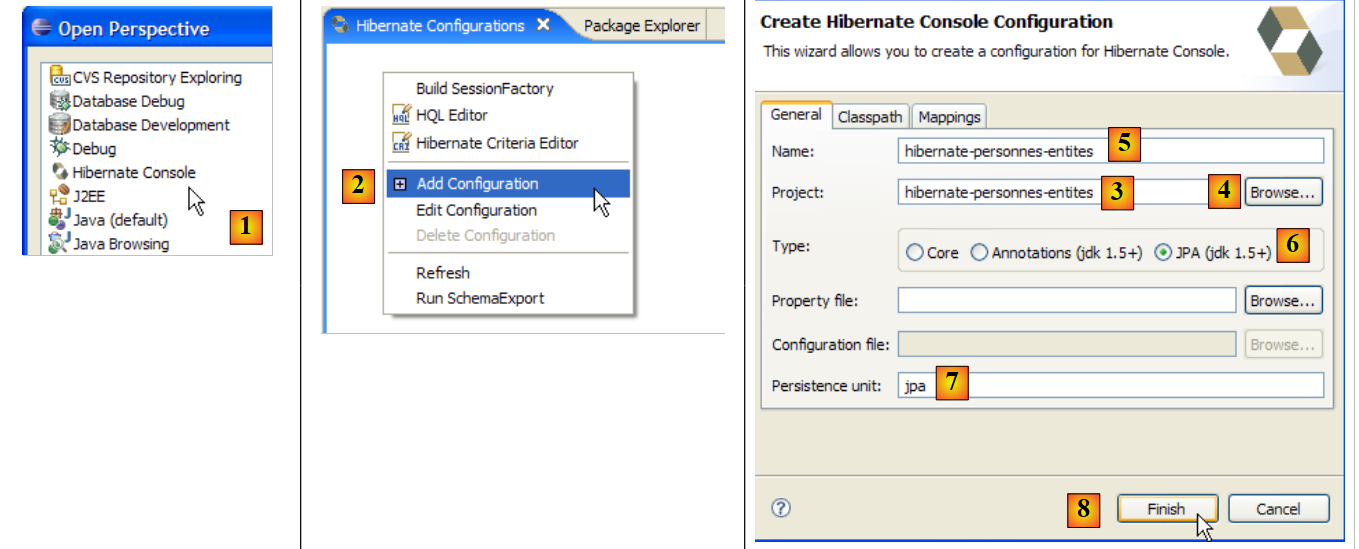

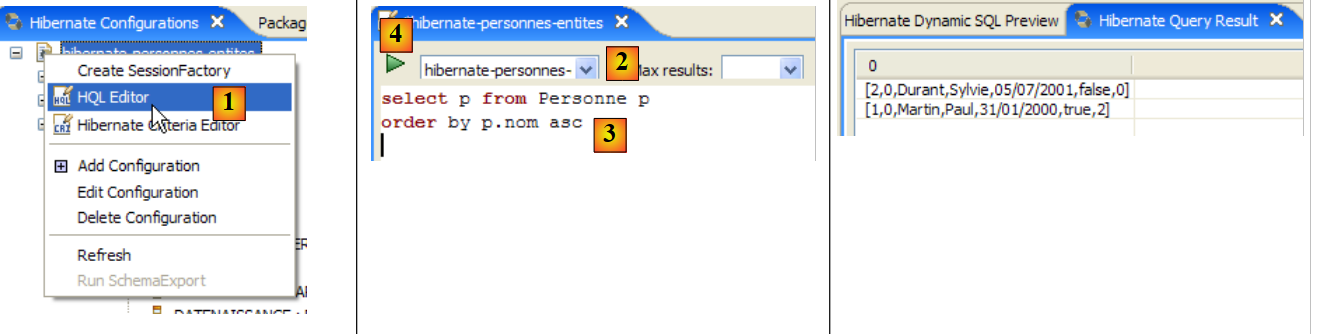

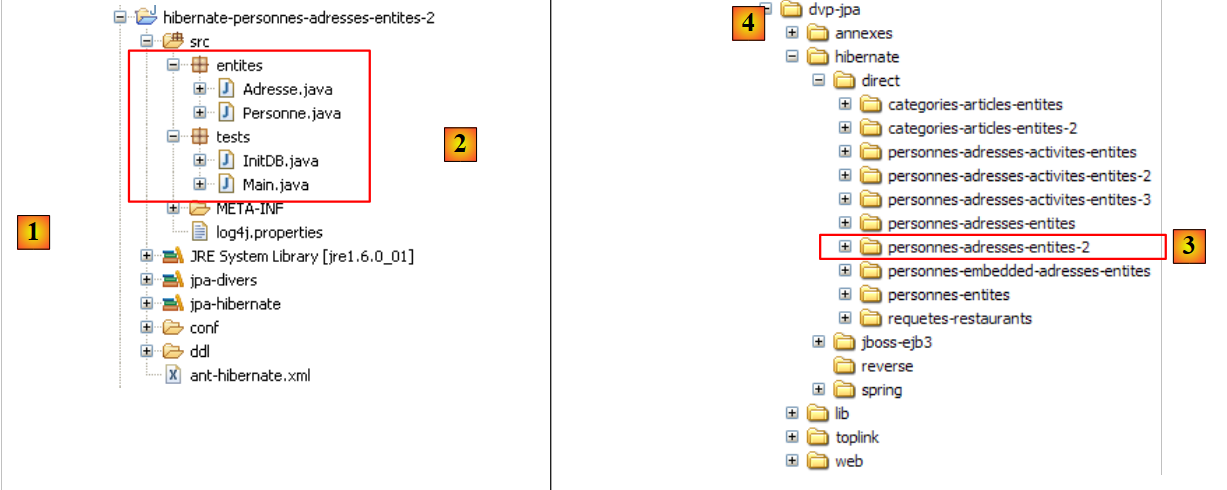

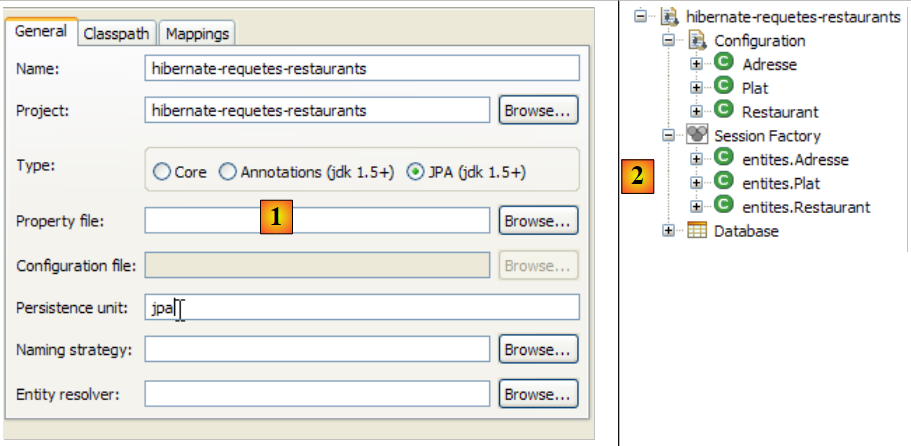

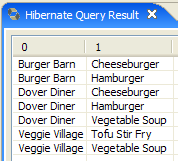

Let’s create a Hibernate console for our current Eclipse project:

|

- [1]: Switch to the [Hibernate Console] perspective (Window / Open Perspective / Other)

- [2]: We create a new configuration in the [Hibernate Configuration] window

- using the [4] button, we select the Java project for which the Hibernate configuration is being created. Its name appears in [3].

- In [5], we enter the name we want for this configuration. Here, we’ve used [3].

- In [6], we specify that we are using a JPA configuration so that the tool knows it must use the [META-INF/persistence.xml] file

- In [7], we specify that in this [META-INF/persistence.xml] file, the persistence unit named jpa should be used.

- In [8], we validate the configuration.

Next, the DBMS must be started. Here, we are using MySQL 5.

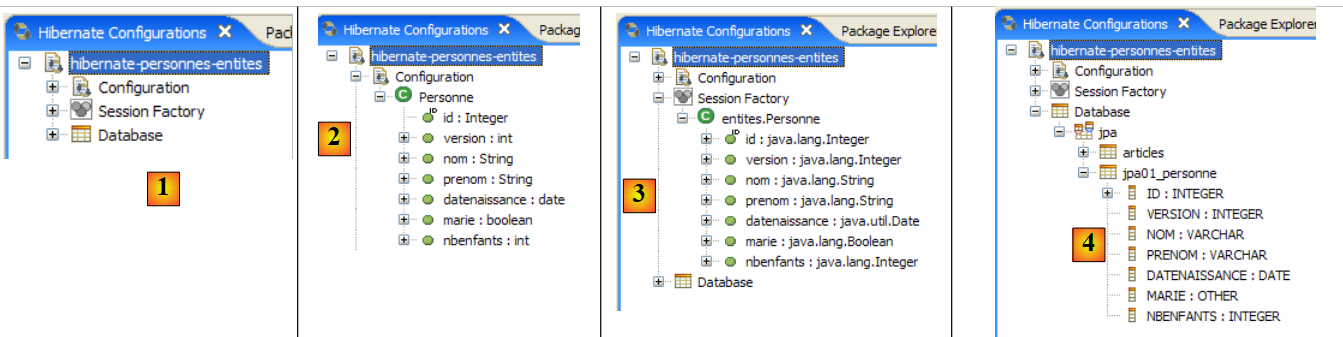

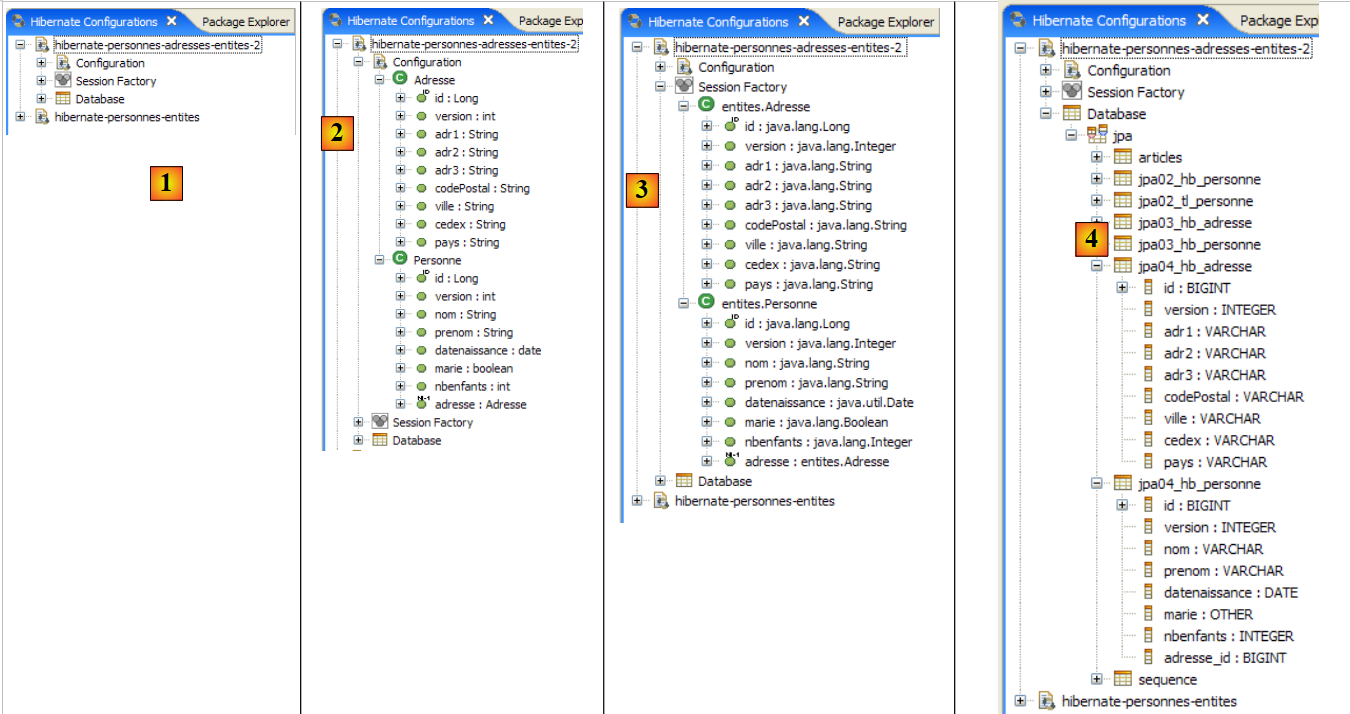

|

- In [1]: The created configuration displays a three-branch tree

- In [2]: The [Configuration] branch lists the objects the console used to configure itself: here, the @Entity Person.

- In [3]: The Session Factory is a Hibernate concept similar to JPA’s EntityManager. It bridges the object-relational gap using the objects in the [Configuration] branch. In [3], the objects of the persistence context are shown; here, again, the @Entity Person.

- in [4]: the database accessed via the configuration found in [persistence.xml]. The [jpa01_personne] table is found there.

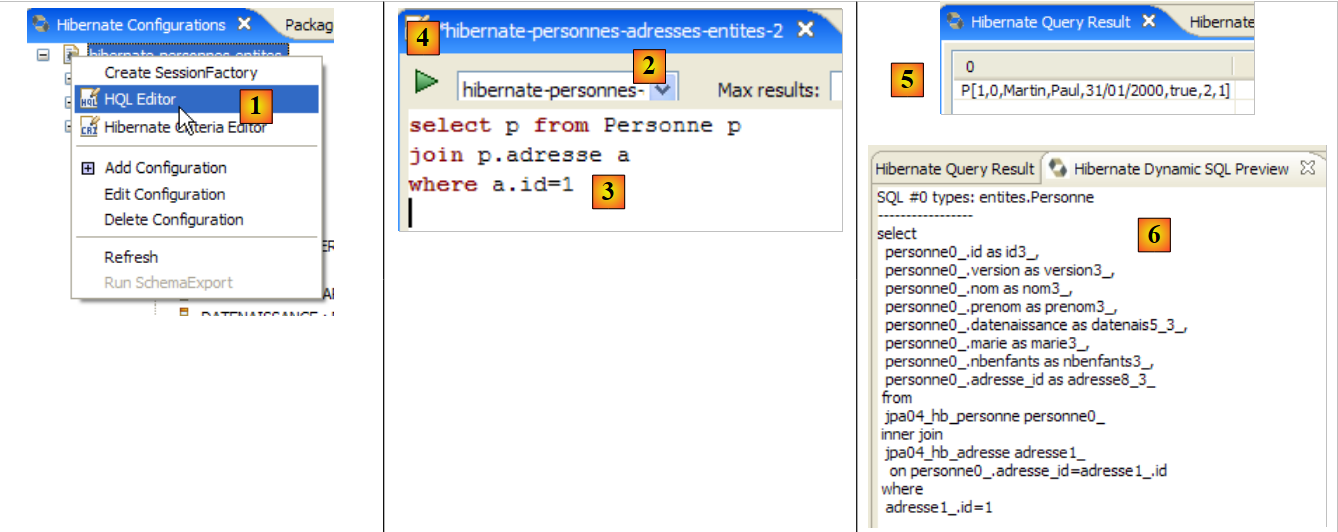

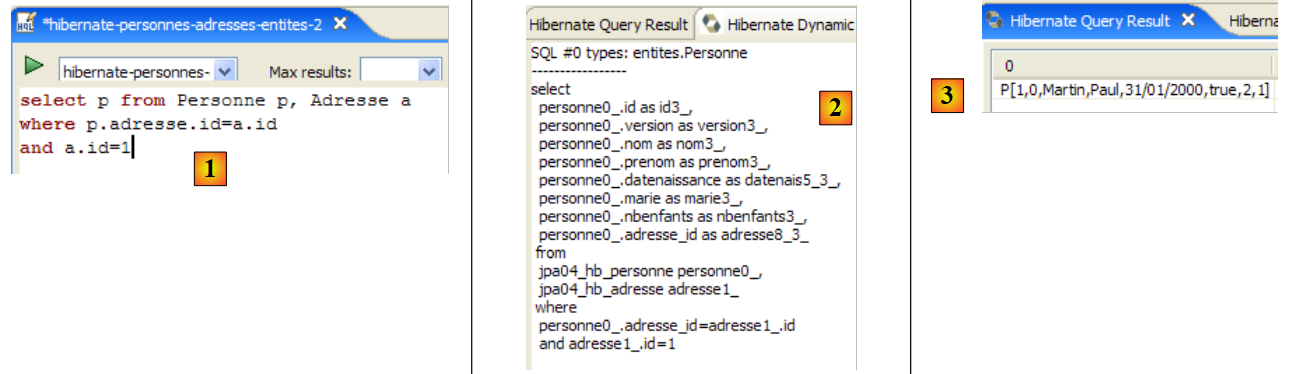

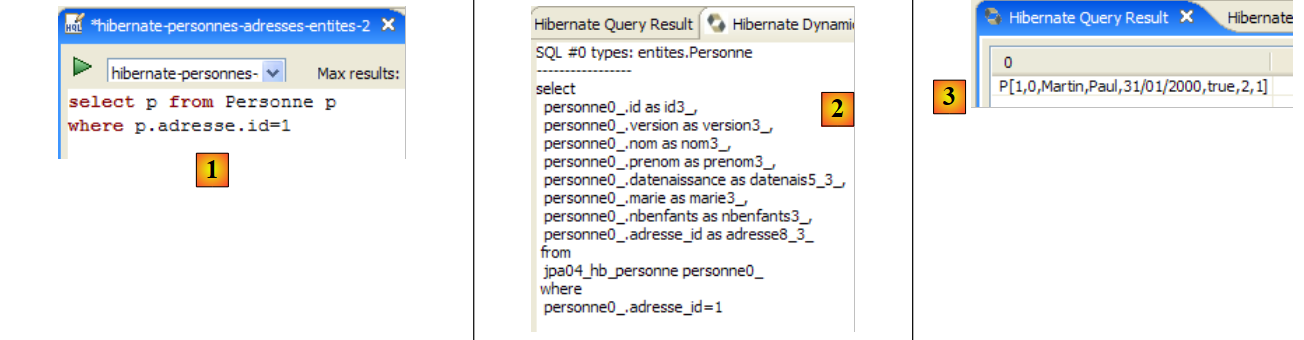

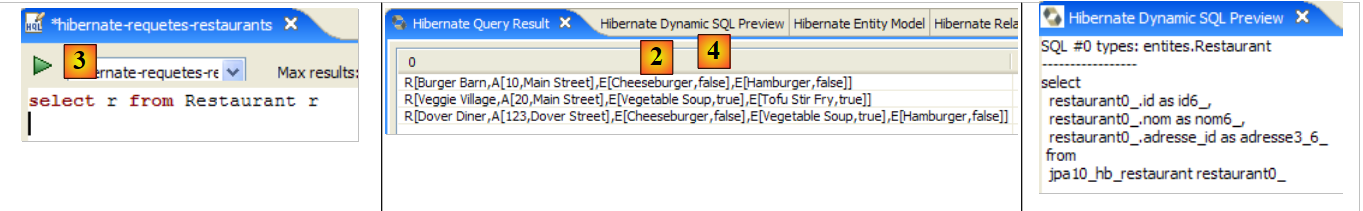

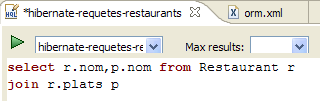

|

- In [1], we create an HQL editor

- in the HQL editor,

- in [2], we select the Hibernate configuration to use if there are multiple

- in [3], we type the JPQL command we want to execute

- in [4], execute it

- In [5], you get the query results in the [Hibernate Query Result] window. You may encounter two issues here:

- You get nothing (no rows). The Hibernate console used the contents of [persistence.xml] to establish a connection with the DBMS. However, this configuration has a property that instructs the database to be emptied:

<property name="hibernate.hbm2ddl.auto" value="create" />

You must therefore rerun the [InitDB] application before re-executing the JPQL command above.

- (continued)

- The [Hibernate Query Result] window is not displayed. You can open it via [Window / Show View / ...]

The [Hibernate Dynamic SQL preview] window ([1] below) allows you to see the SQL query that will be executed to run the JPQL command you are currently writing. As soon as the JPQL command syntax is correct, the corresponding SQL command appears in this window:

|

- In [2], you can clear the previous HQL command

- At [3], you execute a new one

- at [4], the result

- in [5], the SQL command that was executed on the database

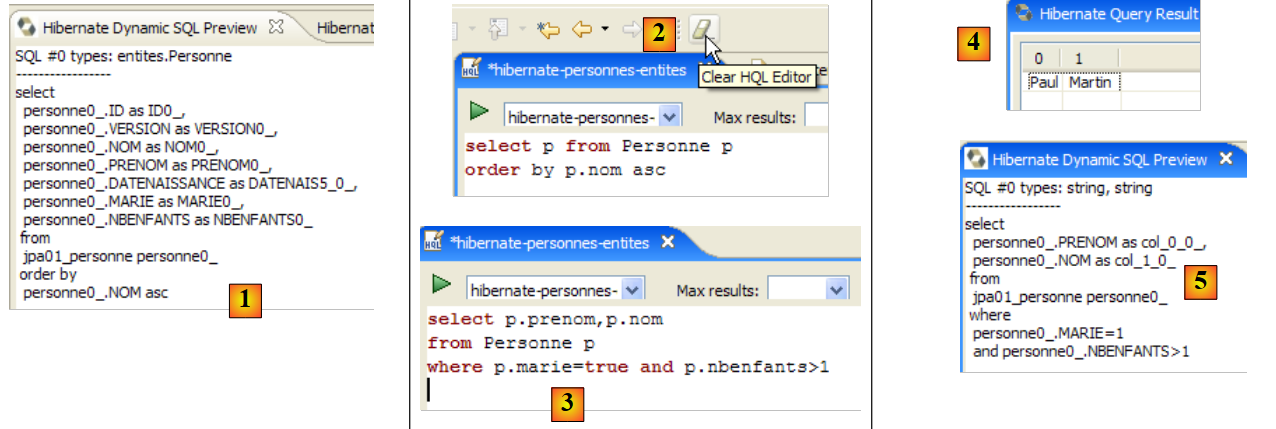

The HQL editor provides assistance for writing HQL commands:

|

- in [1]: once the editor knows that p is a Person object, it can suggest p’s fields as you type.

- in [2]: an incorrect HQL query. You must write where p.marie=true.

- in [3]: the error is reported in the [SQL Preview] window

We invite the reader to issue other HQL/JPQL commands on the database.

2.1.13. A second JPA client

Let’s return to the Java perspective of the project:

|

- [InitDB.java] is a program that inserted a few rows into the [jpa01_personne] table in the database. Studying its code allowed us to grasp the basics of the JPA API.

- [Main.java] is a program that performs CRUD operations on the [jpa01_personne] table. Examining its code will allow us to revisit the fundamental concepts of the persistence context and the lifecycle of objects within that context.

2.1.13.1. The structure of the code

[Main.java] will run a series of tests, each designed to demonstrate a specific aspect of JPA:

|

The [main] method

- successively calls the methods test1 through test11. We will present the code for each of these methods separately.

- It also uses private utility methods: clean, dump, log, getEntityManager, getNewEntityManager.

We present the main method and the so-called utility methods:

package tests;

...

import entites.Personne;

@SuppressWarnings("unchecked")

public class Main {

// constant

private final static String TABLE_NAME = "jpa01_personne";

// Persistence context

private static EntityManagerFactory emf = Persistence.createEntityManagerFactory("jpa");

private static EntityManager em = null;

// shared objects

private static Personne p1, p2, newp1;

public static void main(String[] args) throws Exception {

// base cleaning

log("clean");clean();

// dump table

dump();

// test1

log("test1");test1();

...

// test11

log("test11");test11();

// fine persistence context

if (em.isOpen())

em.close();

// closure EntityManagerFactory

emf.close();

}

// retrieve the current EntityManager

private static EntityManager getEntityManager() {

if (em == null || !em.isOpen()) {

em = emf.createEntityManager();

}

return em;

}

// pick up a new EntityManager

private static EntityManager getNewEntityManager() {

if (em != null && em.isOpen()) {

em.close();

}

em = emf.createEntityManager();

return em;

}

// table content display

private static void dump() {

// current persistence context

EntityManager em = getEntityManager();

// start of transaction

EntityTransaction tx = em.getTransaction();

tx.begin();

// people display

System.out.println("[personnes]");

for (Object p : em.createQuery("select p from Personne p order by p.nom asc").getResultList()) {

System.out.println(p);

}

// end transaction

tx.commit();

}

// raz BD

private static void clean() {

// persistence context

EntityManager em = getEntityManager();

// start of transaction

EntityTransaction tx = em.getTransaction();

tx.begin();

// delete elements from the PERSONNES table

em.createNativeQuery("delete from " + TABLE_NAME).executeUpdate();

// end transaction

tx.commit();

}

// logs

private static void log(String message) {

System.out.println("main : ----------- " + message);

}

// object creation

public static void test1() throws ParseException {

...

}

// modify a context object

public static void test2() {

...

}

// request items

public static void test3() {

...

}

// delete an object belonging to the persistence context

public static void test4() {

....

}

// detach, reattach and modify

public static void test5() {

...

}

// delete an object not belonging to the persistence context

public static void test6() {

...

}

// modify an object not belonging to the persistence context

public static void test7() {

...

}

// reattach an object to the persistence context

public static void test8() {

...

}

// a select request causes synchronization

// with the persistence context

public static void test9() {

....

}

// version control (optimistic locking)

public static void test10() {

...

}

// transaction rollback

public static void test11() throws ParseException {

...

}

}

- Line 13: The EntityManagerFactory object (emf) is constructed from the JPA persistence unit defined in [persistence.xml]. It will allow us to create various persistence contexts throughout the application.

- line 14: an EntityManager persistence context that has not yet been initialized

- line 17: three [Person] objects shared by the tests

- Line 21: The jpa01_personne table is cleared and then displayed on line 24 to ensure that we are starting with an empty table.

- Lines 27–31: sequence of tests

- Lines 34–35: Close the persistence context if it was open.

- Line 38: The EntityManagerFactory object emf is closed.

- Lines 42–47: The [getEntityManager] method returns the current EntityManager (or persistence context) or creates a new one if it does not exist (lines 43–44).

- lines 50-56: the [getNewEntityManager] method returns a new persistence context. If one existed previously, it is closed (lines 51-52)

- lines 59-72: the [dump] method displays the contents of the [jpa01_personne] table. This code has already been encountered in [InitDB].